Cache Design: An Overview

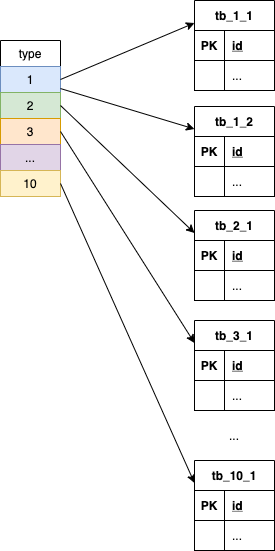

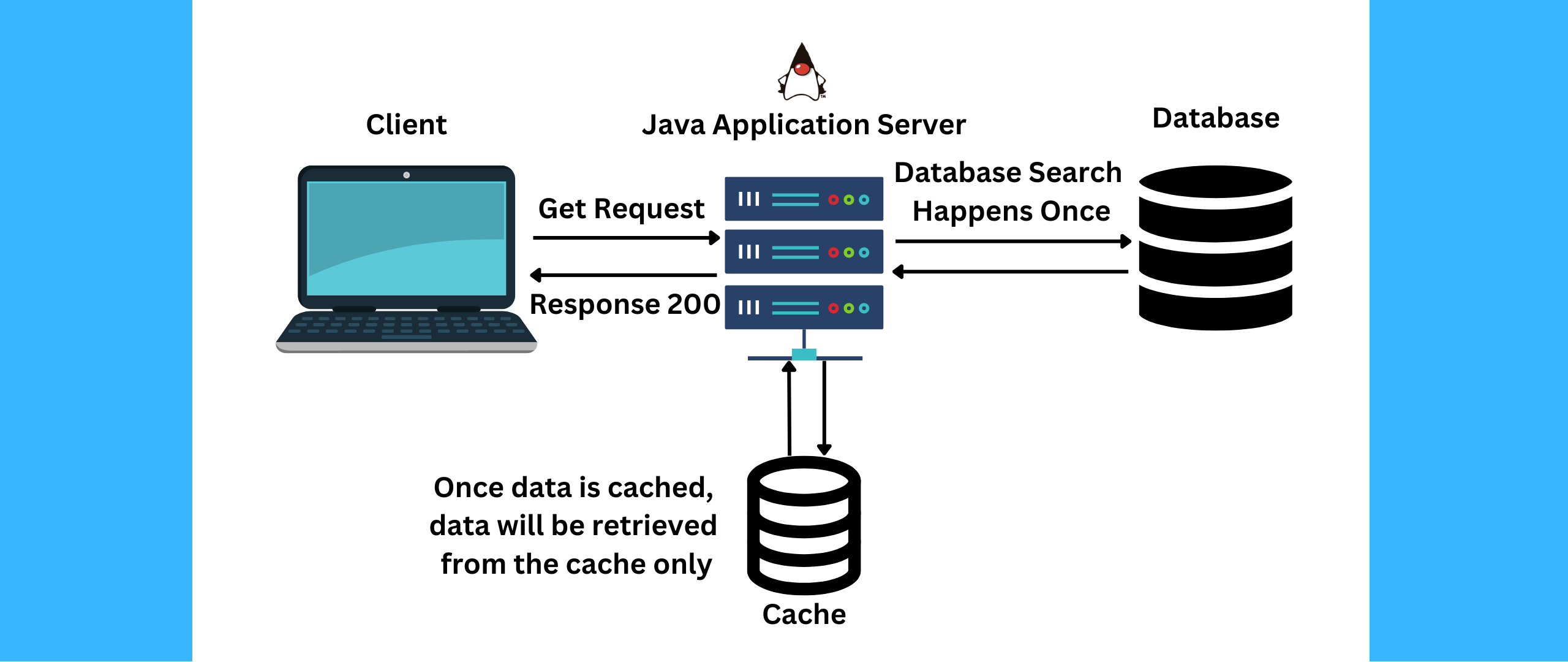

This overview introduces key aspects of cache design, including block placement, replacement strategies, and write mechanisms for cache hits and misses. Writing these out is also good practice for multi-layer caching design (browser, CDN, and server caches, TTLs, and cache invalidation plans), which is crucial for real-world performance engineering.
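To make block placement concrete, here is a minimal sketch of how a set-indexed cache could split a memory address into tag, index, and offset fields. The function name `split_address` and the default sizes (64-byte blocks, 256 sets) are assumptions chosen for illustration, not values taken from any particular processor.

```python
def split_address(addr: int, block_size: int = 64, num_sets: int = 256) -> tuple[int, int, int]:
    """Split an address into (tag, index, offset).

    block_size and num_sets are illustrative assumptions; both must be
    powers of two so that the fields fall on clean bit boundaries.
    """
    for name, value in (("block_size", block_size), ("num_sets", num_sets)):
        if value <= 0 or value & (value - 1):
            raise ValueError(f"{name} must be a positive power of two")

    offset_bits = block_size.bit_length() - 1  # log2(block_size)
    index_bits = num_sets.bit_length() - 1     # log2(num_sets)

    offset = addr & (block_size - 1)                # byte within the block
    index = (addr >> offset_bits) & (num_sets - 1)  # which set the block maps to
    tag = addr >> (offset_bits + index_bits)        # identifies the block within its set
    return tag, index, offset


if __name__ == "__main__":
    tag, index, offset = split_address(0x12345678)
    print(hex(tag), hex(index), hex(offset))
```

Two addresses that differ by exactly `block_size * num_sets` bytes land in the same set with different tags. In a direct-mapped cache they would evict each other, which is one reason associativity and replacement strategy matter.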

Cache design is a crucial aspect of memory hierarchy optimization in computer architecture. It involves creating small, fast memory units close to the processor to store frequently accessed data, reducing average memory access time and improving overall system performance. The goal is to maximize the speed and performance of cache memory while minimizing complexity and power consumption, for example through techniques such as the dual data cache (DDC) system, which optimizes data access patterns and reduces transistor count. This requires sophisticated algorithms and hardware mechanisms for cache management, including cache replacement policies, coherence protocols, and cache consistency maintenance. Open design questions remain: How should space be allocated to threads in a shared cache? Should some caches store data in compressed format? How can caches do better reuse prediction and management?
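As one illustration of a replacement policy, the sketch below implements least-recently-used (LRU) eviction in software. The class name `LRUCache` and its `get`/`put` methods are hypothetical names chosen for this example. Hardware caches typically approximate LRU per set rather than tracking exact order, so treat this as a model of the policy, not of any real cache controller.

```python
from collections import OrderedDict


class LRUCache:
    """A small software model of LRU replacement (illustrative only)."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self.capacity = capacity
        self._data: OrderedDict = OrderedDict()  # oldest entry first
        self.hits = 0
        self.misses = 0

    def get(self, key):
        """Return the cached value, or None on a miss."""
        if key in self._data:
            self._data.move_to_end(key)  # mark as most recently used
            self.hits += 1
            return self._data[key]
        self.misses += 1
        return None

    def put(self, key, value) -> None:
        """Insert or update a value, evicting the LRU entry if full."""
        if key in self._data:
            self._data.move_to_end(key)
        self._data[key] = value
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # drop the least recently used entry


if __name__ == "__main__":
    cache = LRUCache(capacity=2)
    cache.put("a", 1)
    cache.put("b", 2)
    cache.get("a")     # "a" becomes most recently used
    cache.put("c", 3)  # evicts "b", the least recently used entry
    print(cache.get("b"), cache.hits, cache.misses)
```

The hit and miss counters are kept so that a measured miss rate can be read straight off the model when comparing policies or capacities.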

The key elements of cache design include logical versus physical addresses, cache size, mapping functions, replacement algorithms, write policies, line size, and the number of caches. This post breaks down the core caching concepts, architectural choices, and management policies every engineer should understand when designing scalable systems, covering structural mapping, data management, and key performance metrics.

To improve cache performance, recall the average memory access time (AMAT) formula:

AMAT = hit time + miss rate × miss penalty

Any of the three components can be improved, and reducing the miss rate is a natural place to start.
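A short worked example can show how each term of AMAT (average memory access time, equal to hit time plus miss rate times miss penalty) contributes. The numbers below (a 1-cycle hit time, a 5% miss rate, and a 100-cycle miss penalty) are assumptions picked for easy arithmetic, not measurements from a real system, and the function name `amat` is likewise just for this sketch.

```python
def amat(hit_time: float, miss_rate: float, miss_penalty: float) -> float:
    """Average memory access time: hit time + miss rate * miss penalty.

    hit_time and miss_penalty share one unit (cycles here);
    miss_rate is a fraction between 0 and 1.
    """
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_time + miss_rate * miss_penalty


if __name__ == "__main__":
    # Assumed baseline: 1-cycle hit, 5% miss rate, 100-cycle penalty.
    baseline = amat(hit_time=1, miss_rate=0.05, miss_penalty=100)
    # Same cache with the miss rate halved.
    improved = amat(hit_time=1, miss_rate=0.025, miss_penalty=100)
    print(f"baseline: {baseline:.2f} cycles, improved: {improved:.2f} cycles")
```

With these assumed numbers, the miss term (0.05 × 100 = 5 cycles) dominates the 1-cycle hit time, so halving the miss rate takes AMAT from about 6 cycles to about 3.5.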

