Cache Coherence Wikiwand

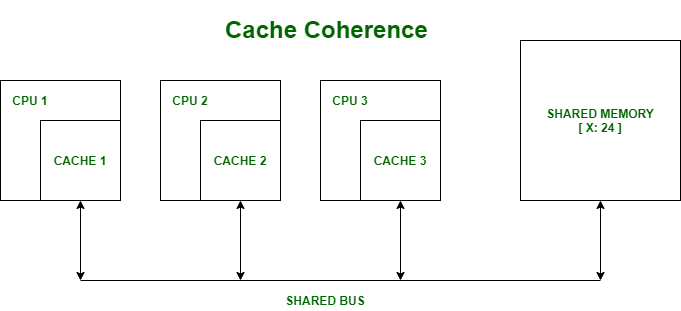

Cache Coherence (Wikiwand): Cache coherence is the discipline which ensures that changes in the values of shared operands (data) are propagated throughout the system in a timely fashion. In computer architecture, cache coherence is the uniformity of shared resource data that is stored in multiple local caches. In a cache-coherent system, if multiple clients have a cached copy of the same region of a shared memory resource, all copies are the same.
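To make the definition concrete, here is a minimal sketch. It assumes a single shared memory location, write-through caches, and a write-invalidate rule: a writer invalidates every other cached copy before updating its own. `CoherentMemory` and its methods are hypothetical names for illustration, not the API of any real simulator. `copies_agree` checks the property stated above, that every valid cached copy equals the current value.

```python
# Hypothetical toy model: N private caches over ONE shared memory location,
# kept coherent by an assumed write-invalidate rule (write-through for simplicity).

class CoherentMemory:
    def __init__(self, n_caches, initial=0):
        self.memory = initial
        self.caches = [None] * n_caches  # None means "no valid copy"

    def read(self, cpu):
        if self.caches[cpu] is None:      # miss: fetch the value from memory
            self.caches[cpu] = self.memory
        return self.caches[cpu]

    def write(self, cpu, value):
        # Invalidate every other copy, then update the writer's copy
        # and memory (write-through), so no stale copy can be read later.
        for other in range(len(self.caches)):
            if other != cpu:
                self.caches[other] = None
        self.caches[cpu] = value
        self.memory = value

    def copies_agree(self):
        # Coherence invariant: all valid cached copies hold the current value.
        valid = [c for c in self.caches if c is not None]
        return all(c == self.memory for c in valid)


m = CoherentMemory(3)
m.read(0)
m.read(1)                             # CPUs 0 and 1 now cache the value 0
m.write(2, 42)                        # CPU 2 writes; the other copies are invalidated
print(m.read(0), m.copies_agree())    # 42 True
```

Write-invalidate is only one way to propagate changes; a write-update scheme would instead push the new value into the other caches.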

Cache Coherence (GeeksforGeeks): The memory coherence problem exists because there is both global storage (main memory) and per-processor local storage (processor caches) implementing the abstraction of a single shared address space. Because multiple processors operate in parallel and independently, multiple caches may hold different copies of the same memory block, which creates the cache coherence problem. The idea: cache coherence aims to make the caches of a shared-memory system as functionally invisible as the caches of a single-core system. Cache coherence refers to the mechanism that ensures agreement among the various entities in a shared-memory system regarding the order of values observed at a storage location, particularly in the presence of caches.
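The problem can be reproduced with a small hypothetical model: two processors with private write-back caches and no coherence protocol at all. `IncoherentSystem`, `load`, and `store` are illustrative names only. After P0 stores a new value into its own cache, P1 still reads its old copy, so the two caches disagree about the same memory block and main memory holds yet another (stale) view.

```python
# Hypothetical model: per-processor write-back caches with NO coherence protocol,
# all implementing one shared address space backed by main memory.

class IncoherentSystem:
    def __init__(self, n_cpus, memory):
        self.memory = dict(memory)                     # global storage (main memory)
        self.caches = [dict() for _ in range(n_cpus)]  # per-processor local storage

    def load(self, cpu, addr):
        cache = self.caches[cpu]
        if addr not in cache:           # miss: copy the block from main memory
            cache[addr] = self.memory[addr]
        return cache[addr]

    def store(self, cpu, addr, value):
        # Write-back: only the local copy changes; neither main memory
        # nor the other caches are told about the new value.
        self.caches[cpu][addr] = value


s = IncoherentSystem(2, {"x": 0})
s.load(0, "x")
s.load(1, "x")                          # both caches now hold x = 0
s.store(0, "x", 1)                      # P0 updates only its own copy
print(s.load(0, "x"), s.load(1, "x"))   # 1 0  (P1 sees a stale value)
```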

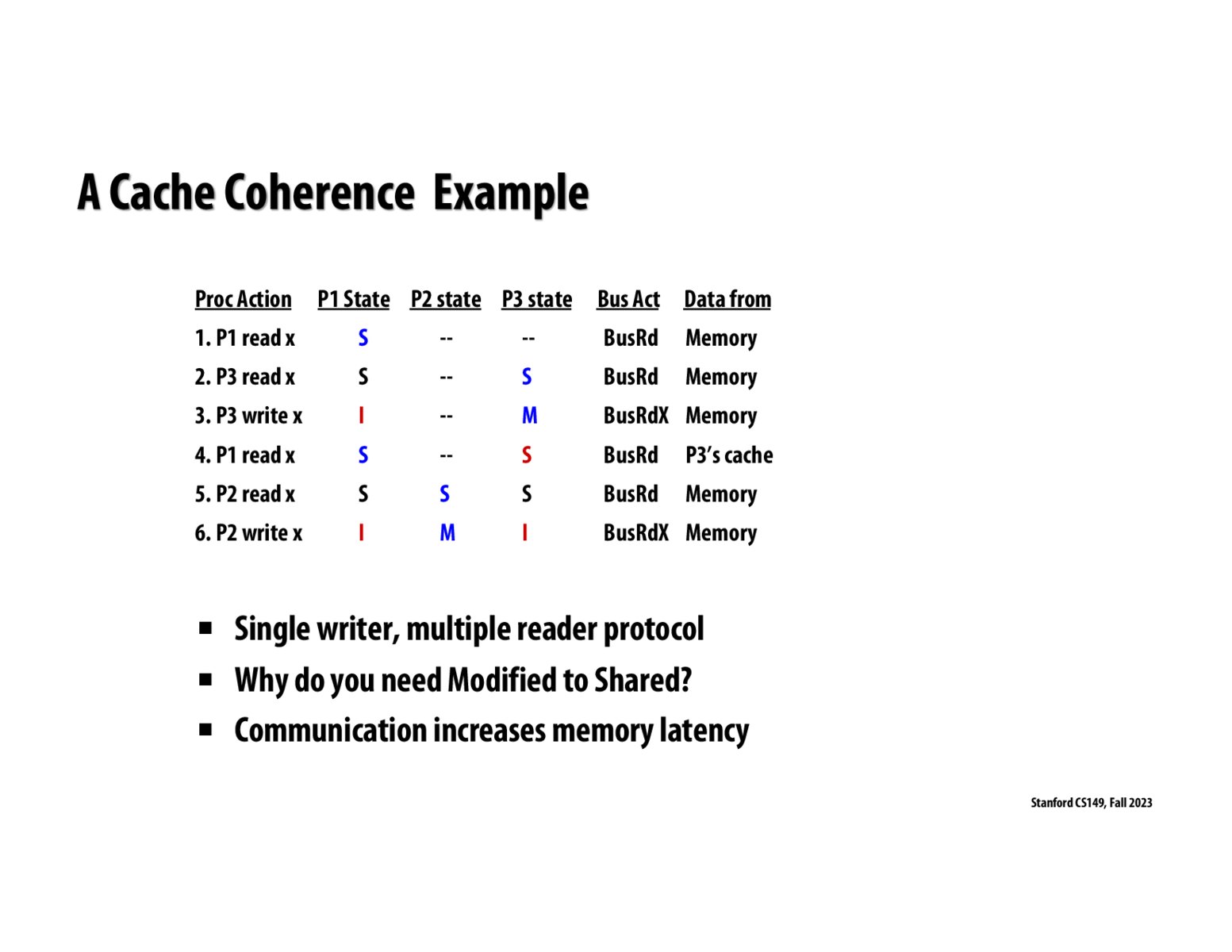

Image of slide 53: Can the programmer ensure coherence if the caches are invisible to software? A coarse-grained option is page-level coherence, which has overheads. A non-solution: make shared locks/data non-cacheable.

Cache coherence refers to a consistency mechanism in multiprocessor systems that ensures all processors access the same up-to-date copy of shared data stored in their local caches; it maintains data consistency across multiple cache memories by means of specific protocols. When processors spin on a lock by repeatedly executing an atomic swap, these protocols will cause the mutex to ping-pong between P1's and P2's caches. Ping-ponging can be reduced by first reading the mutex location (non-atomically) and executing a swap only if its value is found to be zero (test&test&set).
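The ping-pong effect can be seen in a toy simulation. This is a hypothetical sketch, not a real lock: it models the one cache line that holds the mutex under an assumed MSI-style write-invalidate protocol and counts the bus transactions generated by waiting processors while another core holds the lock. An atomic swap counts as a write (it needs the line in the M state), whereas the plain read used by test&test&set can be served from a shared (S) copy. `CacheLine` and `spin_traffic` are illustrative names.

```python
# Hypothetical toy model of ONE cache line (the mutex) under an assumed
# MSI-style write-invalidate protocol. Not a real lock implementation.

class CacheLine:
    def __init__(self, n_cores):
        self.state = ["I"] * n_cores  # per-core state: "M", "S", or "I"
        self.value = 0                # lock word: 0 = free, 1 = held
        self.bus_transactions = 0     # misses that needed a bus transaction

    def read(self, core):
        if self.state[core] == "I":
            self.bus_transactions += 1
            # A core holding the line in M supplies it and drops to S.
            for other, st in enumerate(self.state):
                if st == "M":
                    self.state[other] = "S"
            self.state[core] = "S"
        return self.value

    def swap(self, core, new):
        # An atomic swap is a write, so the core needs exclusive (M) ownership;
        # gaining it invalidates every other copy.
        if self.state[core] != "M":
            self.bus_transactions += 1
            for other in range(len(self.state)):
                if other != core:
                    self.state[other] = "I"
            self.state[core] = "M"
        old, self.value = self.value, new
        return old


def spin_traffic(use_ttas, n_spinners=2, spins=100):
    line = CacheLine(n_cores=n_spinners + 1)
    line.swap(0, 1)            # core 0 acquires the lock and keeps holding it
    line.bus_transactions = 0  # count only the traffic caused by the spinners
    for _ in range(spins):
        for core in range(1, n_spinners + 1):
            if use_ttas:
                # test&test&set: spin on a plain read; swap only if it looks free.
                if line.read(core) == 0:
                    line.swap(core, 1)
            else:
                # test&set: every attempt is an atomic swap (a write).
                line.swap(core, 1)
    return line.bus_transactions


print(spin_traffic(use_ttas=False))  # 200
print(spin_traffic(use_ttas=True))   # 2
```

With two spinners, test&set misses on every attempt because each swap takes the line away from the other spinner, while test&test&set misses once per spinner and then spins on its own shared copy. The model stops before the lock is released; on release, the holder's write invalidates all shared copies, so test&test&set still produces a burst of traffic at that point.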
