Built-In Data Quality Validations

Data Quality Monitoring Vs Technical Validations Key Differences And

Data validation refers to verifying the quality and accuracy of data before using it. These are the main types of data validation, the pros and cons of the process, and tips for how to perform data validation. This paper explores key data validation techniques, including range checks, type checks, code validation, uniqueness checks, and consistency checks. It also distinguishes between automated.
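The five techniques named above can be sketched in plain Python. This is a minimal illustration, not a method taken from the paper: the field names (`id`, `age`, `country`, `start_date`, `end_date`), the 0-120 age range, and the `ALLOWED_COUNTRY_CODES` set are all assumptions chosen for the example.

```python
from datetime import date

# Hypothetical set of allowed codes for the "country" field (an assumption).
ALLOWED_COUNTRY_CODES = {"US", "DE", "JP"}


def validate_record(record):
    """Return a list of human-readable problems found in one record."""
    problems = []

    # Type check: age must be an integer (bool is excluded because it subclasses int).
    age = record.get("age")
    if not isinstance(age, int) or isinstance(age, bool):
        problems.append("age: expected an integer")
    # Range check: only evaluated once the type check has passed.
    elif not 0 <= age <= 120:
        problems.append("age: outside the range 0-120")

    # Code validation: the value must come from a fixed list of codes.
    if record.get("country") not in ALLOWED_COUNTRY_CODES:
        problems.append("country: not an allowed code")

    # Consistency check: two fields must agree with each other.
    start, end = record.get("start_date"), record.get("end_date")
    if isinstance(start, date) and isinstance(end, date) and start > end:
        problems.append("dates: start_date is after end_date")

    return problems


def find_duplicate_ids(records):
    """Uniqueness check: return the ids that appear more than once, sorted."""
    seen, duplicates = set(), set()
    for record in records:
        record_id = record.get("id")
        if record_id in seen:
            duplicates.add(record_id)
        seen.add(record_id)
    return sorted(duplicates)
```

Returning a list of problems rather than raising on the first failure lets a pipeline report every issue in a record at once, which tends to be more useful when cleaning data in bulk.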

Data Validations Building Transparency Documentation

This guide will help you understand data validation and cleaning processes, best practices, and their value in building confidence in, and the usefulness of, your data. Master 10 essential data validation techniques to build reliable pipelines. Learn AI-powered solutions and implementation steps, and avoid common pitfalls. While understanding concepts like data quality frameworks and metrics is crucial, this guide is a hands-on manual focused on one thing: the specific, practical data quality checks you can implement today. Data architectures have many integration points for validating data. This guide shows the best place for data quality validation in them.

Increase Data Quality With Dimension Validations In The Field

Learn how to design a scalable data quality framework with automated validation, real-time monitoring, and governance to reduce errors and prevent downstream failures. Enterprises use data validation processes to help ensure that the quality of data is sufficient for use in data analytics and AI. In addition, data validation has become increasingly important for regulatory compliance. This article breaks down my battle-tested approach to data quality, covering everything from schema validation and anomaly detection to automated testing and monitoring. Poor data quality can lead to incorrect insights, disrupted business processes, and failed pipelines. The Unified Data Model (UDM) enforces robust validation rules to maintain high data quality, ensuring consistency across all assets.
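As a rough sketch of what schema validation and anomaly detection might look like in practice, the example below uses only the Python standard library. It is not the article's approach or the UDM's rule set: the `EXPECTED_SCHEMA` columns, the z-score method, and the threshold of 3.0 are assumptions made for illustration.

```python
from statistics import mean, pstdev

# Hypothetical schema: column name -> expected Python type (an assumption).
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "currency": str}


def schema_errors(row, schema=EXPECTED_SCHEMA):
    """Schema validation: report missing columns and wrongly typed values."""
    errors = []
    for column, expected_type in schema.items():
        if column not in row:
            errors.append(f"missing column: {column}")
        elif not isinstance(row[column], expected_type):
            errors.append(f"wrong type for {column}: {type(row[column]).__name__}")
    return errors


def zscore_anomalies(values, threshold=3.0):
    """Anomaly detection: indices of values whose z-score exceeds the threshold."""
    if len(values) < 2:
        return []
    mu = mean(values)
    sigma = pstdev(values)  # population standard deviation
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]
```

A z-score test is only one simple option and assumes roughly bell-shaped data; skewed metrics such as transaction amounts may call for a different rule, for example one based on percentiles.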


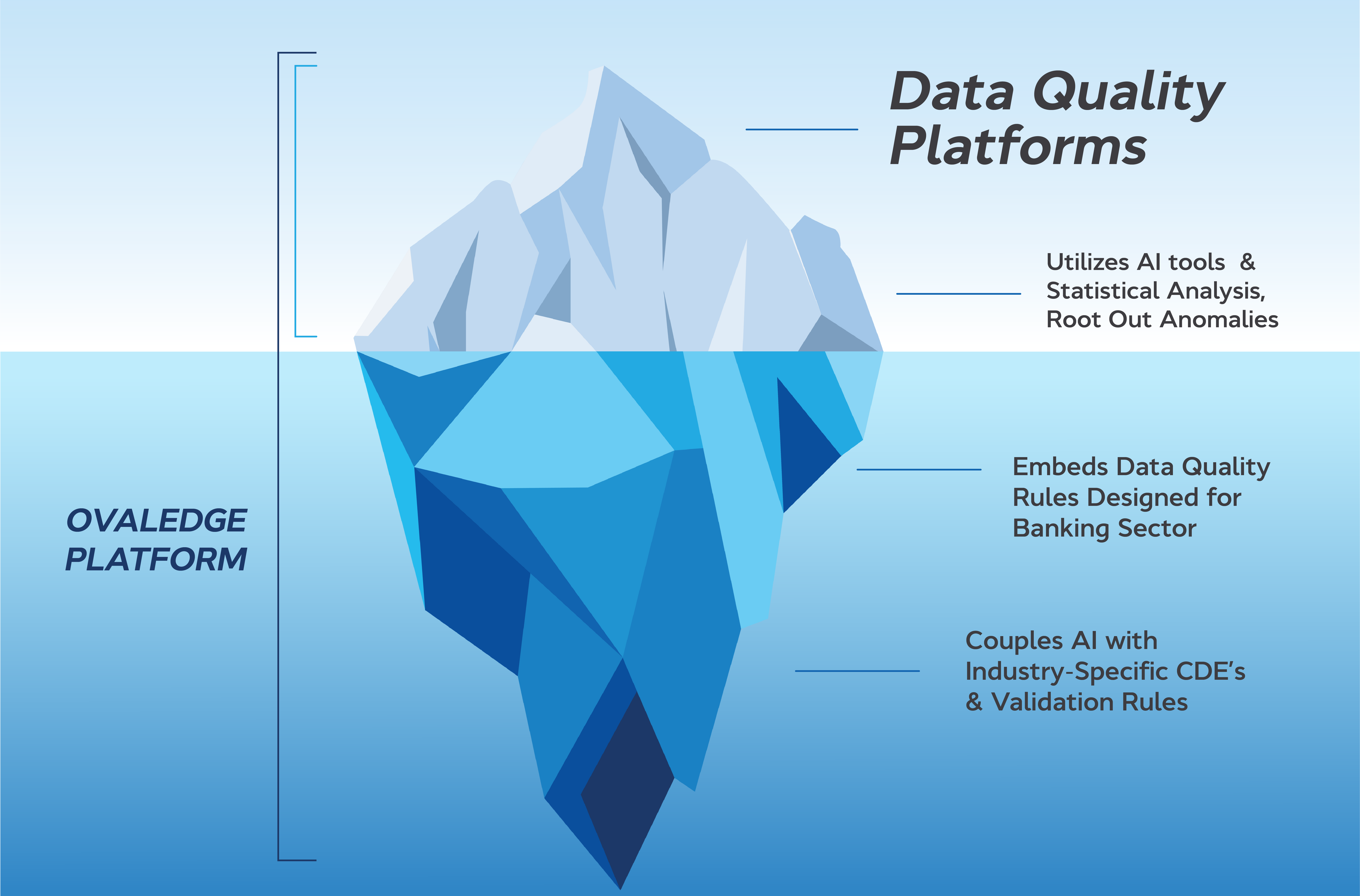

