Building Trustworthy AI: Introducing the NIST AI Risk Management Framework

Led by the Information Technology Laboratory (ITL) AI program, and in collaboration with the private and public sectors, NIST has developed a framework to better manage risks to individuals, organizations, and society associated with artificial intelligence (AI).

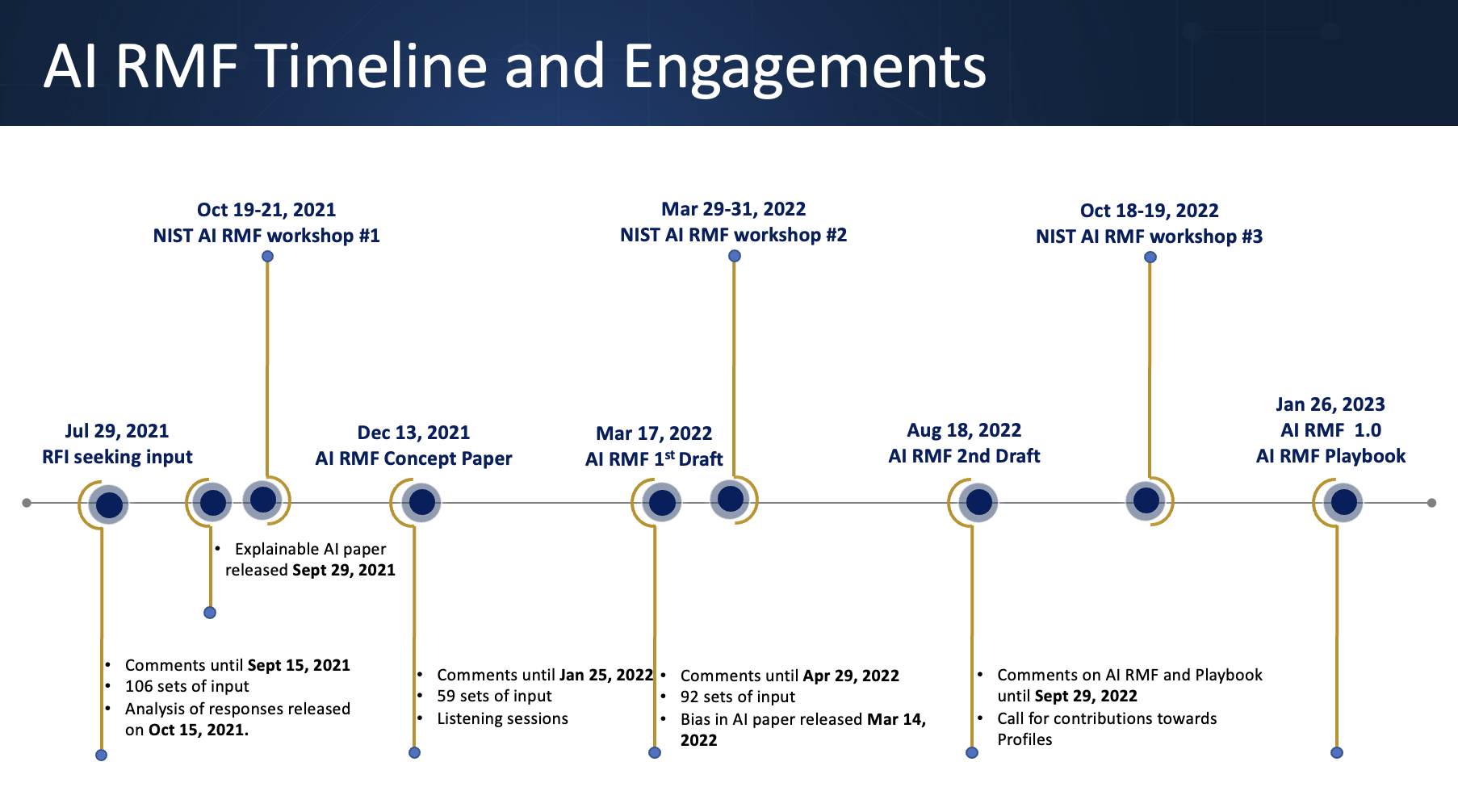

Building Trustworthy AI: The NIST Framework for Risk Management

To help organizations manage these risks, the U.S. National Institute of Standards and Technology (NIST) developed the AI Risk Management Framework (AI RMF), first released in 2023. Implementing the NIST AI Risk Management Framework is not just about compliance; it is about building sustainable, trustworthy AI capabilities that drive business value while effectively managing risks.

Learn how to build trustworthy AI systems using the NIST AI Risk Management Framework and cybernetic loops to ensure safety, fairness, and reliability, and how to adopt the framework and streamline compliance with automation tools.

NIST AI Risk Management Framework

The AI RMF Core provides outcomes and actions that enable dialogue, understanding, and activities to manage AI risks and responsibly develop trustworthy AI systems.

The NIST AI RMF is built on foundational principles that guide organizations toward developing trustworthy AI systems. Understanding these principles helps teams make better decisions throughout the AI lifecycle and ensures alignment with the framework's broader objectives.

The decision to commission or deploy an AI system should be based on a contextual assessment of trustworthiness characteristics and the relative risks, impacts, costs, and benefits, and informed by a broad set of interested parties.

Explore the NIST AI Risk Management Framework 1.0 and how businesses can govern AI use, reduce risk, and support safer, more reliable adoption with Vistrada.
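The contextual assessment described above can be recorded in a small data structure. The following is a minimal sketch, not something the framework prescribes: the characteristic names follow the seven trustworthiness characteristics listed in AI RMF 1.0, while the `DeploymentAssessment` class, the 0-5 rating scale, the minimum score of 3, and the requirement of at least two consulted groups are all hypothetical choices made for illustration.

```python
from dataclasses import dataclass, field

# Trustworthiness characteristics as named in NIST AI RMF 1.0.
CHARACTERISTICS = (
    "valid_and_reliable",
    "safe",
    "secure_and_resilient",
    "accountable_and_transparent",
    "explainable_and_interpretable",
    "privacy_enhanced",
    "fair_with_harmful_bias_managed",
)


@dataclass
class DeploymentAssessment:
    """Hypothetical record of a pre-deployment review for one AI system."""

    system_name: str
    context: str  # where and how the system will be used
    # Assumed 0-5 rating per characteristic; the scale is not from NIST.
    ratings: dict[str, int] = field(default_factory=dict)
    # Interested parties consulted during the review.
    parties_consulted: list[str] = field(default_factory=list)

    def gaps(self, minimum: int = 3) -> list[str]:
        """Return characteristics that are unrated or rated below `minimum`."""
        return [c for c in CHARACTERISTICS if self.ratings.get(c, 0) < minimum]

    def ready_for_review(self, minimum: int = 3, min_parties: int = 2) -> bool:
        """True when no gaps remain and enough parties were consulted.

        Both thresholds are illustrative assumptions.
        """
        return not self.gaps(minimum) and len(self.parties_consulted) >= min_parties


# Example: one characteristic is rated below the assumed minimum.
ratings = {name: 4 for name in CHARACTERISTICS}
ratings["explainable_and_interpretable"] = 2

assessment = DeploymentAssessment(
    system_name="resume-screening-model",
    context="shortlisting job applicants",
    ratings=ratings,
    parties_consulted=["HR", "legal", "applicant advocates"],
)

print(assessment.gaps())
print(assessment.ready_for_review())
```

A record like this does not make the deployment decision. It only shows which characteristics still need attention and whether a range of interested parties has been heard, so that risks, impacts, costs, and benefits can then be weighed in context.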

