Building LLM Apps With Memory

The key idea:

👉 Memory is external to the LLM.
👉 The LLM becomes a reasoning engine, not a storage engine.

In this article, we will build a simple memory system from scratch, inspired by the popular mem0 architecture. Unless otherwise mentioned, all illustrations embedded here were created by me, the author.
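To make the "external memory" idea concrete, here is a minimal sketch in Python. It is not the mem0 implementation: `MemoryStore`, `answer`, and `echo_llm` are hypothetical names invented for illustration, and the keyword-overlap search is a deliberately naive stand-in for real retrieval. The point is only that facts live in the store, and the model receives them through the prompt.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class MemoryStore:
    """Hypothetical external store: facts live here, not inside the model."""
    facts: List[str] = field(default_factory=list)

    def add(self, fact: str) -> None:
        if fact not in self.facts:  # skip exact duplicates
            self.facts.append(fact)

    def search(self, query: str, k: int = 3) -> List[str]:
        # Naive keyword overlap (assumption); real systems usually use embeddings.
        words = set(query.lower().split())
        scored = [(len(words & set(f.lower().split())), f) for f in self.facts]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [f for _, f in scored[:k]]


def answer(question: str, store: MemoryStore, llm: Callable[[str], str]) -> str:
    """Retrieve relevant facts, put them in the prompt, let the model reason."""
    memories = store.search(question)
    prompt = "Known facts:\n" + "\n".join(f"- {m}" for m in memories)
    prompt += f"\n\nQuestion: {question}"
    return llm(prompt)


def echo_llm(prompt: str) -> str:
    """Placeholder for a real model call; it just returns the prompt it was given."""
    return prompt


store = MemoryStore()
store.add("The user prefers Python")
store.add("The user lives in Lisbon")
print(answer("Where is Lisbon", store, echo_llm))
```

Swapping `echo_llm` for a real model client leaves the store untouched, which is the separation between storage and reasoning described above.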

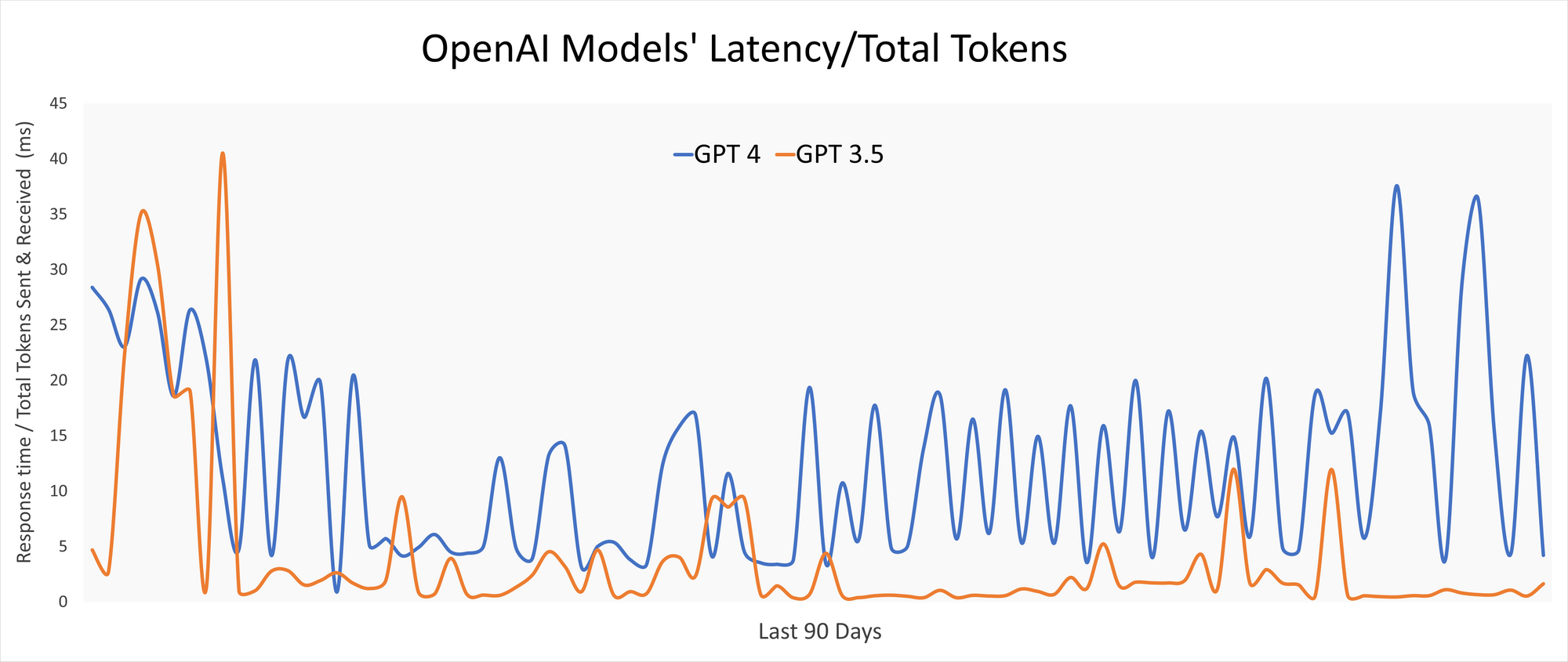

We can't just stuff an infinite amount of history into the model: context windows are finite, so we need dedicated memory systems. This article explains how large language model (LLM) memory works at a technical level. It breaks down internal vs. external memory, short-term vs. long-term memory, and how modern applications implement memory using context windows, embeddings, vector search, and retrieval (RAG) pipelines.

Choosing the right storage strategy is crucial for building efficient and scalable LLM applications with memory. Consider the pros and cons of each approach and select the one that best suits your needs.
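As a rough illustration of the embeddings-plus-vector-search step, the sketch below ranks stored memories by cosine similarity to a query. The `embed` function is a toy assumption (word counts as a sparse vector) standing in for a real embedding model, and `VectorIndex` is a hypothetical in-memory index, not any particular vector database.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

Vector = Dict[str, float]


def embed(text: str) -> Vector:
    """Toy stand-in for an embedding model: word counts as a sparse vector."""
    return dict(Counter(re.findall(r"[a-z0-9]+", text.lower())))


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two sparse vectors (0.0 if either is empty)."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class VectorIndex:
    """Hypothetical in-memory index: stores (text, vector) pairs, brute-force search."""

    def __init__(self) -> None:
        self._items: List[Tuple[str, Vector]] = []

    def add(self, text: str) -> None:
        self._items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> List[str]:
        q = embed(query)
        ranked = sorted(self._items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


index = VectorIndex()
index.add("User's favourite editor is Vim")
index.add("The project deadline is Friday")
index.add("User is allergic to peanuts")
print(index.search("When is the project deadline?", k=1))
```

In a retrieval pipeline, the texts returned by `search` would be placed into the prompt before the user's question. A production system would replace `embed` with a learned embedding model and the brute-force scan with an approximate nearest-neighbour index.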

This article discusses how to implement memory in LLM applications using the LangChain framework in Python. Starting with the ChatGPT memory feature and ending with a custom-built app with memory management, we'll explore everything from context windows and token limits to assistant thread management and user-centered AI memory design.

This track will guide you through Google AI Studio's new "Build apps with Gemini" feature, where you can turn a simple text prompt into a fully functional, deployed web application in minutes.

Sending the full history is the classic, DIY way to give your LLM context: you collect previous messages in the frontend or session and send the entire history back to the model with each new input.
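The history-resend approach can be sketched as follows. `ChatSession` is a hypothetical class, the `llm` callable is a placeholder for a real chat API, and the `max_words` budget is an assumed, crude proxy for a token limit (real token counts depend on the model's tokenizer). Every call sends the accumulated messages again and drops the oldest ones once the budget is exceeded.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": "user" | "assistant", "content": "..."}


class ChatSession:
    """Hypothetical session that resends its message history on every turn."""

    def __init__(self, llm: Callable[[List[Message]], str], max_words: int = 200) -> None:
        self.llm = llm              # placeholder for a real chat-model call
        self.max_words = max_words  # assumed budget; word count approximates tokens
        self.history: List[Message] = []

    def _trimmed(self) -> List[Message]:
        """Keep the newest messages that fit the budget, in original order."""
        kept: List[Message] = []
        budget = self.max_words
        for msg in reversed(self.history):
            cost = len(msg["content"].split())
            if cost > budget and kept:  # always keep at least the newest message
                break
            kept.append(msg)
            budget -= cost
        return list(reversed(kept))

    def send(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        reply = self.llm(self._trimmed())  # the model sees the history each turn
        self.history.append({"role": "assistant", "content": reply})
        return reply


def fake_llm(messages: List[Message]) -> str:
    """Stand-in model: reports how many messages it was shown."""
    return f"seen {len(messages)}"


session = ChatSession(fake_llm, max_words=1000)
print(session.send("hi"))
print(session.send("again"))
```

The cost of this design grows with every turn, which is why longer conversations tend to move older material into an external store instead of resending it.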
