Building a RAG Using OpenSearch as VectorDB and AWS Bedrock, by Kvs

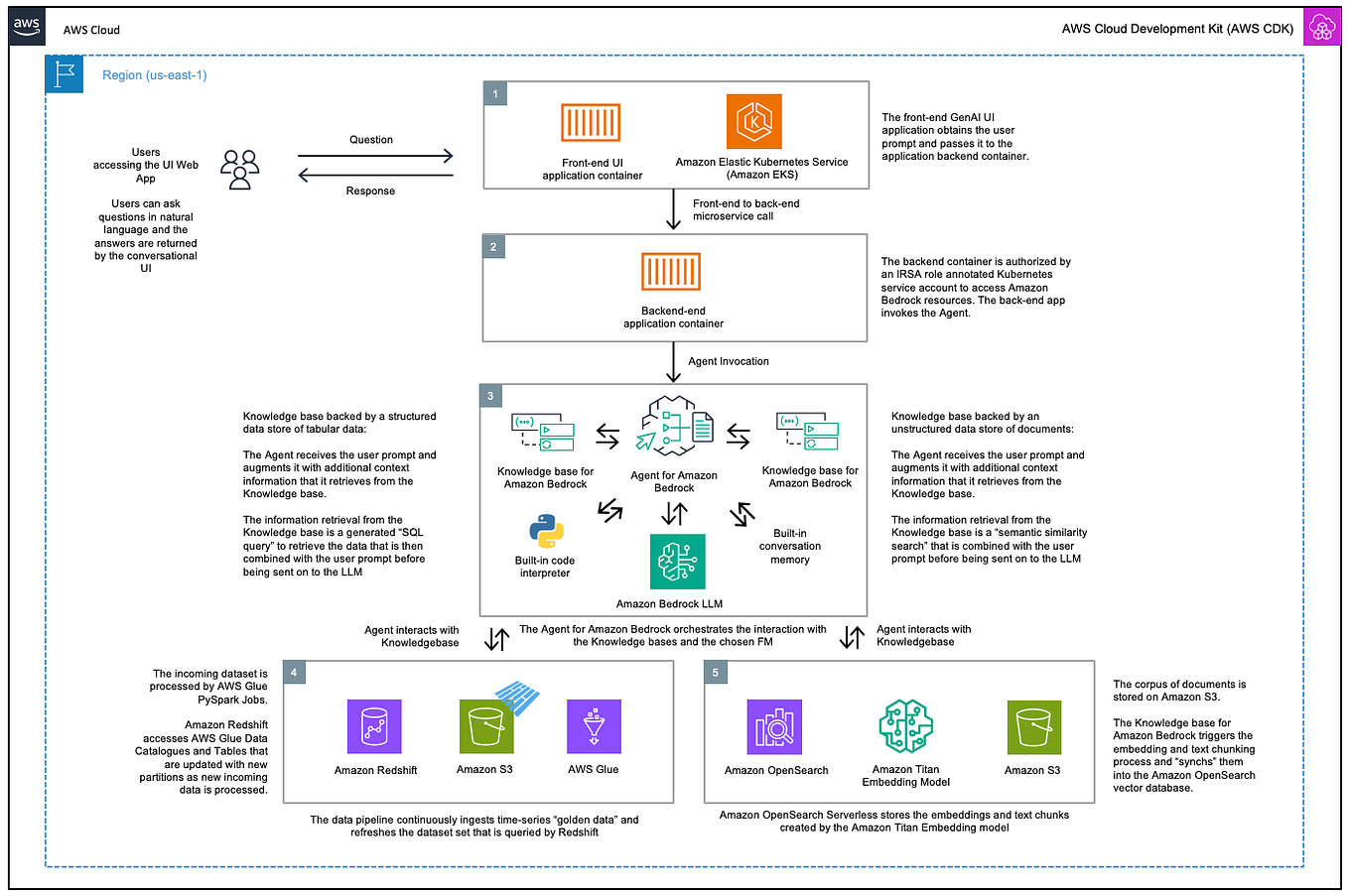

This article demonstrates how to use OpenSearch as a vector database, together with AWS Bedrock embeddings, to build a retrieval-augmented question-answering application. Read on to learn how to build your own RAG solution using an OpenSearch Serverless vector database and Amazon Bedrock.
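As a rough illustration of the embedding step, the sketch below asks a Bedrock runtime client for the embedding of a piece of text. It is not the article's own code. The model ID `amazon.titan-embed-text-v1` is an assumption (use whichever embedding model is enabled in your account), and `embed_text` is a hypothetical helper name. The client is passed in as a parameter so the function can be exercised without AWS credentials.

```python
import json

# Assumption: Titan text embeddings; replace with the embedding model you use.
TITAN_EMBED_MODEL_ID = "amazon.titan-embed-text-v1"


def embed_text(bedrock_runtime, text, model_id=TITAN_EMBED_MODEL_ID):
    """Return the embedding vector for `text`.

    `bedrock_runtime` is expected to behave like boto3.client("bedrock-runtime"):
    invoke_model() returns a dict whose "body" is a readable byte stream.
    """
    response = bedrock_runtime.invoke_model(
        modelId=model_id,
        body=json.dumps({"inputText": text}),  # Titan embedding request shape
        contentType="application/json",
        accept="application/json",
    )
    payload = json.loads(response["body"].read())
    return payload["embedding"]
```

With real credentials, the client would typically be created with `boto3.client("bedrock-runtime")` and passed as the first argument.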

Large language models are impressive, but they hallucinate. Retrieval-augmented generation (RAG) reduces this by grounding answers in documents retrieved at query time. Here we build a RAG application using Amazon Bedrock for LLM inference and OpenSearch Serverless for vector search and document retrieval. AWS also offers Kendra and Bedrock Knowledge Bases; however, the purpose of this sample is to show how to set up an open source vector database, and since Kendra and Bedrock Knowledge Bases are not open source, this sample focuses on OpenSearch. The Python implementation targets a production-grade RAG pipeline that uses AWS Bedrock for embeddings and generation, with OpenSearch Serverless as the vector store. We will walk through how to split the content into chunks, store them as vector embeddings in OpenSearch, and perform a search based on user queries to answer questions.
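The chunk-and-store steps described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the article's implementation: the helper names (`chunk_text`, `knn_index_body`, `to_index_docs`), the field names `text` and `embedding`, the character-based splitting, and the dimension of 1536 (the output size of Titan text embeddings v1) are all choices made here for the example.

```python
def chunk_text(text, chunk_size=500, overlap=50):
    """Split text into character windows of `chunk_size` that overlap by `overlap`."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks


def knn_index_body(dimension=1536, vector_field="embedding"):
    """Index definition with a k-NN vector field plus the original chunk text."""
    return {
        "settings": {"index": {"knn": True}},
        "mappings": {
            "properties": {
                vector_field: {
                    "type": "knn_vector",
                    "dimension": dimension,  # must match the embedding model
                    "method": {"name": "hnsw", "engine": "faiss", "space_type": "l2"},
                },
                "text": {"type": "text"},
            }
        },
    }


def to_index_docs(chunks, embed):
    """Pair each chunk with its vector; `embed` is any callable str -> list[float]."""
    return [{"text": chunk, "embedding": embed(chunk)} for chunk in chunks]
```

With an `opensearch-py` client, the index would typically be created with `client.indices.create(index=name, body=knn_index_body())` and each document stored with `client.index(index=name, body=doc)`. A small overlap between chunks helps keep sentences that straddle a boundary retrievable from either side.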

After the embeddings have been loaded into OpenSearch, we start querying the LLM using LangChain. For each question, we retrieve the most similar embeddings and use the matching text to build a more accurate prompt. In this post, we show how to implement a generative AI agentic assistant that uses both semantic and text-based search using Amazon Bedrock, Amazon Bedrock AgentCore, Strands Agents, and Amazon OpenSearch. In this sample we use OpenSearch Serverless to build a vector store and then use it in a RAG application with LangChain. The vector search collection type in OpenSearch Serverless provides a similarity search capability that is scalable and high performing.
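To make the retrieval step concrete, here is a plain-Python sketch of what happens around the similarity search: build a k-NN query from the question's embedding, pull the chunk text out of the search response, and assemble a grounded prompt. It deliberately does not use LangChain, which the sample relies on for this step; the helper names, the `text` and `embedding` field names, and the prompt wording are assumptions made for illustration.

```python
def knn_query_body(query_vector, k=3, vector_field="embedding"):
    """OpenSearch k-NN query returning the k chunks nearest to `query_vector`."""
    return {
        "size": k,
        "_source": ["text"],  # only the chunk text is needed for the prompt
        "query": {"knn": {vector_field: {"vector": list(query_vector), "k": k}}},
    }


def hits_to_texts(search_response):
    """Extract the stored chunk text from an OpenSearch search response."""
    return [hit["_source"]["text"] for hit in search_response["hits"]["hits"]]


def build_prompt(question, contexts):
    """Combine the retrieved chunks and the user question into one prompt."""
    context_block = "\n\n".join(
        f"[{i}] {chunk}" for i, chunk in enumerate(contexts, start=1)
    )
    return (
        "Answer the question using only the context below. "
        "If the context is insufficient, say you do not know.\n\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

With an `opensearch-py` client, the query would typically be run as `client.search(index=name, body=knn_query_body(vector))`, and the resulting prompt sent to the Bedrock text model of your choice. Instructing the model to answer only from the supplied context is what ties the generation back to the retrieved documents.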

