Box2D Benchmarks: Learning Curves of TRPO on OpenAI Gym Box2D

Box2D benchmarks: learning curves of TRPO on OpenAI Gym Box2D locomotion tasks. Each curve is averaged over 5 random seeds and shows the mean ± standard deviation. These environments are all toy games built around physics control, using Box2D-based physics and pygame-based rendering. They were contributed in the early days of OpenAI Gym by Oleg Klimov and have been popular toy benchmarks ever since.
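The mean ± std band described in the caption can be computed from per-seed evaluation returns roughly as follows. This is a minimal sketch under two assumptions. First, each seed's curve is a list of returns recorded at the same checkpoints; this data layout is hypothetical. Second, the band uses the population standard deviation, because the caption does not say which variant was used. The returns below are made-up numbers for illustration only.

```python
import statistics


def aggregate_learning_curves(curves):
    """Average per-seed learning curves point by point.

    curves: list of equal-length lists, one per random seed, each holding the
    evaluation return at successive checkpoints (hypothetical format).
    Returns (mean, std) lists; std is the population standard deviation.
    """
    if not curves:
        raise ValueError("need at least one curve")
    length = len(curves[0])
    if any(len(c) != length for c in curves):
        raise ValueError("all curves must have the same number of points")
    means, stds = [], []
    # zip(*curves) yields the returns of every seed at one checkpoint.
    for step_values in zip(*curves):
        means.append(statistics.fmean(step_values))
        stds.append(statistics.pstdev(step_values))
    return means, stds


# Five made-up seeds with three checkpoints each (illustrative numbers only).
seed_curves = [
    [-120.0, 10.0, 150.0],
    [-110.0, 20.0, 160.0],
    [-130.0, 0.0, 140.0],
    [-100.0, 30.0, 170.0],
    [-140.0, -10.0, 130.0],
]
mean, std = aggregate_learning_curves(seed_curves)
# Lower and upper edges of the shaded mean ± std band.
lower = [m - s for m, s in zip(mean, std)]
upper = [m + s for m, s in zip(mean, std)]
```

Plotting `mean` as the line and filling between `lower` and `upper` gives the shaded-band style of figure shown above.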

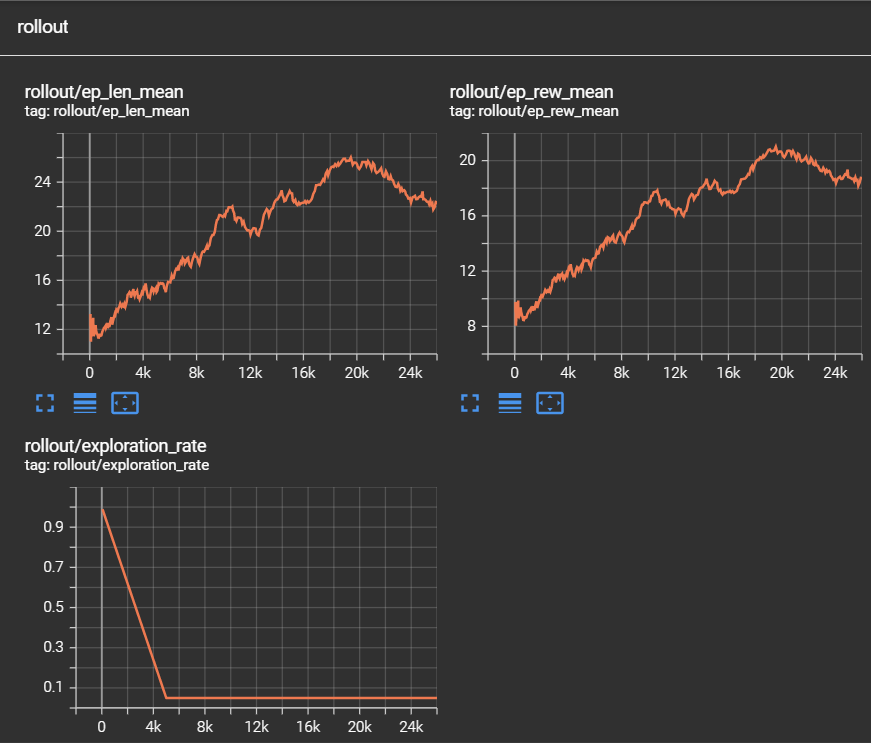

GitHub: OpenAI Gym, a Toolkit for Developing and Comparing Reinforcement Learning Algorithms

A typical workflow builds a reinforcement learning agent on top of an existing OpenAI Gym (Gymnasium) environment, in this case a Box2D one. It introduces specific changes and customizations to the environment, then trains the agent with the Stable Baselines library.

The control tasks domain uses the CARL (Context-Adaptive Reinforcement Learning) framework to benchmark model-based transfer learning (MBTL). The training and transfer pipeline evaluates how agents trained on specific source contexts (e.g., a particular gravity or mass) generalize to target contexts. This is achieved through a standardized interface involving train.py for …

The Spinning Up implementations of VPG, TRPO, and PPO are overall somewhat weaker than the best reported results for these algorithms. This is because those implementations omit some standard tricks, such as observation normalization and normalized value regression targets.
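Observation normalization, one of the standard tricks mentioned above, is commonly implemented with running per-dimension statistics. The sketch below is an illustration under assumptions. The class name `RunningMeanStd`, the clipping bound of 10, and the `epsilon` default are hypothetical choices, not code from Spinning Up or Stable Baselines. It uses Welford's online algorithm so the mean and variance stay numerically stable as observations stream in.

```python
import math


class RunningMeanStd:
    """Track per-dimension running mean and variance of observations.

    Minimal sketch using Welford's online algorithm; the epsilon and clip
    defaults are illustrative assumptions.
    """

    def __init__(self, dim, epsilon=1e-8, clip=10.0):
        self.count = 0
        self.mean = [0.0] * dim
        self.m2 = [0.0] * dim  # running sum of squared deviations from the mean
        self.epsilon = epsilon
        self.clip = clip

    def update(self, obs):
        """Fold one observation vector into the running statistics."""
        self.count += 1
        for i, x in enumerate(obs):
            delta = x - self.mean[i]
            self.mean[i] += delta / self.count
            self.m2[i] += delta * (x - self.mean[i])

    def variance(self):
        """Population variance per dimension; 1.0 until two samples exist."""
        if self.count < 2:
            return [1.0] * len(self.mean)
        return [m / self.count for m in self.m2]

    def normalize(self, obs):
        """Standardize an observation and clip each entry to [-clip, clip]."""
        out = []
        for x, mu, var in zip(obs, self.mean, self.variance()):
            z = (x - mu) / math.sqrt(var + self.epsilon)
            out.append(max(-self.clip, min(self.clip, z)))
        return out


rms = RunningMeanStd(dim=2)
for obs in ([1.0, 10.0], [3.0, 20.0], [5.0, 30.0]):
    rms.update(obs)
normalized = rms.normalize([3.0, 20.0])  # the running mean maps to [0.0, 0.0]
```

The same running statistics could, as an assumption, also be applied to scalar value regression targets, which is the other trick the passage names. In both cases the statistics are updated from collected data and then used to rescale inputs or targets before training.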

OpenAI Gym

Continuous control benchmarks are simulated tasks that test reinforcement learning algorithms in continuous state and action spaces. They are typically built on physics engines such as Box2D, MuJoCo, and PyBullet.

The AMD Ryzen 9 7950X is locked at 4.42 GHz, and threads are given affinity to the first CCD. The M2 is allowed to boost, so it appears faster than the AMD CPU. In practice, the AMD chip is slightly faster.

In this blog post, we explore the fundamental concepts of generalized advantage estimation (GAE) with TRPO using PyTorch and Gym. We cover the basic ideas behind these techniques, how to use them in practice, common implementation practices, and best practices for achieving the best results.
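The GAE idea mentioned above reduces to a short backward recursion over a rollout. First, compute the TD residual $\delta_t = r_t + \gamma V(s_{t+1}) - V(s_t)$. Then accumulate $A_t = \delta_t + \gamma\lambda A_{t+1}$, cutting both the bootstrap and the recursion at episode boundaries. The sketch below uses plain Python lists rather than PyTorch tensors. The function name, argument layout, and the defaults `gamma=0.99` and `lam=0.95` are illustrative assumptions, not taken from the post being described.

```python
def compute_gae(rewards, values, dones, last_value, gamma=0.99, lam=0.95):
    """Generalized advantage estimation over one rollout (hypothetical helper).

    rewards[t], dones[t]: reward and episode-termination flag after step t.
    values[t]: critic estimate V(s_t).
    last_value: V of the state after the final step (ignored if that step
    ended the episode).
    Returns (advantages, returns); returns = advantages + values and can serve
    as value-function regression targets.
    """
    n = len(rewards)
    if not (len(values) == len(dones) == n):
        raise ValueError("rewards, values and dones must have equal length")
    advantages = [0.0] * n
    gae = 0.0
    next_value = last_value
    # Walk backwards so each step can reuse the advantage of the step after it.
    for t in reversed(range(n)):
        not_done = 0.0 if dones[t] else 1.0
        delta = rewards[t] + gamma * next_value * not_done - values[t]
        gae = delta + gamma * lam * not_done * gae
        advantages[t] = gae
        next_value = values[t]
    returns = [a + v for a, v in zip(advantages, values)]
    return advantages, returns


# With gamma = lam = 1 and zero values, each advantage is the remaining reward
# in the episode; last_value is ignored because the final step terminates.
adv, ret = compute_gae(
    rewards=[1.0, 1.0, 1.0],
    values=[0.0, 0.0, 0.0],
    dones=[False, False, True],
    last_value=5.0,
    gamma=1.0,
    lam=1.0,
)
# adv == [3.0, 2.0, 1.0]
```

In a TRPO update, these advantages would typically weight the surrogate objective, and `returns` would serve as targets for fitting the value function. Setting `lam` closer to 0 lowers variance at the cost of more bias from the critic.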
