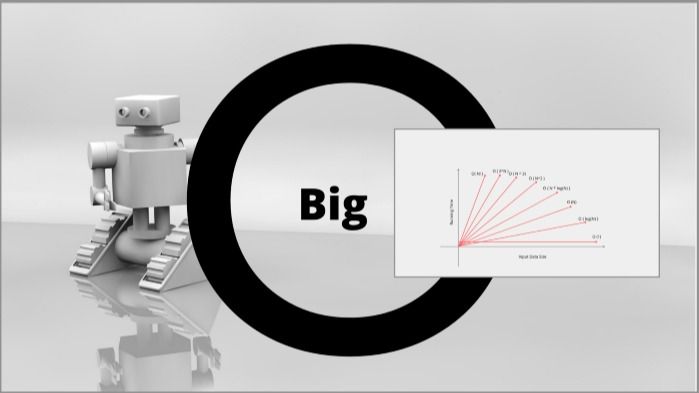

Big O Notation Explained In 60 Seconds

Big O Notation Explained For Beginners (Samuel Martins, Tealfeed)

6. O(n²): quadratic. Nested loops over the input. Bubble sort repeatedly compares adjacent pairs, roughly n²/2 comparisons in total, which is fine for small data and terrible for large data.
7. O(2ⁿ): exponential. The work doubles with each new element.

Big O is a way to express an upper bound on an algorithm's time or space complexity. It describes the asymptotic behavior of a function (its order of growth in time or space in terms of input size), not its exact value. It can be used to compare the efficiency of different algorithms or data structures.
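To make the quadratic and exponential cases above concrete, here is a minimal sketch that counts the work done rather than timing it. The function names `bubble_sort_comparisons` and `count_subsets` are illustrative, not taken from the original article, and the subset counter is just one simple example of a computation that doubles with each added element.

```python
def bubble_sort_comparisons(items):
    """Bubble-sort a copy of items; return (sorted_list, number_of_comparisons)."""
    data = list(items)
    comparisons = 0
    n = len(data)
    for i in range(n):
        # After pass i, the last i elements are already in their final place.
        for j in range(n - 1 - i):
            comparisons += 1
            if data[j] > data[j + 1]:
                data[j], data[j + 1] = data[j + 1], data[j]
    return data, comparisons


def count_subsets(n):
    """Count subsets of n elements by branching on include/exclude.

    Each extra element doubles the number of branches, so the call
    tree has 2**n leaves.
    """
    if n == 0:
        return 1
    return count_subsets(n - 1) + count_subsets(n - 1)


if __name__ == "__main__":
    for size in (10, 20, 40):
        _, comparisons = bubble_sort_comparisons(range(size, 0, -1))
        print(f"bubble sort, n={size}: {comparisons} comparisons")
    for size in (10, 11, 12):
        print(f"subsets, n={size}: {count_subsets(size)}")
```

The comparison count here is n(n-1)/2, so doubling the input roughly quadruples the work, while adding a single element to the subset problem doubles it.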

Big O Notation Explained

Big O notation is a mathematical notation that describes the approximate size of a function on a domain. Big O is a member of a family of notations invented by German mathematicians Paul Bachmann [1] and Edmund Landau [2] and expanded by others, collectively called Bachmann–Landau notation. Instead of measuring actual time or memory usage, Big O provides a high-level view of efficiency. To make this abstract idea easier to grasp, let's break it down using real-life examples. Learn Big O notation with visual examples and simple explanations. This beginner-friendly guide breaks down time and space complexity, including O(1), O(n), O(log n), and more. In this article, I'll explain what Big O notation is and give you a list of the most common running times for algorithms using it. Algorithm running times grow at different rates.
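As a rough illustration of how O(n) and O(log n) running times grow at different rates, the sketch below counts loop iterations for a linear search and a binary search over the same sorted list. The helper names are hypothetical and the step counters exist only for demonstration. A real program would more likely use the standard `bisect` module for binary search.

```python
def linear_search_steps(sorted_items, target):
    """Scan left to right; return how many elements were inspected. O(n)."""
    steps = 0
    for value in sorted_items:
        steps += 1
        if value == target:
            break
    return steps


def binary_search_steps(sorted_items, target):
    """Halve the search range each iteration; return iterations used. O(log n)."""
    steps = 0
    lo, hi = 0, len(sorted_items) - 1
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_items[mid] == target:
            break
        if sorted_items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps


if __name__ == "__main__":
    data = list(range(1024))
    target = data[-1]  # worst case for the linear scan
    print("linear:", linear_search_steps(data, target))
    print("binary:", binary_search_steps(data, target))
```

For 1,024 elements the linear scan inspects every element in the worst case, while the binary search needs at most 11 iterations, because each iteration discards about half of the remaining range.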

Big O Notation Explained With Examples

Big O notation can be explained through derivation, not memorization. Learn to calculate time and space complexity from unfamiliar code. Big O notation describes the relationship between the input size (n) of an algorithm and its computational complexity, or how many operations it will take to run as n grows larger. It is most often used to describe the worst-case scenario, and it uses mathematical formalization to classify algorithms by speed. Understand Big O notation and time complexity through real-world examples, visual guides, and code walkthroughs. Learn how algorithm efficiency impacts performance and how to write scalable code that stands up under pressure. In just 60 seconds, learn what really makes an algorithm *fast*, and why it's not just about having a faster computer. This mini lecture introduces **big o n.
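One way to see the relationship between input size and operation count is to tabulate idealized counts for a few common complexity classes. This is only a sketch: the function `operation_counts` is a made-up helper, and the numbers ignore the constant factors and lower-order terms that real code always has.

```python
import math


def operation_counts(n):
    """Return idealized operation counts for input size n (n >= 1)."""
    return {
        "O(1)": 1,
        "O(log n)": math.ceil(math.log2(n)),
        "O(n)": n,
        "O(n^2)": n ** 2,
        "O(2^n)": 2 ** n,
    }


if __name__ == "__main__":
    for n in (8, 16, 32):
        print(n, operation_counts(n))
```

Going from n = 16 to n = 32 adds one step to the logarithmic row, doubles the linear row, quadruples the quadratic row, and multiplies the exponential row by 65,536. That is why the growth rate matters more than the speed of the machine running the code.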
