Balanced Accuracy

This tutorial explains balanced accuracy, including a formal definition and an example, and shows how to compute it for binary and multiclass classification problems with imbalanced datasets. Balanced accuracy is the average of the recall obtained on each class, and it can be adjusted for chance.
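As a minimal sketch of that definition, the snippet below averages per-class recall in plain Python. The function name `balanced_accuracy`, the `adjusted` flag, and the labels are hypothetical and chosen for illustration. The chance adjustment shown assumes one common convention, rescaling so that a score of 1 / (number of classes) maps to 0 and a perfect score stays at 1; other conventions may exist.

```python
from collections import Counter

def balanced_accuracy(y_true, y_pred, adjusted=False):
    """Average per-class recall; with adjusted=True, rescale so chance maps to 0."""
    support = Counter(y_true)  # number of examples in each true class
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)  # correct predictions per class
    recalls = [hits[c] / support[c] for c in support]
    score = sum(recalls) / len(recalls)
    if adjusted:
        # Assumed convention: chance level is 1 / number_of_classes (needs >= 2 classes).
        chance = 1 / len(recalls)
        score = (score - chance) / (1 - chance)
    return score

# Hypothetical labels: class "a" dominates, "b" and "c" are rare.
y_true = ["a", "a", "a", "a", "b", "b", "c", "c"]
y_pred = ["a", "a", "a", "b", "b", "b", "c", "a"]

plain_score = balanced_accuracy(y_true, y_pred)
adjusted_score = balanced_accuracy(y_true, y_pred, adjusted=True)
print(plain_score, adjusted_score)
```

Here the per-class recalls are 3/4, 2/2, and 1/2, so the unadjusted score is their mean, 0.75, and the adjusted score is about 0.625.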

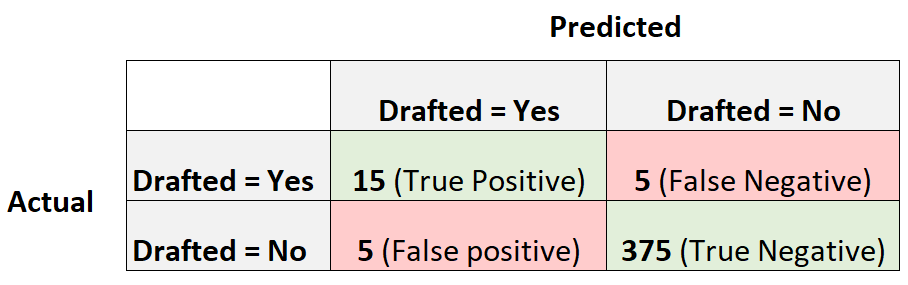

Balanced accuracy is a metric for evaluating classification models that gives equal weight to each class, regardless of how many examples belong to it. It is calculated as the average recall (or detection rate) across all classes. In the binary case this is the arithmetic mean of sensitivity (true positive rate) and specificity (true negative rate), so the minority and majority classes are equally important during evaluation. Balanced accuracy can serve as an overall performance metric for a model whether or not the true labels are imbalanced, assuming the cost of a false negative (FN) is the same as the cost of a false positive (FP).
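For the binary case, the mean of sensitivity and specificity can be computed directly from confusion-matrix counts. The following is a sketch only: the function name `binary_balanced_accuracy` and the counts are made up for illustration and do not come from any dataset discussed here.

```python
def binary_balanced_accuracy(tp, fn, fp, tn):
    """Mean of sensitivity (TPR) and specificity (TNR) from confusion-matrix counts."""
    sensitivity = tp / (tp + fn)  # recall on the positive class
    specificity = tn / (tn + fp)  # recall on the negative class
    return (sensitivity + specificity) / 2

# Hypothetical counts: 10 positives and 90 negatives.
tp, fn, fp, tn = 8, 2, 9, 81
binary_score = binary_balanced_accuracy(tp, fn, fp, tn)
print(round(binary_score, 4))
```

With these counts, sensitivity is 8/10 and specificity is 81/90, so the result is approximately 0.85.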

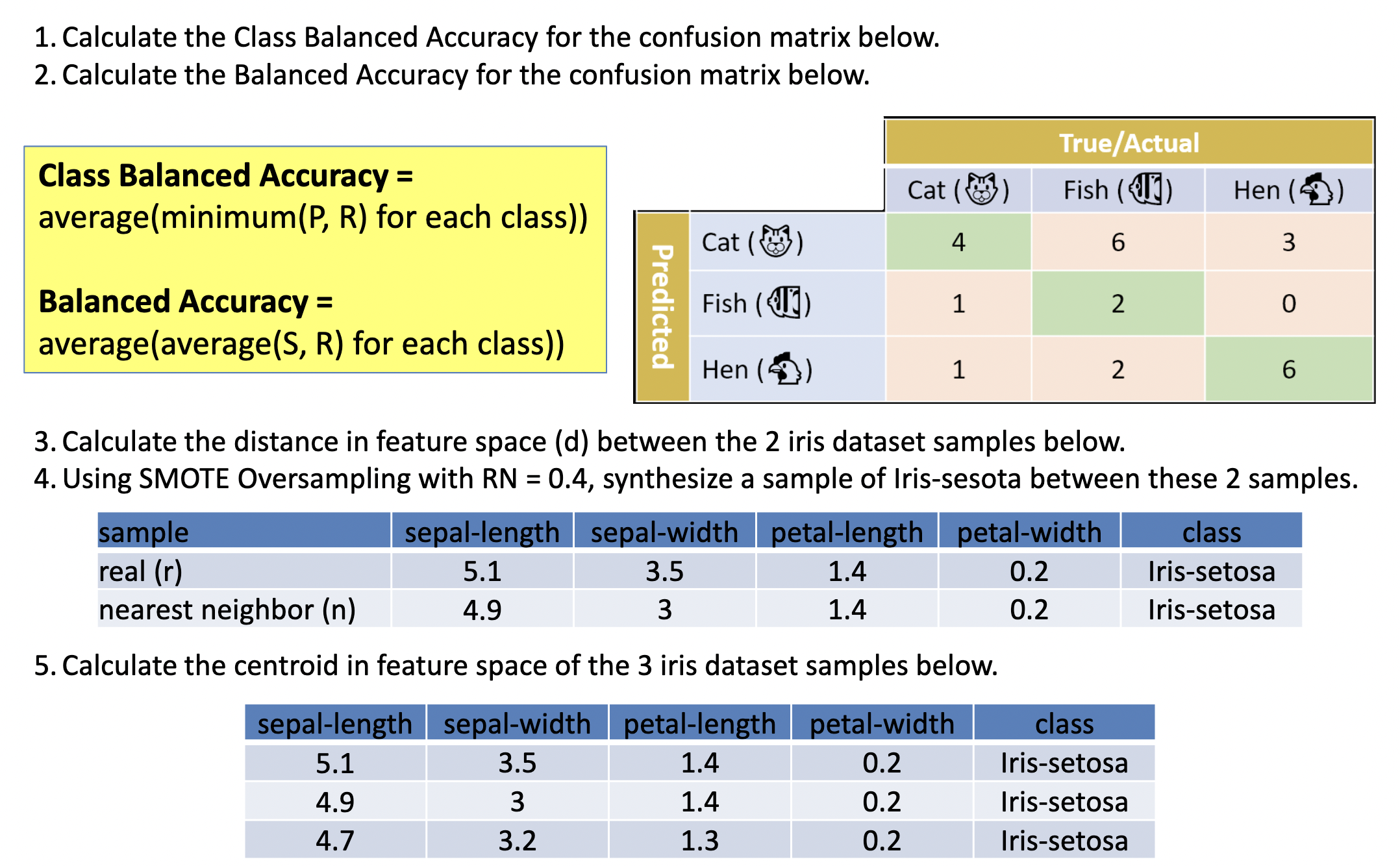

Balanced accuracy is independent of class prevalence, assigns equal importance to both classes, extends naturally to multi-class settings, and most directly captures the key property needed for prevalence comparison: how well a judge distinguishes positive from negative instances.

Accuracy is not always the best validation metric and can sometimes give a misleading impression of a model's effectiveness. For imbalanced classes, a more appropriate metric is balanced accuracy, which provides a global view of the model's performance across all classes and generalizes to multi-class scenarios as the macro average of the class-wise recalls. For two-class confusion matrix evaluation, however, the Matthews correlation coefficient (MCC) has been argued to be more reliable than balanced accuracy, bookmaker informedness, and markedness.
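To illustrate how raw accuracy can mislead on imbalanced data, the sketch below scores a hypothetical classifier that always predicts the majority class, using accuracy, balanced accuracy, and MCC side by side. All function names and counts are assumptions made for this example. Returning 0 for MCC when its denominator is zero is a common convention, not the only possible choice.

```python
import math

def plain_accuracy(tp, fn, fp, tn):
    """Fraction of all examples classified correctly."""
    return (tp + tn) / (tp + fn + fp + tn)

def two_class_balanced_accuracy(tp, fn, fp, tn):
    """Mean of the recall on the positive class and on the negative class."""
    return (tp / (tp + fn) + tn / (tn + fp)) / 2

def matthews_corrcoef_counts(tp, fn, fp, tn):
    """Matthews correlation coefficient computed from confusion-matrix counts."""
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denom == 0:
        return 0.0  # assumed convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denom

# Hypothetical data: 5 positives, 95 negatives, model predicts "negative" every time.
tp, fn, fp, tn = 0, 5, 0, 95

acc = plain_accuracy(tp, fn, fp, tn)
bal = two_class_balanced_accuracy(tp, fn, fp, tn)
mcc = matthews_corrcoef_counts(tp, fn, fp, tn)
print(acc, bal, mcc)
```

Accuracy comes out at 0.95 even though the model never detects a positive example, while balanced accuracy is 0.5 and MCC is 0.0, both of which indicate no better than chance-level discrimination.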
