Back Propagation With TensorFlow (GeeksforGeeks)

What Is Backpropagation in Neural Networks and Why Do We Need It

TensorFlow is one of the most popular deep learning libraries for training deep neural networks efficiently, and this article focuses on implementing backpropagation in TensorFlow. In the backward pass, or backpropagation, the error between the predicted and actual outputs is computed first. For a network with sigmoid activations, the gradients are then calculated using the derivative of the sigmoid function, and the weights and biases are updated accordingly.
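As a rough illustration of the backward pass described above, here is a minimal pure-Python sketch for a single sigmoid neuron. The squared-error loss `0.5 * (a - y) ** 2`, the function names, and the input values are assumptions chosen for illustration; they do not come from the original article.

```python
import math

def sigmoid(z):
    """Logistic sigmoid activation."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_loss(w, b, x, y):
    """Forward pass plus loss for one sigmoid neuron (assumed loss: 0.5 * error^2)."""
    a = sigmoid(w * x + b)
    return 0.5 * (a - y) ** 2

def neuron_backward_step(w, b, x, y, lr=0.1):
    """One backward pass: compute gradients, then update the weight and bias."""
    a = sigmoid(w * x + b)          # forward pass
    error = a - y                   # dL/da: predicted minus actual output
    delta = error * a * (1.0 - a)   # dL/dz, using sigmoid'(z) = a * (1 - a)
    grad_w = delta * x              # dL/dw
    grad_b = delta                  # dL/db
    return w - lr * grad_w, b - lr * grad_b, grad_w, grad_b

# Hypothetical values for a single training example.
w, b, x, y = 0.5, -0.2, 1.5, 1.0
new_w, new_b, grad_w, grad_b = neuron_backward_step(w, b, x, y)
print("loss before:", neuron_loss(w, b, x, y))
print("loss after: ", neuron_loss(new_w, new_b, x, y))
```

The factor `a * (1 - a)` is the derivative of the sigmoid expressed through its own output, which is why the forward-pass activation is reused in the backward pass.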

The Backpropagation Algorithm, by Damien Benveniste

Backpropagation is a common method for training a neural network. There is no shortage of papers online that attempt to explain how backpropagation works, but few include an example with actual numbers. Let's solve a complete forward pass and backpropagation step by step. Multiple libraries (PyTorch, TensorFlow) can help you implement almost any neural network architecture, but this article is not about solving a neural net with one of those libraries.

A step-by-step backpropagation example for regression using a one-hot encoded categorical variable, by hand and in TensorFlow: in this notebook you will see how to use TensorFlow to do a single update step based on stochastic gradient descent with one data point. You will do one forward pass and one backward pass and ...
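The single update step mentioned above might look roughly like the following sketch, which uses `tf.GradientTape` and a manual gradient descent update. The three-category one-hot input, the starting weights, the target, the squared-error loss, and the learning rate are all made-up values for illustration, not the ones from the referenced notebook.

```python
import tensorflow as tf

# Assumed setup: one data point whose categorical feature is one-hot encoded.
x = tf.constant([0.0, 1.0, 0.0])       # category 2 of 3
y = tf.constant(1.0)                   # regression target
w = tf.Variable([0.1, 0.2, 0.3])       # one weight per category
b = tf.Variable(0.0)                   # bias
learning_rate = 0.1

# Forward pass and loss, recorded on the tape so gradients can be derived.
with tf.GradientTape() as tape:
    y_hat = tf.reduce_sum(w * x) + b   # linear prediction
    loss = tf.square(y_hat - y)        # squared error for this single point

# Backward pass: gradients of the loss with respect to each variable.
grad_w, grad_b = tape.gradient(loss, [w, b])

# One plain SGD update, applied by hand: v <- v - learning_rate * grad.
w.assign_sub(learning_rate * grad_w)
b.assign_sub(learning_rate * grad_b)

print("loss:", loss.numpy())
print("updated w:", w.numpy(), "updated b:", b.numpy())
```

Because the input is one-hot, only the weight of the active category receives a non-zero gradient; the other two weights are left unchanged by this step.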

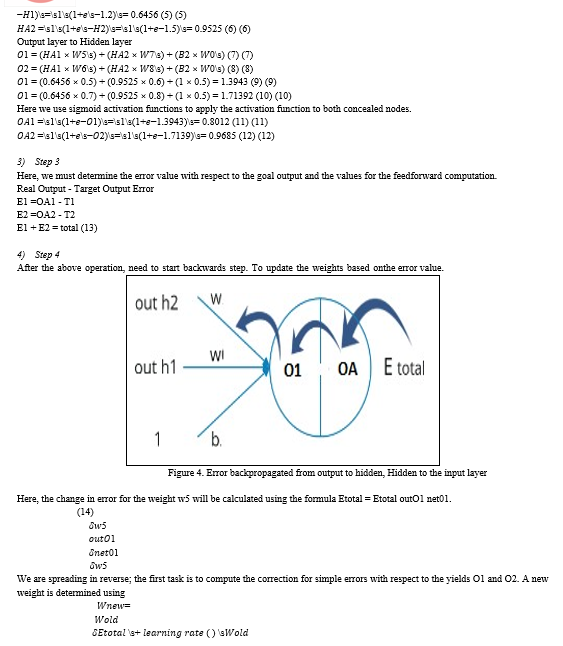

Back Propagation

Backpropagation is, in essence, an application of the chain rule from calculus to compute the gradients (partial derivatives) of a loss function with respect to the weights of the network. The process involves three main steps: the forward pass, the loss calculation, and the backward pass.
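To make the three steps concrete, here is a small pure-Python sketch of a two-weight network. The architecture (a `tanh` hidden unit followed by a linear output), the squared-error loss, and all names and values are assumptions picked for illustration.

```python
import math

def two_layer_forward(w1, w2, x):
    """Step 1, forward pass: hidden activation h and prediction y_hat."""
    h = math.tanh(w1 * x)
    y_hat = w2 * h
    return h, y_hat

def squared_error(y_hat, y):
    """Step 2, loss calculation."""
    return 0.5 * (y_hat - y) ** 2

def two_layer_backward(w1, w2, x, y):
    """Step 3, backward pass: apply the chain rule from the loss back to each weight."""
    h, y_hat = two_layer_forward(w1, w2, x)
    dL_dyhat = y_hat - y                   # dL/dy_hat
    dL_dw2 = dL_dyhat * h                  # dL/dw2 = dL/dy_hat * dy_hat/dw2
    dL_dh = dL_dyhat * w2                  # propagate the error to the hidden unit
    dL_dw1 = dL_dh * (1.0 - h ** 2) * x    # dL/dw1 = dL/dh * dh/dz * dz/dw1
    return dL_dw1, dL_dw2

# Hypothetical weights and data point.
w1, w2, x, y = 0.4, -0.7, 2.0, 0.5
g1, g2 = two_layer_backward(w1, w2, x, y)
print("dL/dw1 =", g1, "dL/dw2 =", g2)
```

Each gradient is a product of local derivatives along the path from the loss back to that weight, which is the chain rule applied layer by layer.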

Python: Back Propagation in TensorFlow (Stack Overflow)

In TensorFlow it seems that the entire backpropagation algorithm is performed by a single run of an optimizer on a cost function computed from the output of an MLP or a CNN.

An Intuitive Guide to Back Propagation Algorithm With Example

Learn about backpropagation in TensorFlow, the fundamental algorithm for training neural networks, with step-by-step explanations and practical examples. Backpropagation through time (BPTT) works by unfolding the RNN across time steps and applying backpropagation to the unfolded network. Gradients are computed and accumulated across all relevant time steps, which enables RNNs to learn complex temporal and sequential patterns in data.
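The unfolding and gradient accumulation can be sketched in pure Python for a scalar RNN. The recurrence `h_t = tanh(w * h_{t-1} + u * x_t)`, the loss on the final hidden state only, and all names and values below are simplifying assumptions for illustration.

```python
import math

def rnn_forward(w, u, xs, h0=0.0):
    """Unfold the scalar RNN over the sequence and return every hidden state."""
    hs = [h0]
    for x_t in xs:
        hs.append(math.tanh(w * hs[-1] + u * x_t))
    return hs

def rnn_loss(w, u, xs, y):
    """Assumed loss: squared error on the final hidden state only."""
    return 0.5 * (rnn_forward(w, u, xs)[-1] - y) ** 2

def bptt(w, u, xs, y):
    """Backpropagate through the unfolded network, accumulating gradients over time."""
    hs = rnn_forward(w, u, xs)
    dh = hs[-1] - y                        # dL/dh at the last time step
    dw = du = 0.0
    for t in range(len(xs), 0, -1):        # walk backward through time
        dz = dh * (1.0 - hs[t] ** 2)       # through tanh at step t
        dw += dz * hs[t - 1]               # shared weight w: add this step's contribution
        du += dz * xs[t - 1]               # shared weight u: add this step's contribution
        dh = dz * w                        # pass the gradient to the previous hidden state
    return dw, du

# Hypothetical sequence and parameters.
xs, y_target = [1.0, -0.5, 0.25], 0.3
w_rec, u_in = 0.8, 0.5
dw, du = bptt(w_rec, u_in, xs, y_target)
print("dL/dw =", dw, "dL/du =", du)
```

Because the same `w` and `u` are reused at every time step, their gradients are sums of per-step contributions, which is the accumulation across time steps that the text refers to.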
