Autoencoders

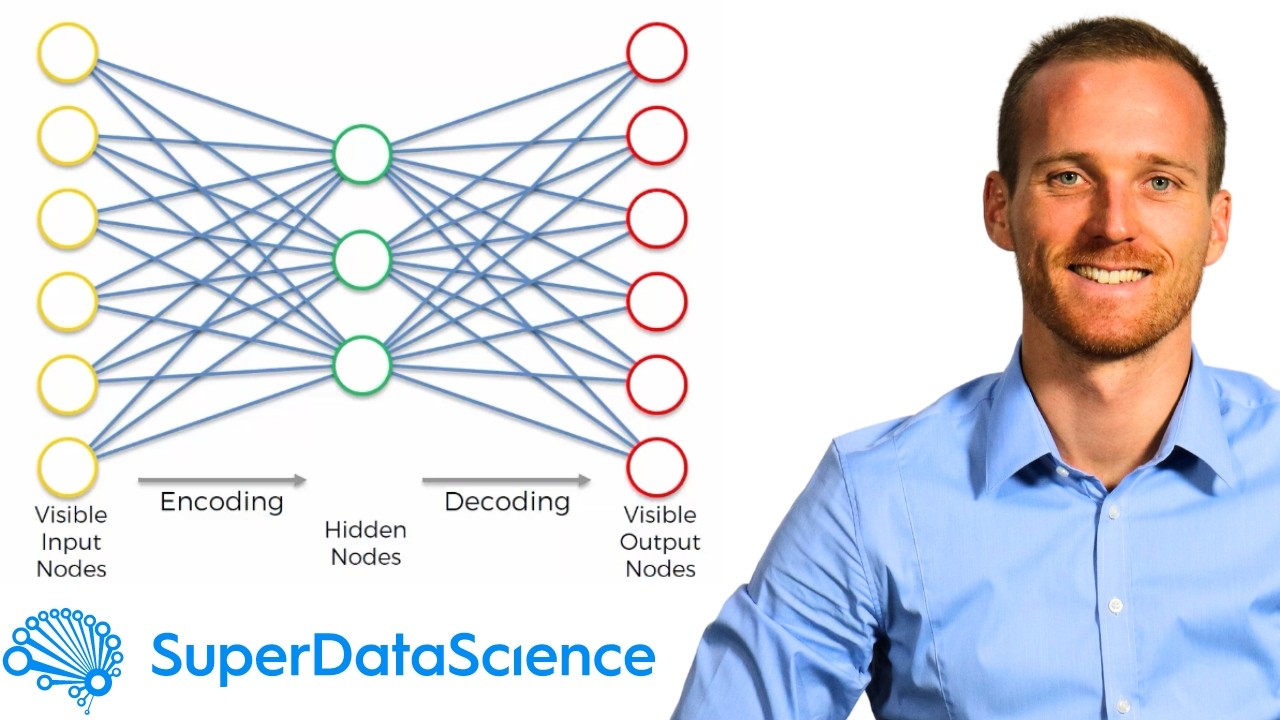

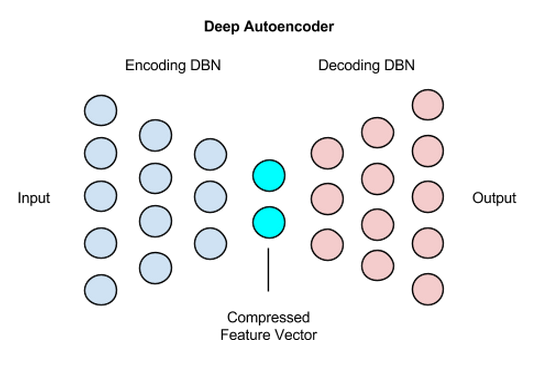

Autoencoders are neural networks that compress input data into a smaller representation and then reconstruct the input from it, which forces the model to learn the most important patterns in the data. An autoencoder can be built from a single-layer encoder and a single-layer decoder, but using several layers in each (a deep autoencoder) lets the network learn more complex, nonlinear mappings and typically yields better compression than a shallow one.
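
A minimal sketch of that compress-then-reconstruct loop is below. It is written in plain NumPy rather than a deep learning framework so that it stays self-contained: a one-hidden-layer autoencoder whose 2-unit tanh code is narrower than the 8-dimensional input, trained by full-batch gradient descent on the mean squared reconstruction error. The function name `train_autoencoder`, the layer sizes, the learning rate and the synthetic data are illustrative assumptions, not part of the original text.

```python
import numpy as np


def train_autoencoder(X, code_dim, lr=0.2, epochs=2000, seed=0):
    """Train x -> tanh(x @ W1 + b1) -> h @ W2 + b2 by full-batch gradient
    descent on the mean squared reconstruction error."""
    rng = np.random.default_rng(seed)
    d = X.shape[1]
    params = {
        "W1": rng.normal(0.0, 0.1, (d, code_dim)),  # encoder weights
        "b1": np.zeros(code_dim),
        "W2": rng.normal(0.0, 0.1, (code_dim, d)),  # decoder weights
        "b2": np.zeros(d),
    }
    losses = []
    for _ in range(epochs):
        H = np.tanh(X @ params["W1"] + params["b1"])  # encoder: compressed code
        X_hat = H @ params["W2"] + params["b2"]        # decoder: reconstruction
        err = X_hat - X
        losses.append(float(np.mean(err ** 2)))
        # Backpropagate the mean squared error through both layers.
        g_out = 2.0 * err / err.size
        g_pre = (g_out @ params["W2"].T) * (1.0 - H ** 2)  # tanh'(a) = 1 - tanh(a)**2
        grads = {
            "W2": H.T @ g_out,
            "b2": g_out.sum(axis=0),
            "W1": X.T @ g_pre,
            "b1": g_pre.sum(axis=0),
        }
        for name, grad in grads.items():
            params[name] -= lr * grad
    return params, losses


# Hypothetical data: 8-dimensional points generated from 2 hidden factors.
rng = np.random.default_rng(1)
Z = rng.normal(size=(200, 2))                   # two hidden factors per sample
X = Z @ rng.normal(size=(2, 8))                 # observed 8-dimensional data
X = 0.5 * (X - X.mean(axis=0)) / X.std(axis=0)  # center, scale to std 0.5 (keeps tanh near-linear)
params, losses = train_autoencoder(X, code_dim=2)
codes = np.tanh(X @ params["W1"] + params["b1"])  # 2-number code for each sample
print(f"reconstruction MSE: {losses[0]:.4f} -> {losses[-1]:.4f}")
```

Because the code layer is narrower than the input, the network cannot simply copy its input; the reconstruction error only falls if the code keeps the directions that explain most of the data. A deep autoencoder would stack more layers on each side of the same bottleneck.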

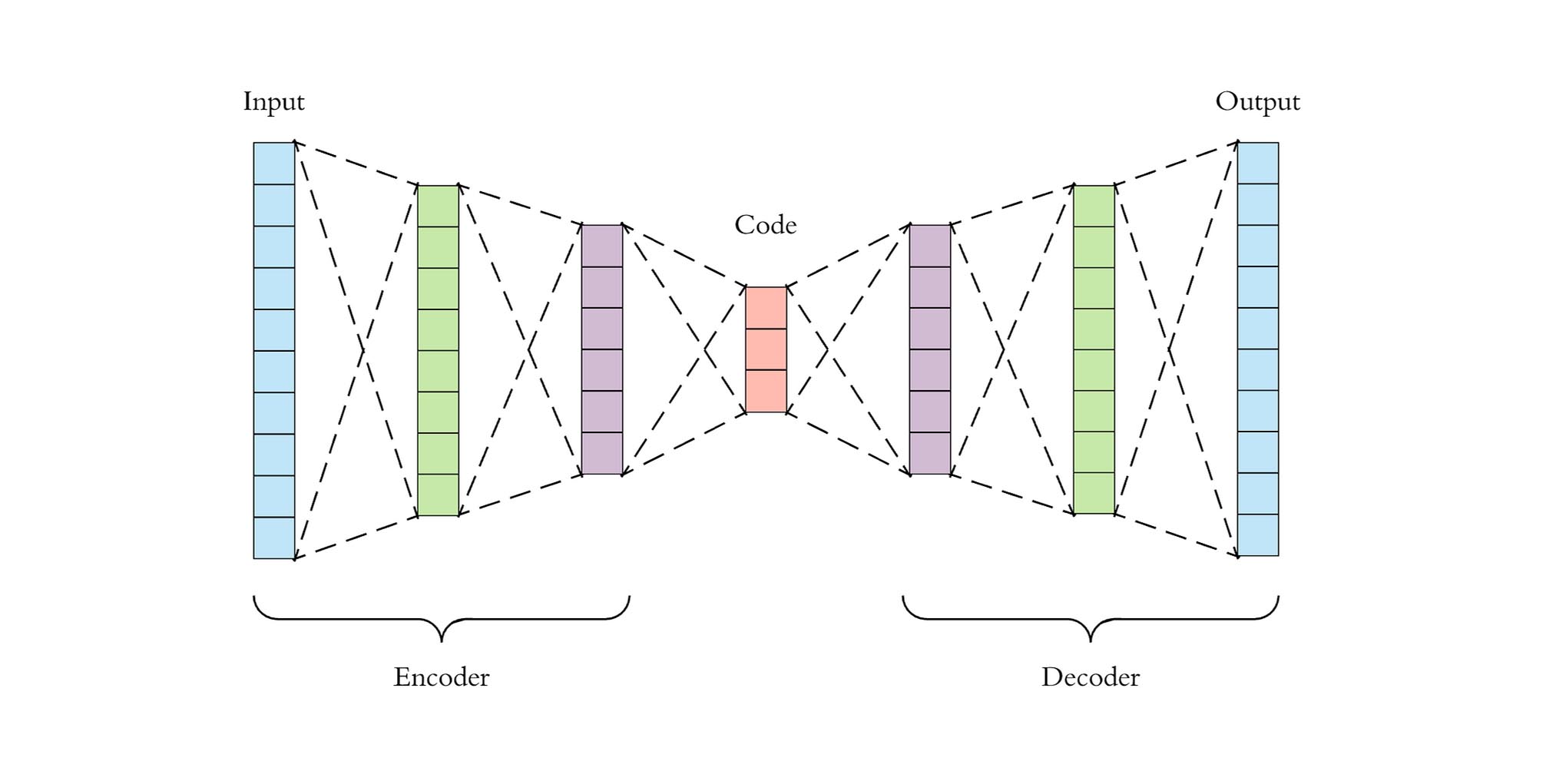

This tutorial introduces autoencoders with three examples: the basics, image denoising, and anomaly detection. An autoencoder is a special type of neural network that is trained to copy its input to its output.

Autoencoders are a specific subset of encoder-decoder architectures that are trained via unsupervised learning to reconstruct their own input data. Because they do not rely on labeled training data, autoencoders are not considered a supervised learning method.

Sparse autoencoders add a sparsity constraint to the loss function, usually a penalty term based on the KL divergence between a small target activation level and each hidden neuron's average activation. This encourages the network to activate only a small subset of hidden neurons for any given input. The result is a sparse, distributed code that often captures more interpretable features than a standard autoencoder.

Denoising autoencoders, introduced by Pascal …, are trained to reconstruct the original, clean input from a deliberately corrupted copy of it.
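
The sparsity penalty described above can be sketched as follows. This assumes sigmoid hidden activations, so each unit's average activation rho_hat_j over a batch lies in (0, 1), and uses the common form loss = reconstruction error + beta * sum_j KL(rho || rho_hat_j), with KL(rho || rho_hat_j) = rho log(rho / rho_hat_j) + (1 - rho) log((1 - rho) / (1 - rho_hat_j)). The function names and the values rho = 0.05 and beta = 3.0 are illustrative assumptions, not taken from the original text.

```python
import numpy as np


def kl_sparsity_penalty(H, rho=0.05, eps=1e-8):
    """Sum over hidden units of KL(rho || rho_hat_j).

    H holds sigmoid activations with shape (batch, hidden_units); rho_hat_j is
    the average activation of unit j over the batch.
    """
    rho_hat = np.clip(H.mean(axis=0), eps, 1.0 - eps)  # avoid log(0)
    kl = rho * np.log(rho / rho_hat) + (1.0 - rho) * np.log((1.0 - rho) / (1.0 - rho_hat))
    return float(kl.sum())


def sparse_autoencoder_loss(X, X_hat, H, beta=3.0, rho=0.05):
    """Mean squared reconstruction error plus the weighted sparsity penalty."""
    reconstruction = float(np.mean((X - X_hat) ** 2))
    return reconstruction + beta * kl_sparsity_penalty(H, rho)


# Hidden units that fire at the target rate incur (almost) no penalty;
# units that are active half the time are penalized.
H_sparse = np.full((10, 4), 0.05)
H_dense = np.full((10, 4), 0.5)
print(kl_sparsity_penalty(H_sparse), kl_sparsity_penalty(H_dense))
```

The penalty is zero only when every unit's average activation equals rho, so minimizing it pushes most units to stay quiet for most inputs, which is the "small subset of hidden neurons" behaviour the text describes.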

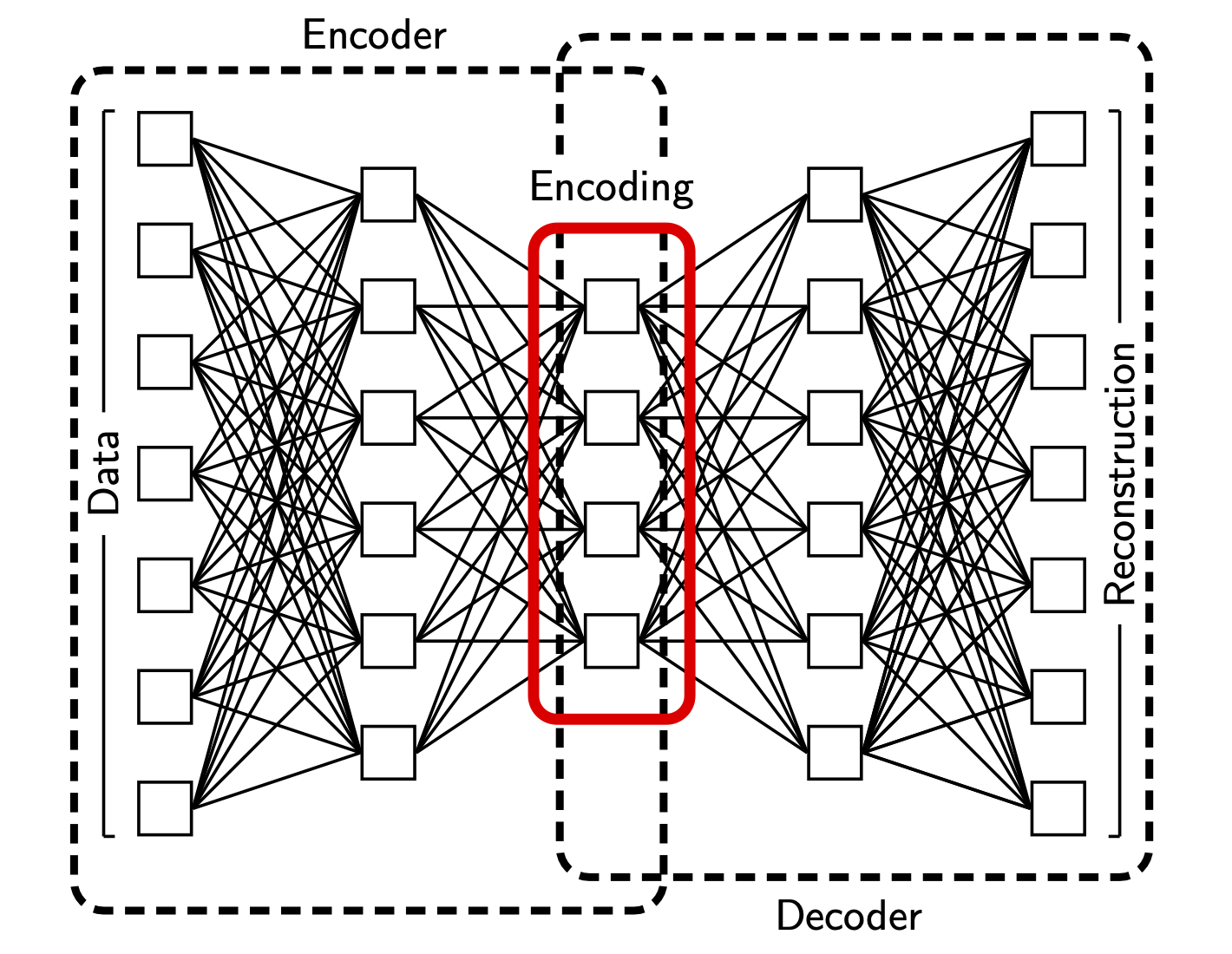

Autoencoder-based models have become a fundamental component of unsupervised and self-supervised learning in natural language processing (NLP), enabling models to learn compact latent representations through input reconstruction. Reconstruction-driven objectives span a wide range of models, from early denoising autoencoders to probabilistic variational autoencoders (VAEs) and transformer-based masked autoencoding.

Architecturally, autoencoders have evolved from basic forms, such as sparse and denoising autoencoders, to more advanced ones such as variational, adversarial, and convolutional autoencoders, among others. As a family of unsupervised learning algorithms, autoencoders seek insight into data by learning compressed versions of it, in other words, by finding a good lower-dimensional feature representation of the same dataset. They can compress, reconstruct, and denoise data, and have applications in anomaly detection, image inpainting, and information retrieval.
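
For anomaly detection, one common recipe is to fit an autoencoder on normal data only and flag inputs that it reconstructs poorly. The sketch below keeps this short and deterministic by assuming a linear autoencoder: under squared error its optimal weights span the same subspace as PCA, so it is computed with an SVD instead of gradient training. The function names, the synthetic data and the 99th-percentile threshold are illustrative assumptions, not from the original text.

```python
import numpy as np


def fit_linear_autoencoder(X_train, code_dim):
    """Closed-form optimum of a linear autoencoder under squared error:
    the top principal directions of the centered training data."""
    mean = X_train.mean(axis=0)
    _, _, Vt = np.linalg.svd(X_train - mean, full_matrices=False)
    W = Vt[:code_dim].T  # (d, code_dim): encoder is W.T, decoder is W
    return mean, W


def reconstruction_error(X, mean, W):
    """Per-sample mean squared error between inputs and reconstructions."""
    code = (X - mean) @ W        # encode: project to code_dim numbers
    X_hat = code @ W.T + mean    # decode: map back to input space
    return np.mean((X - X_hat) ** 2, axis=1)


# Hypothetical "normal" data: 10-D points near a 2-D subspace, plus small noise.
rng = np.random.default_rng(0)
A = rng.normal(size=(2, 10))
X_train = rng.normal(size=(500, 2)) @ A + 0.05 * rng.normal(size=(500, 10))
mean, W = fit_linear_autoencoder(X_train, code_dim=2)
threshold = np.percentile(reconstruction_error(X_train, mean, W), 99)

X_normal = rng.normal(size=(5, 2)) @ A   # same structure as the training data
X_odd = 3.0 * rng.normal(size=(5, 10))   # ignores that structure
flags_normal = reconstruction_error(X_normal, mean, W) > threshold
flags_odd = reconstruction_error(X_odd, mean, W) > threshold
print(flags_normal, flags_odd)
```

Inputs that share the structure of the training data are reconstructed almost perfectly, while inputs that do not lose most of their energy in the bottleneck and exceed the threshold. A nonlinear or deep autoencoder follows the same scoring logic with a learned encoder and decoder.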

