Artists Finally Have A Tool To Fight Back Against AI Theft

Artists Fight Back Against AI Using Their Work (South African Art Times)

Artists face AI image generators copying their styles without consent. Cara, an artist-run platform, uses Glaze technology to protect creators by disrupting unauthorized AI training. A separate tool, called Nightshade, is intended as a way to fight back against AI companies that use artists’ work to train their models without the creators’ permission.

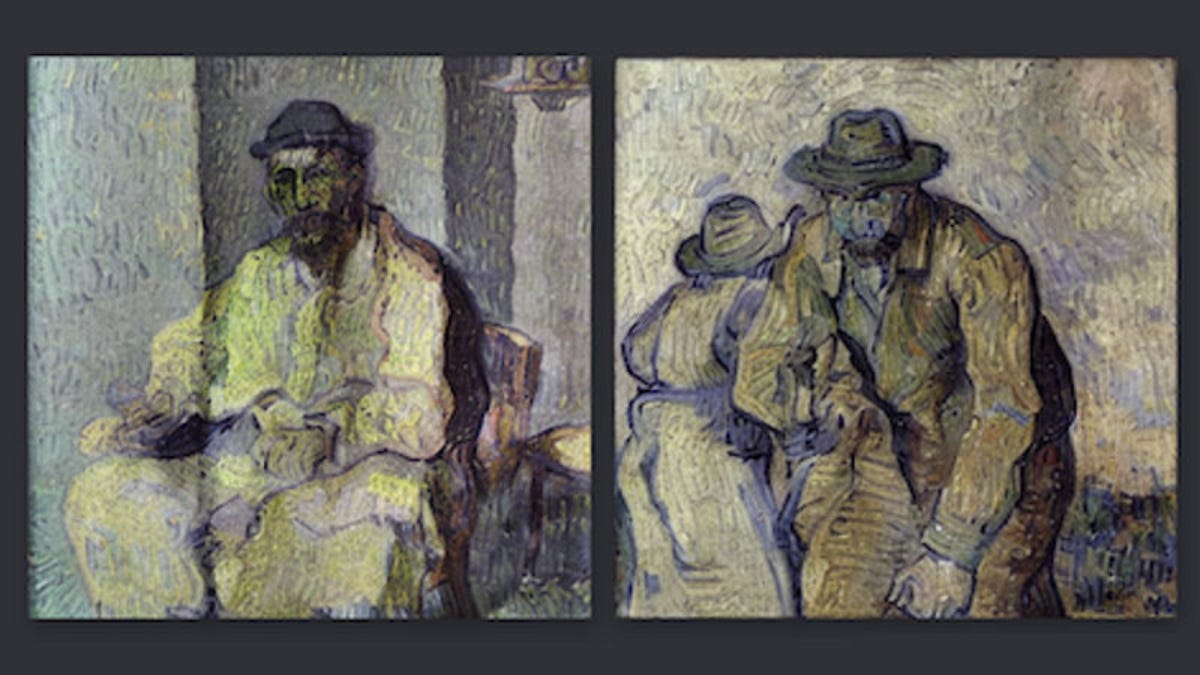

Artists Finally Have A Tool To Help Fight Back Against AI Platforms

The first painting released to the world that uses Glaze, a protective technology against unethical AI/ML models, developed by the @uchicago team led by @ravenben. Media companies making ads or animation are always looking for cheaper ways to produce content, and using AI is cheaper than hiring artists. The software that combs the internet for data is loosely referred to as AI. Researchers at the University of Chicago have created software called Glaze to keep AI models from learning a particular artist's style. It is part of a growing effort to prevent AI art from including the work of human artists without attribution. The team of computer science researchers wants to level the playing field and arm artists with the tools they need to fight back against unauthorized use of their work in training new AI models.

Artists Fight Back: Tech Weapons Deployed Against AI Copycats

In October 2023, University of Chicago academics revealed Nightshade, a new tool that lets artists imperceptibly ‘poison’ AI models which seek to exploit art published online. Nightshade makes very subtle changes to the pixels and attached data (metadata) of an image. Artists are pushing back against AI-generated art, raising legal, ethical, and creative concerns that span copyright battles, protests, and tools protecting creators in the age of AI. Artists have been fighting back on a number of fronts against artificial intelligence companies that they say steal their works to train AI models, including by launching class action lawsuits. Project leads Shawn Shan and Professor Ben Zhao launched two groundbreaking tools: Glaze, a defensive tool to prevent artists’ styles from being mimicked, and Nightshade, an offensive tool to corrupt AI models.
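
To make the idea of "very subtle changes to the pixels" concrete, here is a minimal sketch of a bounded pixel perturbation. It is not Nightshade's or Glaze's actual method: those tools choose their changes deliberately, whereas this example uses plain random noise. The function names `perturb` and `max_change`, the `budget` parameter, and the tiny sample image are all hypothetical and exist only for illustration.

```python
import random


def perturb(pixels, budget=2, seed=0):
    """Return a copy of `pixels` with every 0-255 value shifted by at most `budget`.

    Illustration only: random noise, not the targeted changes a real
    protection tool would compute.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    out = []
    for row in pixels:
        new_row = []
        for value in row:
            shifted = value + rng.randint(-budget, budget)
            # Clamp to the valid 8-bit range; clamping never increases the change.
            new_row.append(max(0, min(255, shifted)))
        out.append(new_row)
    return out


def max_change(original, altered):
    """Largest absolute per-pixel difference between two same-sized images."""
    return max(
        abs(a - b)
        for row_a, row_b in zip(original, altered)
        for a, b in zip(row_a, row_b)
    )


# Hypothetical 2x3 grayscale image.
image = [[0, 64, 128], [192, 255, 32]]
altered = perturb(image)
print("largest per-pixel change:", max_change(image, altered))
```

A shift of one or two levels out of 255 is generally too small for a viewer to notice, which is the sense in which such changes are described as imperceptible.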
