AI Training Data Bias

The 5 Leading Causes of AI Bias in Training Data

Detecting and mitigating AI bias in training data is essential to building fair, transparent, and ethical AI systems that serve everyone equally and responsibly. AI bias, also called machine learning bias or algorithm bias, refers to biased results caused by human biases that skew the original training data or the AI algorithm, leading to distorted outputs and potentially harmful outcomes.


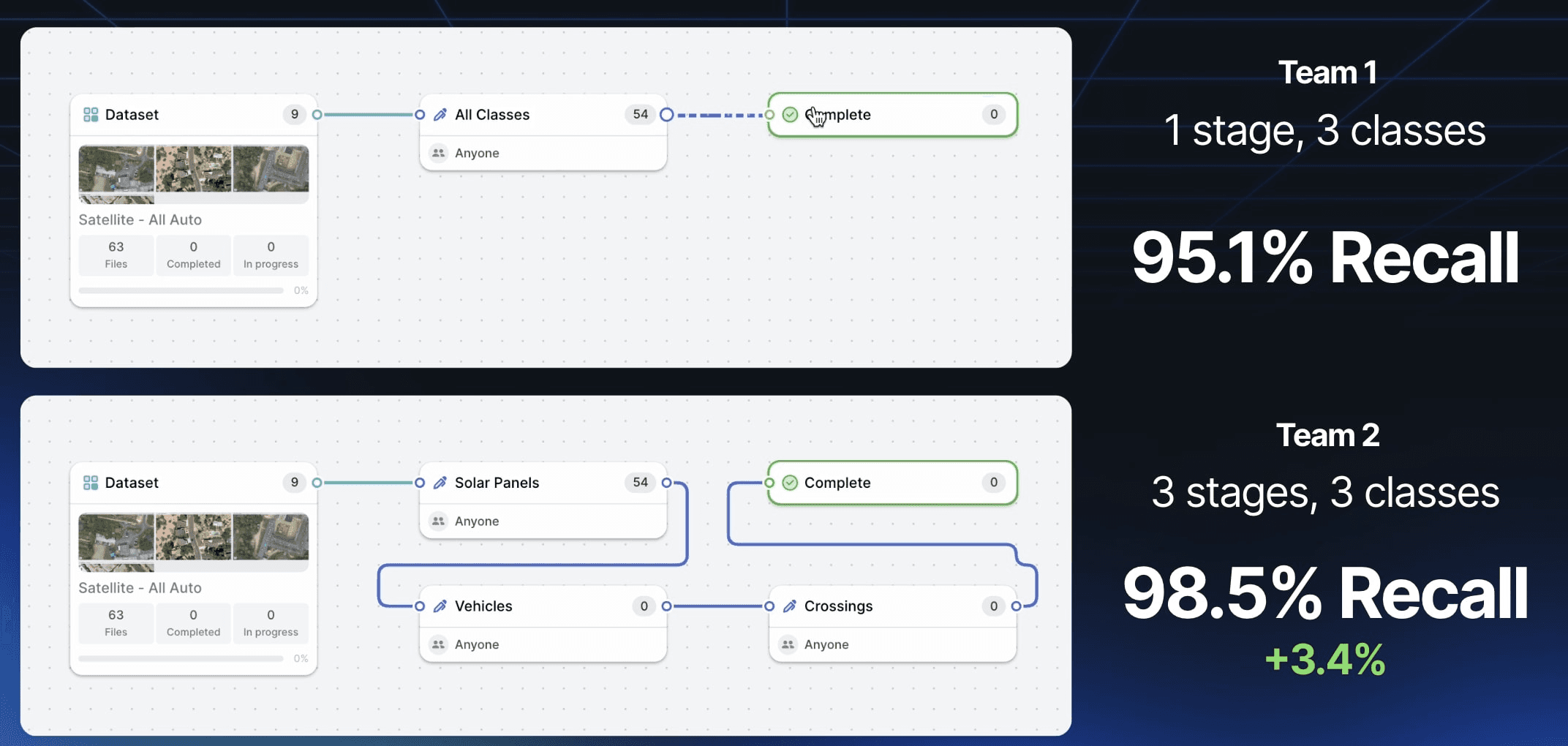

Training data bias occurs when datasets contain systematic errors or prejudices, so that they do not accurately represent the real-world population or scenario the AI model will encounter. This bias emerges from historical inequities, limited data collection methods, or skewed sampling processes.

No bias, no problem? Representative training data can improve AI, but it is important to recognize that accurate representation in AI tools can be weaponized against marginalized groups. For example, the accuracy of facial recognition technology means that it can cause great harm in the wrong hands.

The presence of bias in AI training data is not merely a technical flaw; it has profound ethical implications and translates into tangible, often severe, real-world consequences that disproportionately affect marginalized and vulnerable populations. MIT researchers developed an AI debiasing technique that improves the fairness of a machine learning model by boosting its performance for subgroups that are underrepresented in its training data, while maintaining its overall accuracy.
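Two of the ideas above, a dataset that does not match the population it is meant to represent and model performance that differs across subgroups, can be checked with very little code. The following is a minimal sketch, not the MIT technique or any specific library's API: the function names `representation_gap` and `accuracy_by_group`, the group labels, and the reference shares are all hypothetical and chosen only for illustration.

```python
from collections import Counter, defaultdict


def representation_gap(sample_groups, reference_shares):
    """Dataset share minus reference share, per group.

    Positive values mean the group is over-represented in the sample,
    negative values mean it is under-represented.
    """
    if not sample_groups:
        raise ValueError("sample_groups must not be empty")
    counts = Counter(sample_groups)
    total = len(sample_groups)
    # Include groups that appear in only one of the two sources.
    all_groups = set(counts) | set(reference_shares)
    return {
        g: counts.get(g, 0) / total - reference_shares.get(g, 0.0)
        for g in all_groups
    }


def accuracy_by_group(y_true, y_pred, groups):
    """Fraction of correct predictions within each group."""
    correct = defaultdict(int)
    seen = defaultdict(int)
    for truth, pred, g in zip(y_true, y_pred, groups):
        seen[g] += 1
        correct[g] += int(truth == pred)
    return {g: correct[g] / seen[g] for g in seen}


# Hypothetical toy data: group "a" dominates the sample, while the
# assumed reference population is split evenly between "a" and "b".
sample = ["a"] * 8 + ["b"] * 2
gaps = representation_gap(sample, {"a": 0.5, "b": 0.5})
per_group = accuracy_by_group([1, 0, 1, 1], [1, 0, 0, 1], ["a", "a", "b", "b"])
print(gaps)
print(per_group)
```

In this toy case group "a" is over-represented by about 0.3 and group "b" is under-represented by the same amount, and the per-group accuracies differ even though a single overall accuracy figure would hide that difference. The reference shares are an assumption supplied by the analyst; in practice they would come from a census or another trusted description of the target population.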

Artificial Intelligence Data Fairness and Bias (Coursera)

A widely discussed concern about generative AI is that systems trained on biased data can perpetuate and even amplify those biases, leading to inaccurate outputs or unfair decisions. One paper on structured data categorizes its proposed fairness learning process into pre-training and training techniques for mitigating bias; during training, the biases learned by a causal model are mitigated.

Bias in AI training data shapes the answers a system gives, so it helps to understand what causes it, how it affects results, and what can be done to build fairer AI systems. Bias in machine learning occurs when the algorithms used to analyze data reflect and amplify the biases present in the data itself. This can happen at various stages of the machine learning process, including data collection, data preparation, model selection, and model deployment.
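As one illustration of a mitigation applied at the data preparation stage, before any model is trained, each example can be given a weight inversely proportional to the size of its group, so that small groups are not drowned out during training. This is a generic reweighting heuristic sketched under stated assumptions; it is not the method of the paper mentioned above, and the function name `balanced_group_weights` and the group labels are hypothetical.

```python
from collections import Counter


def balanced_group_weights(groups):
    """Per-example weights so every group has the same total weight.

    Each example in group g gets n / (k * count_g), where n is the
    number of examples and k is the number of distinct groups.
    """
    if not groups:
        raise ValueError("groups must not be empty")
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    per_group = {g: n / (k * c) for g, c in counts.items()}
    return [per_group[g] for g in groups]


# Hypothetical toy data: 8 examples from group "a", 2 from group "b".
groups = ["a"] * 8 + ["b"] * 2
weights = balanced_group_weights(groups)
print(weights)
```

With these weights the two groups contribute equally in total, and the weights still sum to the number of examples. Many training APIs accept per-example weights of this kind (for instance, the `sample_weight` argument that many scikit-learn estimators take in `fit`). Reweighting only rebalances influence; it does not correct labels or features that are themselves biased.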
