AI Scaling Laws

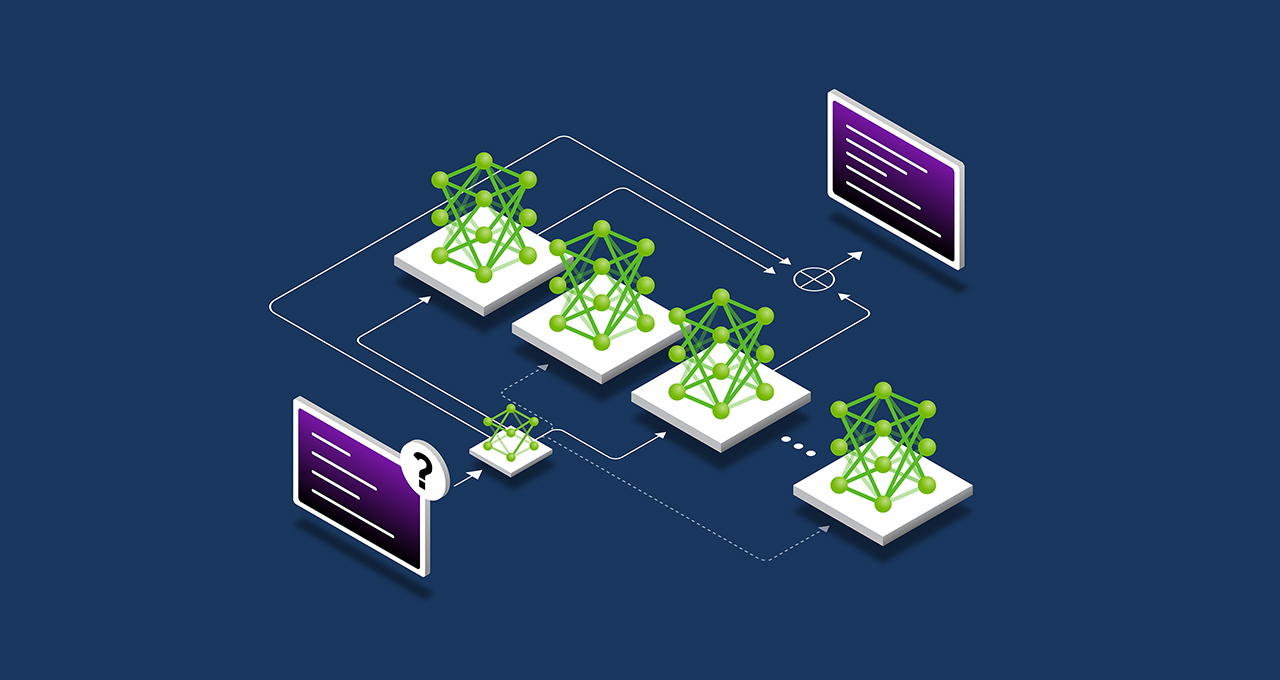

Rethinking Scaling Laws in AI Development (Unite.AI)

Scaling laws in AI describe how model performance improves as factors like model size, training data, or compute increase. In machine learning, a neural scaling law is an empirical scaling law that describes how neural network performance changes as key factors are scaled up or down. These laws help predict how improving one or more of these factors will affect an AI system's capabilities, which makes it easier to plan and build better systems.
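A neural scaling law is empirical, so it is usually obtained by measuring loss at a few scales, fitting a curve, and extrapolating. The sketch below assumes the pure power-law form loss ≈ a·x^(−α), with no irreducible-loss term. That form is a straight line in log-log space, so ordinary least squares on the logarithms recovers α. The function names and the "measurements" are hypothetical; the data are generated from a known law purely for illustration.

```python
import math

def fit_power_law(xs, losses):
    """Fit loss ≈ a * x**(-alpha) by least squares on log(loss) vs log(x).

    Assumption: a pure power law, i.e. log(loss) = log(a) - alpha * log(x).
    Returns (a, alpha).
    """
    if len(xs) != len(losses) or len(xs) < 2:
        raise ValueError("need at least two (x, loss) pairs of equal length")
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in losses]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((u - mean_x) ** 2 for u in lx)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
    slope = sxy / sxx                      # slope in log-log space is -alpha
    intercept = mean_y - slope * mean_x    # intercept is log(a)
    return math.exp(intercept), -slope

def predict_loss(a, alpha, x):
    """Extrapolate the fitted law to a new scale x (e.g. parameter count)."""
    return a * x ** (-alpha)

# Hypothetical measurements generated from loss = 50 * N**-0.1 (illustrative only).
sizes = [1e6, 1e7, 1e8, 1e9]
losses = [50 * n ** -0.1 for n in sizes]
a, alpha = fit_power_law(sizes, losses)
print(round(alpha, 3))  # prints 0.1
print(round(predict_loss(a, alpha, 1e10) / predict_loss(a, alpha, 1e9), 4))  # prints 0.7943
```

Under this form, scaling x by a factor k multiplies the predicted loss by k^(−α) regardless of a. Published fits often add an irreducible-loss constant, which this sketch deliberately omits.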

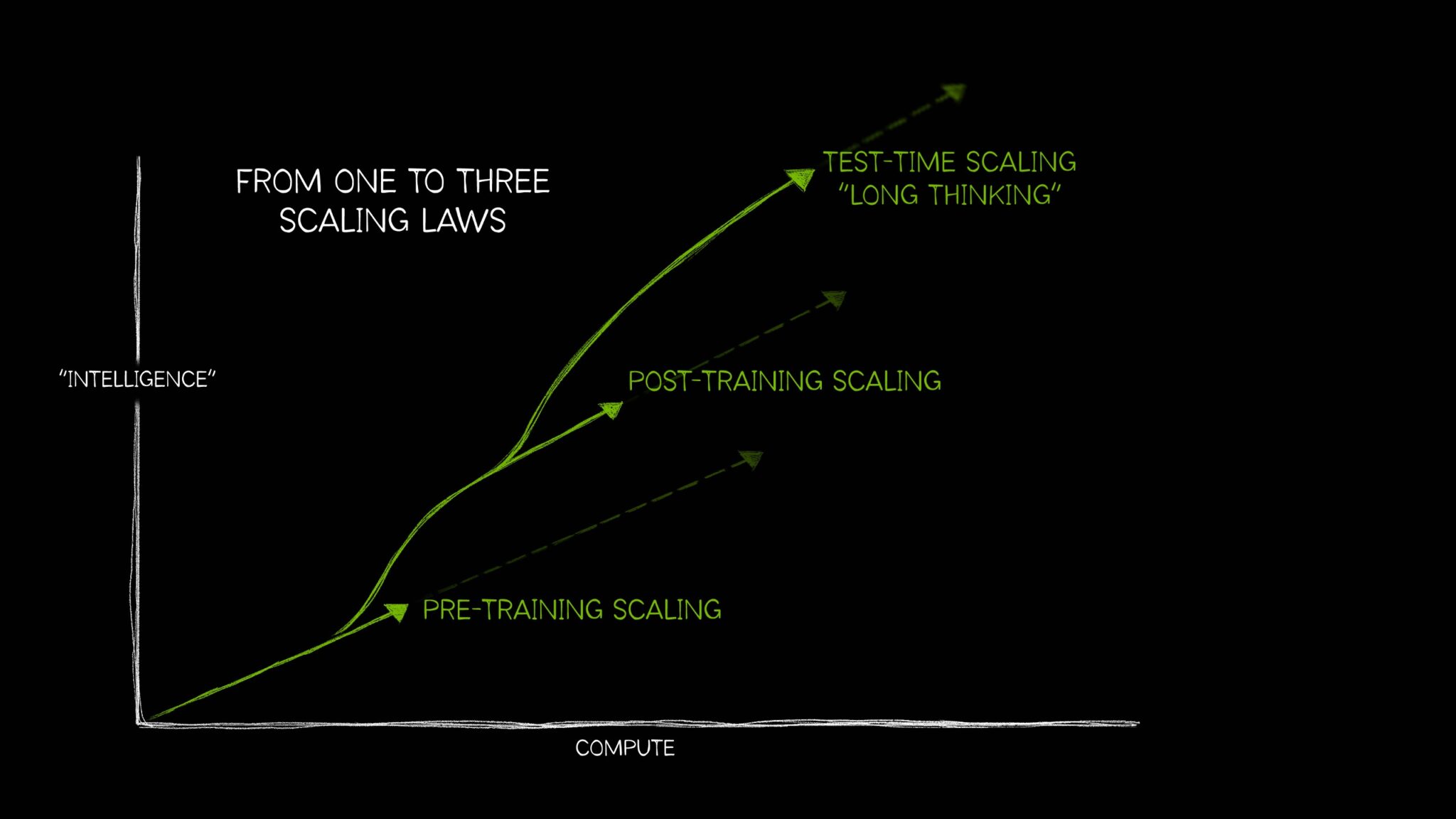

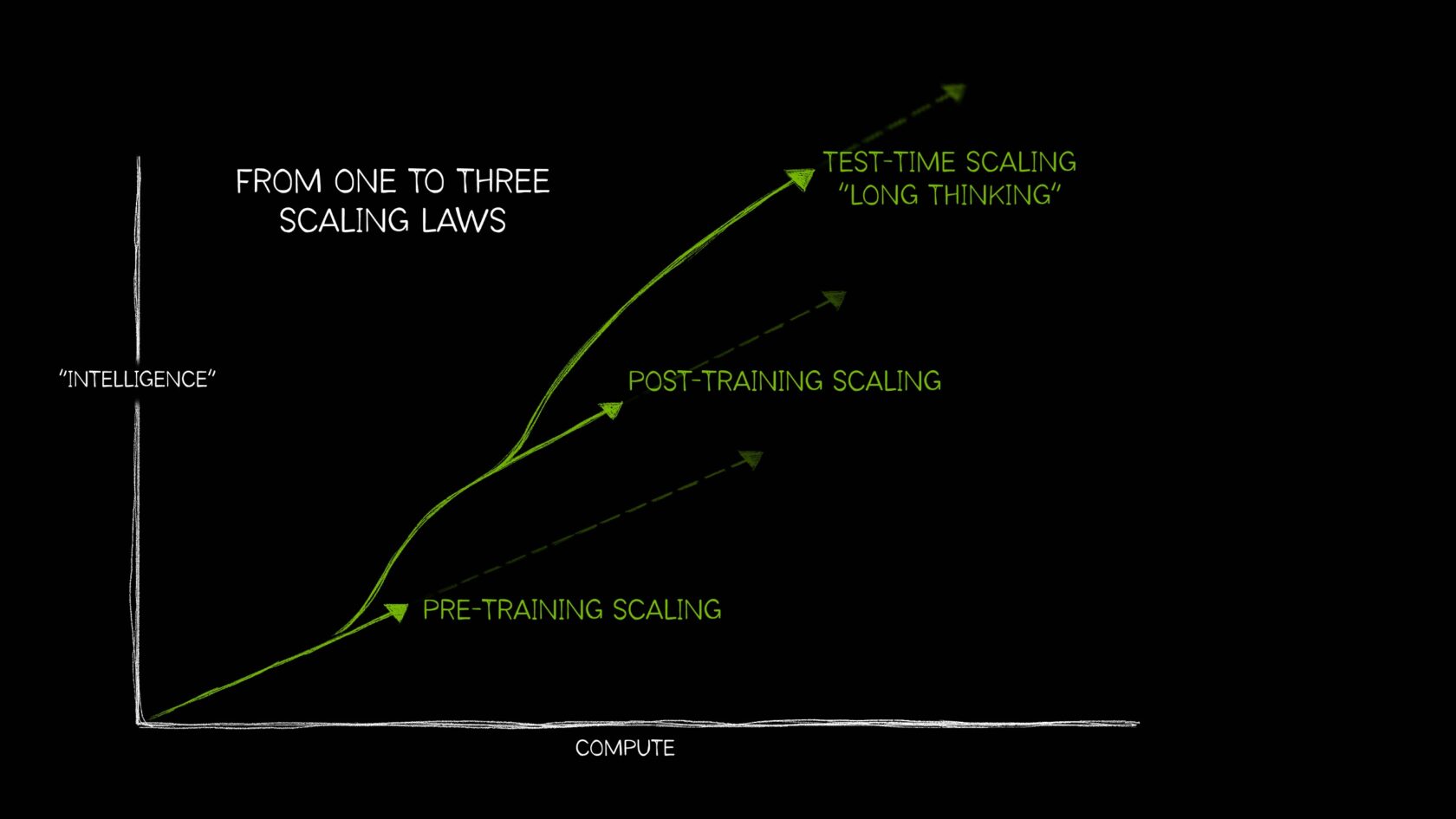

How Scaling Laws Drive Smarter, More Powerful AI (NVIDIA Blog)

Scaling laws in artificial intelligence are empirical relationships that describe how the performance of neural networks improves as key factors, such as model size (number of parameters), training data volume, and computational resources, are increased. Their evolution, from the foundational trio of model size, data, and compute identified by OpenAI to the more nuanced concepts of post-training scaling and test-time scaling championed by NVIDIA, underscores the complexity and dynamism of modern AI.

A blog post by Anuraag Rath on Hugging Face.
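Test-time scaling spends extra compute at inference rather than during training. A toy best-of-n model gives some intuition for why that can help. This model is an assumption of the sketch, not NVIDIA's formulation: each sampled answer is independently correct with probability p, and a perfect verifier picks a correct answer whenever one exists, so the success probability is 1 − (1 − p)^n. The function name is hypothetical.

```python
def best_of_n_success(p: float, n: int) -> float:
    """Probability that at least one of n independent samples is correct.

    Toy model of test-time scaling (an assumption, not a published law):
    each sample is correct with probability p, and a perfect verifier
    selects a correct sample whenever one exists.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be in [0, 1]")
    if n < 1:
        raise ValueError("n must be at least 1")
    return 1.0 - (1.0 - p) ** n

# More inference compute (larger n) raises success probability under this toy model.
for n in (1, 4, 16, 64):
    print(n, round(best_of_n_success(0.1, n), 3))
```

Real samples are correlated and real verifiers are imperfect, so practical gains tend to be smaller than this idealized curve suggests.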

Scaling laws predict AI capability from compute, data, and parameters. Key milestones include the scaling laws of Kaplan et al., the Chinchilla results of Hoffmann et al., and the inference-compute era that followed.

Scaling is one of the most impactful concepts in the history of AI research. For large language models (LLMs), scaling has mostly been studied in the context of pretraining, where rigorous scaling laws have allowed us to clearly define the relationship between compute and performance.

What are scaling laws? At its core, a scaling law in AI describes the mathematical relationship between the performance of a machine learning model (usually measured by its loss, roughly how far its predictions are from the correct answers) and the resources put into it. As Kaplan et al. summarize it: "We study empirical scaling laws for language model performance on the cross-entropy loss. The loss scales as a power law with model size, dataset size, and the amount of compute used for training, with some trends spanning more than seven orders of magnitude."
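The compute, data, and parameter trade-off mentioned above can be made concrete with two widely used approximations, which this sketch assumes rather than derives: training a dense transformer costs about C ≈ 6·N·D FLOPs (N parameters, D tokens), and the Chinchilla results are commonly summarized as roughly 20 training tokens per parameter at the compute-optimal point. Substituting D = r·N into C = 6·N·D gives N = √(C / 6r). The function name is hypothetical.

```python
import math

def chinchilla_allocation(compute_flops: float, tokens_per_param: float = 20.0):
    """Split a training compute budget into parameters N and tokens D.

    Assumptions (rules of thumb, not exact fits):
      * training cost C ≈ 6 * N * D FLOPs for a dense transformer;
      * compute-optimal training uses roughly 20 tokens per parameter.
    Substituting D = r * N into C = 6 * N * D gives N = sqrt(C / (6 * r)).
    """
    if compute_flops <= 0 or tokens_per_param <= 0:
        raise ValueError("compute_flops and tokens_per_param must be positive")
    n_params = math.sqrt(compute_flops / (6.0 * tokens_per_param))
    n_tokens = tokens_per_param * n_params
    return n_params, n_tokens

# Chinchilla itself: 70B parameters trained on 1.4T tokens, C ≈ 5.9e23 FLOPs.
budget = 6 * 70e9 * 1.4e12
n, d = chinchilla_allocation(budget)
print(f"N ≈ {n:.3g} params, D ≈ {d:.3g} tokens")  # prints N ≈ 7e+10 params, D ≈ 1.4e+12 tokens
```

Feeding Chinchilla's own budget back in recovers its 70B/1.4T split, a quick consistency check on the two approximations; a different tokens_per_param moves the optimum along the same compute budget.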