Ai Red Teaming Methodology Explained

Ai Red Teaming Methodology Explained Here we systematically test an ai model using a red teaming methodology to find and fix potential harms, biases and security vulnerabilities before the model is released to the public. This guide provides security professionals with a comprehensive framework for understanding and implementing ai red teaming.

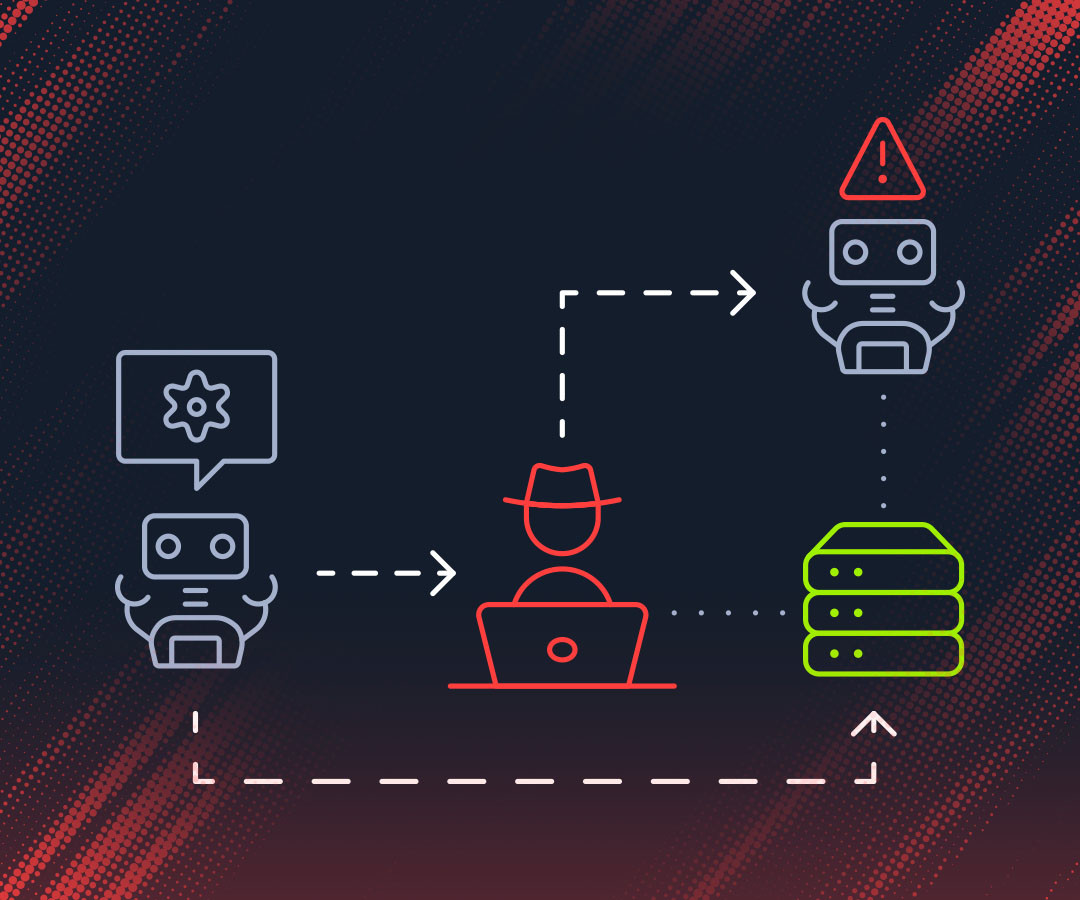

Ai Red Teaming Methodology Secnora Ai red teaming is a structured, proactive security practice where expert teams simulate adversarial attacks on ai systems to uncover vulnerabilities and improve their security and resilience. This article provides a comprehensive exploration of ai red teaming methodology, its evolution, core principles, and practical implementation, equipping security professionals, ai developers, and organizational leaders with the knowledge to safeguard ai driven environments. Ai red teaming is the practice of attacking systems and applications that include ai components in order to identify real security weaknesses. the focus is not on the ai model in isolation, but on how ai is embedded into products and how those features behave under adversarial use. Ai red teaming is a structured, adversarial testing process designed to uncover vulnerabilities in ai systems before attackers do. it simulates real world threats to identify flaws in models, training data, or outputs.

Ai Red Teaming Methodology Secnora Ai red teaming is the practice of attacking systems and applications that include ai components in order to identify real security weaknesses. the focus is not on the ai model in isolation, but on how ai is embedded into products and how those features behave under adversarial use. Ai red teaming is a structured, adversarial testing process designed to uncover vulnerabilities in ai systems before attackers do. it simulates real world threats to identify flaws in models, training data, or outputs. Red teaming is an adversarial testing methodology where skilled testers deliberately attempt to break ai systems by simulating real world attack scenarios. Ai red teaming is the practice of deliberately trying to make an ai system fail, produce harmful outputs, or behave in unintended ways before real users encounter those problems. think of it as hiring an ethical hacker for your ai. This guide offers a structured, hands on introduction to red teaming in generative ai — focused on identifying safety and security vulnerabilities through adversarial testing. This guide offers some potential strategies for planning how to set up and manage red teaming for responsible ai (rai) risks throughout the large language model (llm) product life cycle.

Ai Red Teaming Explained Adversarial Simulation Testing And Capabilities Red teaming is an adversarial testing methodology where skilled testers deliberately attempt to break ai systems by simulating real world attack scenarios. Ai red teaming is the practice of deliberately trying to make an ai system fail, produce harmful outputs, or behave in unintended ways before real users encounter those problems. think of it as hiring an ethical hacker for your ai. This guide offers a structured, hands on introduction to red teaming in generative ai — focused on identifying safety and security vulnerabilities through adversarial testing. This guide offers some potential strategies for planning how to set up and manage red teaming for responsible ai (rai) risks throughout the large language model (llm) product life cycle.

Ai Red Teaming Explained Adversarial Simulation Testing And Capabilities This guide offers a structured, hands on introduction to red teaming in generative ai — focused on identifying safety and security vulnerabilities through adversarial testing. This guide offers some potential strategies for planning how to set up and manage red teaming for responsible ai (rai) risks throughout the large language model (llm) product life cycle.

Comments are closed.