AI, LLMs, and AI Hallucinations: Version 1

Hallucinations in LLM AI Models

If you're curious about the deeper mechanics behind AI hallucinations, let's explore the technical side. We'll look at how LLMs are built, how they generate text, and why they sometimes go wrong. By examining the mechanisms behind hallucinations and the efforts to mitigate them, this review aims to show why the problem matters for the future reliability of AI-driven language systems.
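Most LLMs generate text one token at a time. The model scores candidate next tokens, the scores are turned into probabilities, and one token is sampled. The minimal Python sketch below uses made-up tokens and scores in place of a real model. It shows how a fluent but wrong continuation can be emitted even when the correct one is the most likely, and how a higher sampling temperature makes that happen more often. It illustrates only the sampling step, not how any particular model computes its scores.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens the distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def sample_next_token(tokens, logits, temperature=1.0, rng=random):
    """Draw one token at random, weighted by its probability."""
    probs = softmax(logits, temperature)
    return rng.choices(tokens, weights=probs, k=1)[0]

# Hypothetical candidates for the prompt "The capital of Australia is"
tokens = ["Canberra", "Sydney", "Melbourne"]
logits = [3.0, 2.0, 0.5]  # made-up scores, not taken from any real model

# Show how temperature reshapes the distribution over the same scores
for t in (0.5, 1.0, 2.0):
    print(t, [round(p, 3) for p in softmax(logits, t)])

# Sample many times at temperature 1.0 and count the wrong answers
rng = random.Random(0)
samples = [sample_next_token(tokens, logits, 1.0, rng) for _ in range(1000)]
wrong = sum(s != "Canberra" for s in samples)
print("wrong answers out of 1000:", wrong)
```

Lowering the temperature concentrates probability on the top-scoring token. It cannot help when the model's top score is itself attached to a wrong fact, which is one reason sampling settings alone do not eliminate hallucinations.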

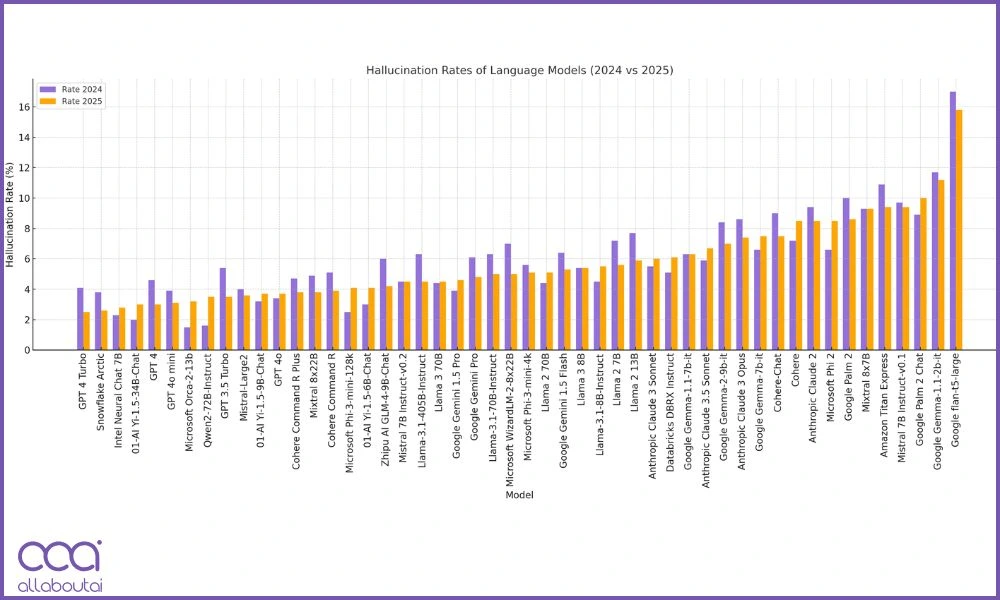

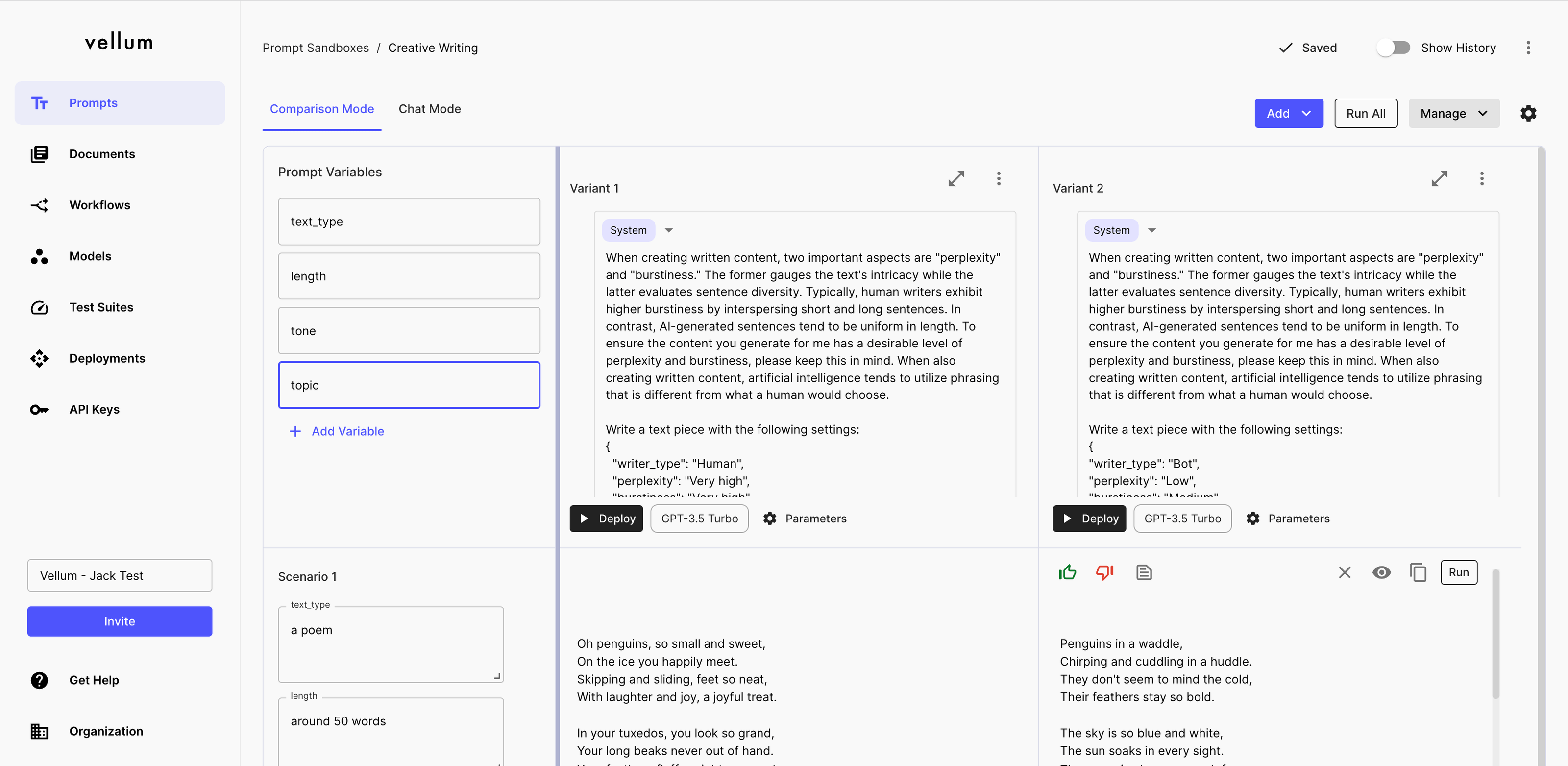

Our benchmark evaluates LLM hallucination rates using a dataset of questions derived from CNN news articles. We built the dataset with an automated web data collection system that pulls articles directly from CNN's RSS feed.

In this work, we present a comprehensive survey and empirical analysis of hallucination attribution in LLMs, introducing a novel framework to determine whether a given hallucination stems from suboptimal prompting or from the model's intrinsic behavior. This research provides a foundational, user-centric understanding of LLM hallucinations, paving the way for improved AI model development and more trustworthy mobile applications.

In the first part of a new 'AI Unpacked' series, Nathan Marlor, our Global Head of Data x AI, explores what AI hallucinations are, why they occur, and how we can address them.
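The benchmark's scoring procedure is not described here. As a minimal sketch, assume each benchmark item stores a question, a reference answer drawn from the source article, and the model's answer. Under that assumption, a hallucination rate can be computed as the share of attempted answers that disagree with the reference. The `Item` type, the abstention phrases, and the exact-match grading rule are all hypothetical stand-ins. A real benchmark would likely need a more forgiving grader, such as human review or a model-based judge.

```python
from dataclasses import dataclass

@dataclass
class Item:
    question: str
    reference: str      # answer taken from the source article (hypothetical field)
    model_answer: str   # answer returned by the model under test

def normalize(text: str) -> str:
    """Lowercase, drop punctuation, and collapse whitespace so trivial differences don't count."""
    kept = "".join(ch for ch in text.lower() if ch.isalnum() or ch.isspace())
    return " ".join(kept.split())

# Hypothetical phrases treated as "the model declined to answer"
ABSTAIN = {normalize(p) for p in ("I don't know", "Unknown", "Not stated")}

def grade(item: Item) -> str:
    """Label one answer 'correct', 'abstain', or 'hallucination' using naive exact match."""
    answer = normalize(item.model_answer)
    if answer in ABSTAIN:
        return "abstain"
    return "correct" if answer == normalize(item.reference) else "hallucination"

def hallucination_rate(items: list[Item]) -> float:
    """Fraction of attempted (non-abstaining) answers that are wrong."""
    labels = [grade(item) for item in items]
    attempted = [lab for lab in labels if lab != "abstain"]
    if not attempted:
        return 0.0
    return attempted.count("hallucination") / len(attempted)

# Made-up items for illustration only
items = [
    Item("Which city hosted the summit?", "Geneva", "Geneva."),
    Item("How many people attended?", "1,200", "About 5,000"),
    Item("Who chaired the session?", "Dr. Lee", "I don't know"),
]
print(hallucination_rate(items))  # → 0.5
```

Excluding abstentions from the denominator means a model that declines to answer is not counted as hallucinating. Whether to do that, or to report abstention rate separately, is a design choice that changes how models rank against each other.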

Fact or Fiction: What Are the Different LLM Hallucination Types?

Our error analysis is general yet has specific implications for hallucination. It applies broadly, including to reasoning and search-and-retrieval language models, and it does not rely on properties of next-word prediction or transformer-based neural networks.

Tests across GPT-5, Claude, and Gemini compare use cases, hallucination types, and accuracy results.

In medicine, hallucinations are sensory experiences that occur in the absence of corresponding external stimuli. In the field of LLMs, hallucinations refer to the generation of false or fabricated information.

One paper offering insights into large language models challenges the common view of hallucination as a flaw in large language models (LLMs), proposing instead that it is an inherent feature of their architecture.

