AI Inference Memory System Tradeoffs

The chart below shows the total megabytes required in an inference chip for weights and activations for ResNet-50 and YOLOv3 at various image sizes. There are three choices for memory system implementation in AI inference chips.

A separate paper presents an initial systems-level characterization of the performance implications of retrieval-augmented generation (RAG). To guide the analysis, its authors construct a taxonomy of RAG systems based on recent RAG-based LLM literature.

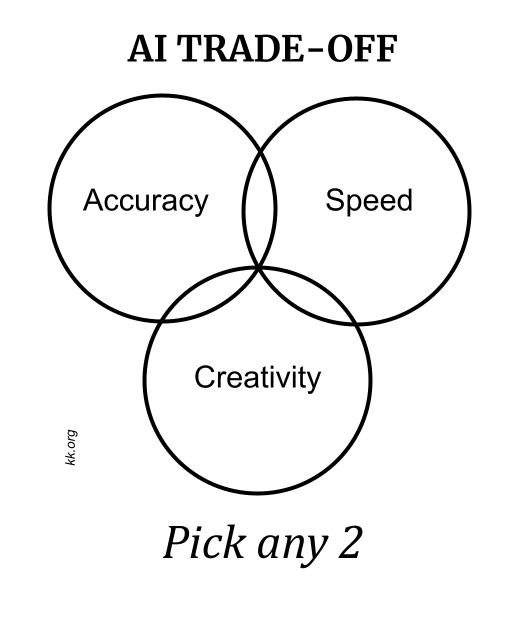
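To make the dependence on image size concrete, here is a minimal sizing sketch. It is not the method behind the chart: the parameter count is the commonly cited approximate figure for ResNet-50, INT8 storage (1 byte per value) is an assumption, and the function names are hypothetical. It shows that weight storage is fixed by the model, while the activation produced by a convolution layer grows with the pixel count of the input.

```python
# Rough sizing sketch. All constants here are illustrative assumptions.

RESNET50_PARAMS = 25_600_000  # commonly cited approximate parameter count


def weight_megabytes(num_params: int, bytes_per_weight: int = 1) -> float:
    """Weight storage in MB (10**6 bytes); 1 byte per weight assumes INT8."""
    return num_params * bytes_per_weight / 1e6


def conv_output_megabytes(height: int, width: int, out_channels: int,
                          stride: int, bytes_per_value: int = 1) -> float:
    """Size of one convolution layer's output activation in MB.

    Assumes 'same'-style padding, so each spatial dimension is divided
    by the stride (rounded up).
    """
    out_h = -(-height // stride)  # ceiling division
    out_w = -(-width // stride)
    return out_h * out_w * out_channels * bytes_per_value / 1e6


if __name__ == "__main__":
    print(f"weights: {weight_megabytes(RESNET50_PARAMS):.1f} MB")
    # First ResNet-50 convolution: 64 output channels, stride 2.
    for side in (224, 448, 896):
        act = conv_output_megabytes(side, side, out_channels=64, stride=2)
        print(f"{side}x{side} input -> first conv output: {act:.2f} MB")
```

Doubling the image side quadruples this layer's activation size while the weight figure stays constant, which is why activation storage tends to dominate at large image sizes.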

AI memory systems give agents cross-session recall. Coverage of the topic spans four memory types, the ingestion and eviction lifecycle, 2026 tools, and enterprise memory failures.

There are three choices for memory system implementation in AI inference chips, and most chips will have a combination of two or three of them in different ratios. The first is distributed local SRAM: it is a little less area-efficient because the overhead is shared across fewer bits, but keeping SRAM close to compute cuts latency, cuts power, and increases bandwidth.

Other work takes a deep dive into the computational economics of different AI memory approaches from an implementation standpoint. Google researchers have reported that memory and interconnect, not compute power, are the primary bottlenecks for LLM inference, with memory bandwidth lagging 4.7x behind.
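One common way to reason about whether a workload is limited by memory or by compute is a roofline-style check. The sketch below is a simplified illustration, not the analysis from the research mentioned above: the hardware figures (100 TFLOP/s peak, 1 TB/s memory bandwidth) are hypothetical placeholders, and the function names are made up for this example.

```python
def attainable_flops(peak_flops: float, mem_bandwidth: float,
                     intensity: float) -> float:
    """Roofline bound: throughput is capped by compute or by memory traffic.

    intensity is arithmetic intensity in FLOPs per byte moved from memory.
    """
    return min(peak_flops, mem_bandwidth * intensity)


def is_memory_bound(peak_flops: float, mem_bandwidth: float,
                    intensity: float) -> bool:
    """True when intensity is below the ridge point peak_flops / mem_bandwidth."""
    return intensity < peak_flops / mem_bandwidth


if __name__ == "__main__":
    PEAK = 100e12      # hypothetical: 100 TFLOP/s
    BANDWIDTH = 1e12   # hypothetical: 1 TB/s

    # Simplified decode step with 2-byte weights: each weight is read once
    # and used for 2 FLOPs per sequence, so intensity is about `batch`
    # FLOPs per byte (activation traffic is ignored in this sketch).
    for batch in (1, 16, 256):
        intensity = float(batch)
        bound = "memory" if is_memory_bound(PEAK, BANDWIDTH, intensity) else "compute"
        rate = attainable_flops(PEAK, BANDWIDTH, intensity) / 1e12
        print(f"batch={batch:>3}: {bound}-bound, up to {rate:.0f} TFLOP/s")
```

Under these assumed numbers, small batches sit far below the ridge point of 100 FLOPs per byte, so added compute would go unused while memory bandwidth sets the pace.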

AI has its own tradeoff between memory and meaning. Artificial intelligence systems, especially large language models and neural networks, face similar constraints: they can store vast amounts of data and retrieve it quickly, but that memory comes at the cost of contextual awareness and abstraction.

This article explores how fast inference architectures are evolving in 2026, focusing on the fundamental tradeoff between latency and throughput and on how modern AI systems are designed to balance responsiveness, efficiency, and cost at scale. AI agent memory can recognize patterns, preserve context, and adapt to changes, all of which are essential in strategic business planning.

In this article, I'll share the approaches I've learned over the last couple of years through research, offline benchmarking, runtime experiments, and building customer-facing AI tools.
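The tradeoff between latency and throughput can be illustrated with a toy batching model. This is a sketch under stated assumptions, not a description of any particular serving system: each batch is assumed to pay a fixed overhead plus a linear per-request cost, and the 10 ms and 1 ms figures are hypothetical.

```python
def step_latency_ms(batch_size: int, fixed_ms: float = 10.0,
                    per_request_ms: float = 1.0) -> float:
    """Time to process one batch: fixed overhead plus a linear per-request cost."""
    return fixed_ms + per_request_ms * batch_size


def throughput_rps(batch_size: int, fixed_ms: float = 10.0,
                   per_request_ms: float = 1.0) -> float:
    """Requests completed per second when batches run back to back."""
    latency_s = step_latency_ms(batch_size, fixed_ms, per_request_ms) / 1000.0
    return batch_size / latency_s


if __name__ == "__main__":
    for batch in (1, 8, 32, 128):
        print(f"batch={batch:>3}: latency={step_latency_ms(batch):6.1f} ms, "
              f"throughput={throughput_rps(batch):7.1f} req/s")
```

In this model larger batches amortize the fixed overhead, so throughput rises, but every request in the batch waits for the whole batch to finish, so latency rises as well. Choosing a batch size is therefore a choice between responsiveness and cost per request.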

