AI Hardware: Training, Inference, Devices, and Model Optimization

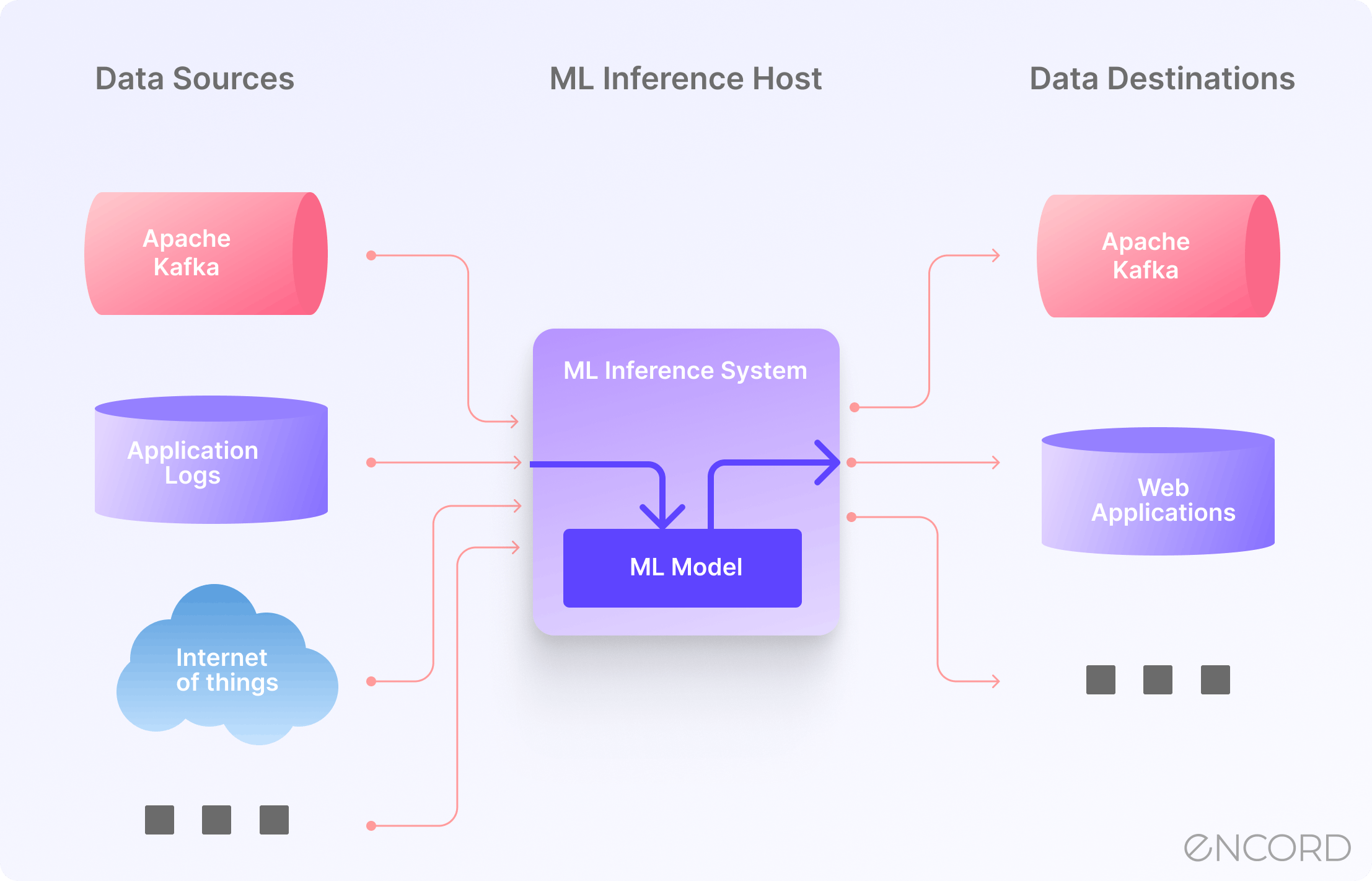

Model Inference in Machine Learning (Encord)

This article analyzes the hardware requirements for AI, focusing on key providers, recent research breakthroughs, and emerging trends shaping the future of AI. It covers how to choose hardware, frameworks, and configurations for inference, and the main optimization techniques: quantization, speculative decoding, KV caching, and parallelism. The material runs from prerequisites and model architecture through hardware, software, optimization techniques, multimodal inference, and production deployment.
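Of the optimization techniques listed above, quantization is the easiest to illustrate in a few lines. The following is a minimal sketch, assuming symmetric per-tensor int8 quantization; the function names `quantize_int8` and `dequantize` are hypothetical, and production frameworks typically use more refined schemes (per-channel scales, calibration data).

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    # One scale for the whole tensor; fall back to 1.0 for an all-zero tensor.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integers and the scale."""
    return [v * scale for v in quantized]


# Toy weights chosen for illustration only.
weights = [0.5, -1.27, 0.0, 1.0]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# Rounding error per weight is bounded by half a quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

The trade-off is that each weight is stored in 8 bits instead of 32, at the cost of a rounding error of at most half a quantization step per weight.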

Chris Hay on LinkedIn: AI Hardware Training Inference Devices And ...

By exploring the hardware requirements for AI training and inference, this article provides a roadmap for understanding the current state and future trajectory of AI hardware.

First, we provide an overview of the algorithm architecture of mainstream generative LLMs and delve into the inference process. Then, we summarize optimization methods for different platforms such as CPU, GPU, FPGA, ASIC, and PIM/NDP, and provide inference results for generative LLMs.

Let us delve deeper into AI inference and its applications, the role of software optimization, and how CPUs, particularly Intel® CPUs with built-in AI acceleration, deliver optimal AI inference performance, while looking at a few interesting use case examples.

AI hardware consists of specialized processors for AI inference and model training. We analyzed major AI chip manufacturers, benchmarking the latest-generation AI chips in cloud and serverless environments with different LLMs.
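To make the generative LLM inference process more concrete, here is a minimal sketch of autoregressive decoding with and without a key/value (KV) cache. It assumes a toy single-head attention layer in pure Python; the weight matrices `W_Q`, `W_K`, `W_V`, the token vectors, and all function names are made up for illustration and do not come from any particular model or library.

```python
import math


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * x for m, x in zip(row, vector)) for row in matrix]


def attend(query, keys, values):
    """Scaled dot-product attention for one query over all cached positions."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    top = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    probs = [e / total for e in exps]
    dim = len(values[0])
    return [sum(p * v[i] for p, v in zip(probs, values)) for i in range(dim)]


# Hypothetical 2x2 projection matrices (illustrative values only).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.9]]
W_V = [[1.0, -1.0], [0.3, 0.7]]


def decode_without_cache(tokens):
    """Recompute keys and values for the whole prefix at every step."""
    outputs = []
    for t in range(len(tokens)):
        prefix = tokens[: t + 1]
        keys = [matvec(W_K, x) for x in prefix]
        values = [matvec(W_V, x) for x in prefix]
        outputs.append(attend(matvec(W_Q, tokens[t]), keys, values))
    return outputs


def decode_with_cache(tokens):
    """Project each token once and append its key and value to the cache."""
    keys, values, outputs = [], [], []
    for x in tokens:
        keys.append(matvec(W_K, x))
        values.append(matvec(W_V, x))
        outputs.append(attend(matvec(W_Q, x), keys, values))
    return outputs


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
uncached = decode_without_cache(tokens)
cached = decode_with_cache(tokens)
```

Both functions produce the same outputs. The cached version performs one key and one value projection per token, while the uncached version repeats them for every earlier position at each step, which is why caching trades memory for compute during generation.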

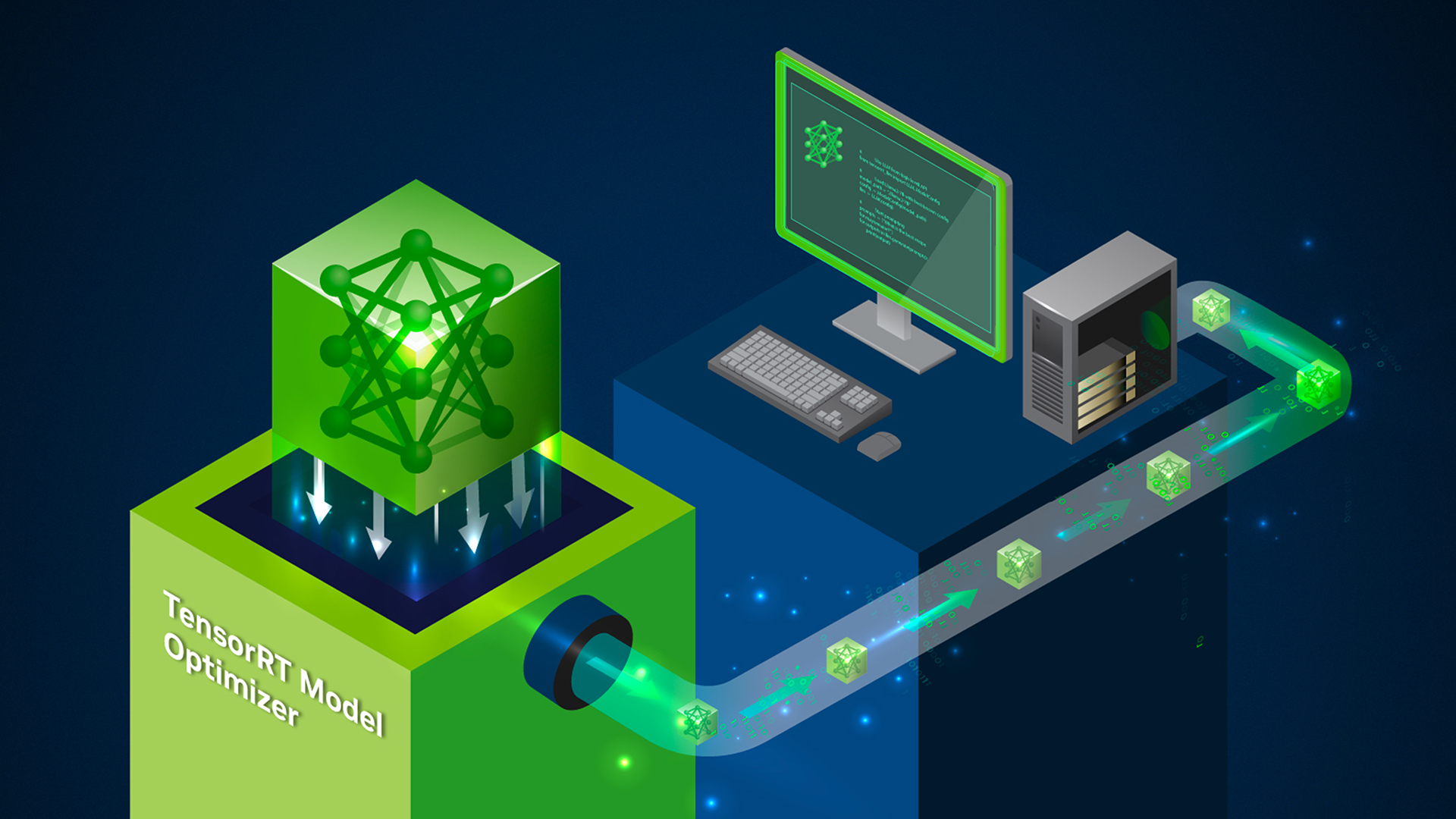

Top 5 AI Model Optimization Techniques for Faster, Smarter Inference

This blog post explores the various types of AI hardware, their impact on training speed and inference efficiency, notable case studies, future trends, and the challenges faced in this domain.

On-device training with ONNX Runtime lets developers take an inference model and train it locally to deliver a more personalized and privacy-respecting experience for customers.

The goal of this project is to develop a general method that can train many different types of neural networks, and to demonstrate and evaluate their performance on emerging hardware. We define a standard inference model for phase-change memory (PCM) based analog in-memory computing (AIMC) that can readily be extended to other types of non-volatile memory (NVM) devices.
