AI Chips Ugpcb

An AI chip, formally termed an artificial-intelligence-specific integrated circuit, is a hardware accelerator designed to speed up machine learning workloads such as deep learning and reinforcement learning. Adapted from a section of a report by Erich Grunewald and Christopher Phenicie, this blog post introduces the core concepts and background information needed to understand the AI chip making process.

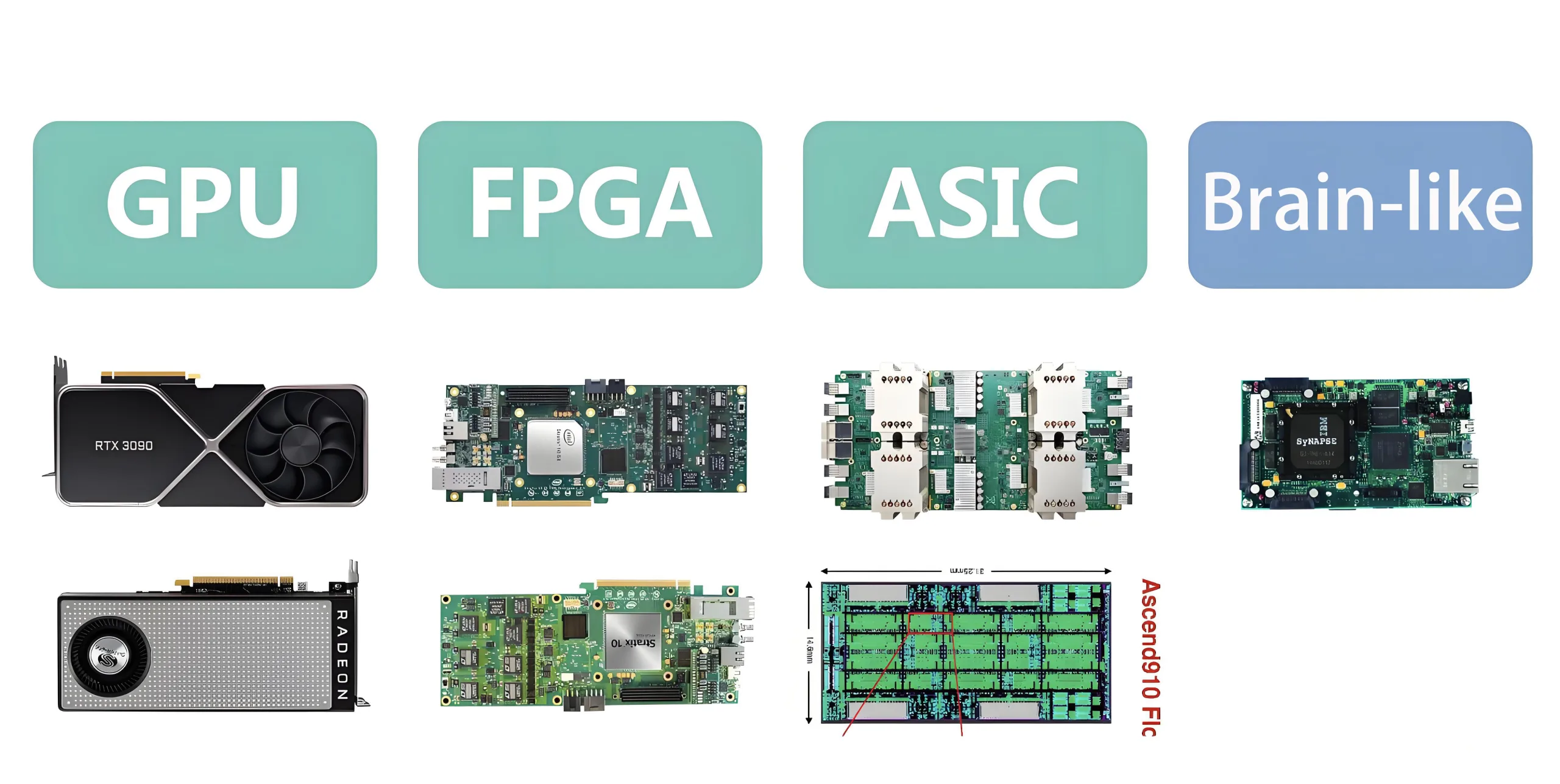

AI chips are built to supply the computing power that AI workloads require. AI applications need a tremendous amount of compute, which general-purpose devices such as CPUs usually cannot offer at scale. Our report, Introduction to AI Chip Making in China, contains more detail on AI chip making, especially as it relates to Chinese efforts to develop better domestic AI chips. The term “AI chip” is broad and covers many kinds of chips designed for the demanding compute environments that AI tasks require. Popular examples include graphics processing units (GPUs), field-programmable gate arrays (FPGAs), and application-specific integrated circuits (ASICs). AI chips are a kind of logic chip, able to process the large volumes of data that AI workloads involve. Their transistors are typically smaller and many times more efficient than those in standard chips, which gives them faster processing and a smaller energy footprint.

The main difference between AI chips and traditional chips lies in their architecture. Conventional chips such as CPUs are designed to perform a wide range of tasks largely sequentially, whereas AI chips excel at parallel processing, which is the ability to handle many operations simultaneously. That capability is what makes them suited to running deep neural networks and other complex AI algorithms. To understand how AI chips work, it helps to look more closely at their specialized architectures, parallel processing capabilities, key architectural components, software, and energy efficiency. Such leading-edge, specialized AI chips are essential for implementing AI cost-effectively at scale; trying to deliver the same AI application using older AI chips or general-purpose chips can cost tens to thousands of times more.
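The contrast between sequential and parallel processing can be made concrete with a small sketch. The example below is an illustration only, not how any particular chip is programmed: it computes a matrix-vector product (the multiply-accumulate pattern at the heart of neural-network layers) first one row at a time, then by handing rows to a pool of workers. The function names, the `workers` parameter, and the sample data are all hypothetical. Note that in standard CPython, threads do not actually speed up this arithmetic; the point is only that no output element depends on another, which is the property parallel hardware exploits.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vec):
    """Multiply-accumulate over one row: the basic neural-network operation."""
    return sum(a * b for a, b in zip(row, vec))


def matvec_sequential(matrix, vec):
    """Compute output elements one after another, as a single core would."""
    result = []
    for row in matrix:
        result.append(dot(row, vec))
    return result


def matvec_parallel(matrix, vec, workers=4):
    """Hand each row to a worker; no row needs any other row's result."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map preserves input order, so results line up with the rows.
        return list(pool.map(lambda row: dot(row, vec), matrix))


# Hypothetical sample data: a 4x3 weight matrix and a 3-element input.
weights = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [10, 11, 12]]
inputs = [1, 0, -1]

sequential = matvec_sequential(weights, inputs)
parallel = matvec_parallel(weights, inputs)
print(sequential)
print(parallel == sequential)
```

Both versions produce the same result. Because the rows are independent, the work can be spread over as many execution units as are available, and an AI chip has far more of them than a CPU has cores.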
