AI Agents Memory Design Optimization Techniques

Tutorial A Practical Deep Dive Into Memory Optimization For Agentic

In this blog, we will code and evaluate 9 beginner-to-advanced memory optimization techniques for AI agents. You will learn how to apply each technique, along with its advantages and drawbacks, from simple sequential approaches to advanced, OS-like memory management implementations.
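As a point of reference for the "simple sequential" end of that range, here is a minimal sketch of sequential memory, assuming it means replaying the full conversation history on every call. The class name `SequentialMemory` and the whitespace-based token estimate are hypothetical choices for illustration, not taken from the tutorial.

```python
from dataclasses import dataclass, field


@dataclass
class SequentialMemory:
    """Hypothetical baseline: keeps every message verbatim, so the prompt grows each turn."""

    messages: list = field(default_factory=list)

    def add(self, role: str, content: str) -> None:
        self.messages.append({"role": role, "content": content})

    def context(self) -> list:
        # The whole history is replayed to the model on every call.
        return list(self.messages)

    def approx_tokens(self) -> int:
        # Crude whitespace word count, a stand-in for a real tokenizer.
        return sum(len(m["content"].split()) for m in self.messages)


memory = SequentialMemory()
memory.add("user", "My name is Sam and I like hiking.")
memory.add("assistant", "Nice to meet you, Sam.")
memory.add("user", "What is my name?")
print(len(memory.context()), memory.approx_tokens())
```

The advantage is that nothing is ever forgotten. The drawback is that the context size, and therefore cost and latency, grows with every turn, which is what the more advanced techniques try to control.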

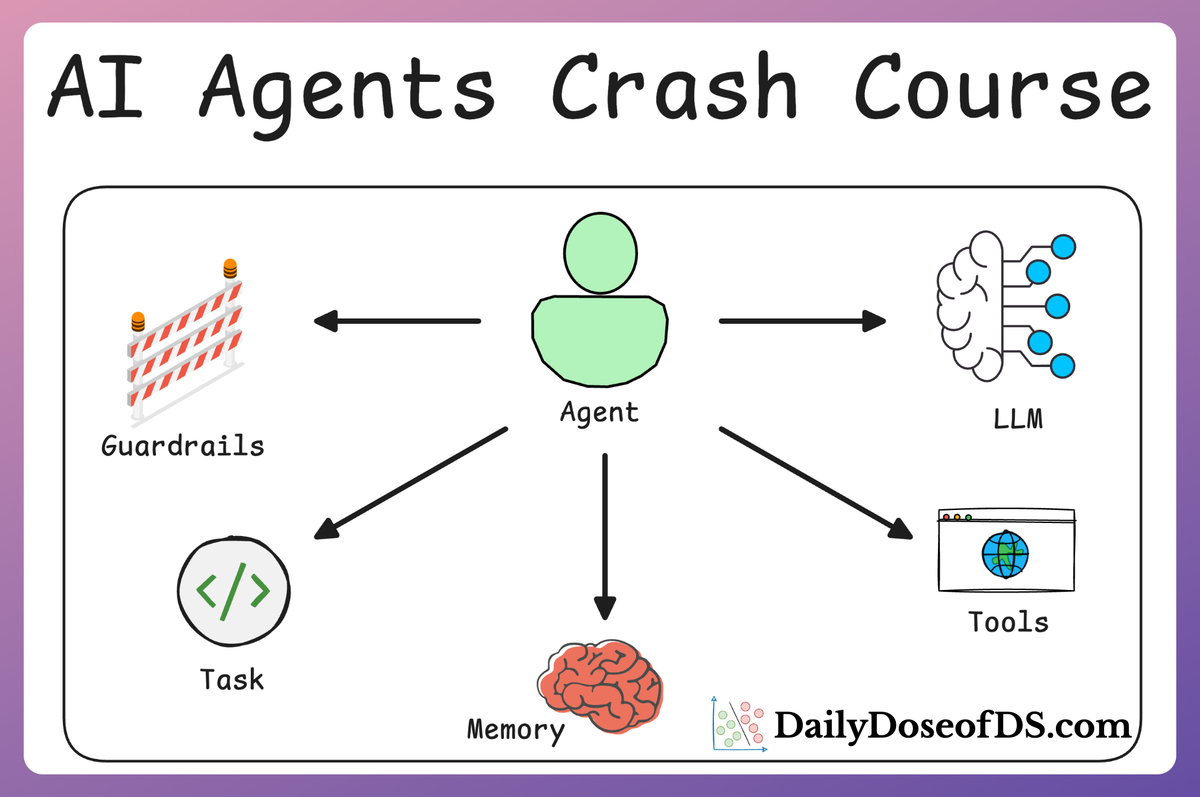

Managing Short Term Memory In AI Agents Efficient Techniques For

This article explores 9 powerful techniques to optimize memory in AI agents, helping developers and researchers design smarter, more scalable AI systems. A practical tutorial on implementing long-term and short-term memory systems in AI agents using the LangChain, Pydantic AI, and Agno frameworks for production applications. Learn how to optimize memory for AI agents, understand the difference between memory, knowledge, and tools, and see why context management is crucial for production agent systems. We have added Part 16 and Part 17 to our AI agents crash course, where we use LangGraph to implement 6 production-grade memory optimization techniques in agentic workflows.
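The split between short-term and long-term memory can be illustrated with a framework-free sketch. It does not use the LangChain, Pydantic AI, Agno, or LangGraph APIs. The `AgentMemory` class and its methods are hypothetical, and the word-overlap ranking is a toy stand-in for the embedding similarity a production system would more likely use.

```python
from collections import deque


class AgentMemory:
    """Hypothetical two-tier memory: recent turns plus a persistent fact store."""

    def __init__(self, short_term_size: int = 4) -> None:
        self.short_term = deque(maxlen=short_term_size)  # recent turns only
        self.long_term = []  # facts kept across sessions

    def add_turn(self, role: str, content: str) -> None:
        # Once the deque is full, the oldest turn is dropped automatically.
        self.short_term.append((role, content))

    def remember(self, fact: str) -> None:
        if fact not in self.long_term:
            self.long_term.append(fact)

    def recall(self, query: str, k: int = 2) -> list:
        # Rank stored facts by word overlap with the query (toy similarity).
        words = set(query.lower().split())
        scored = [(len(words & set(f.lower().split())), f) for f in self.long_term]
        scored = [s for s in scored if s[0] > 0]
        scored.sort(key=lambda s: s[0], reverse=True)
        return [fact for _, fact in scored[:k]]

    def build_context(self, query: str) -> str:
        facts = "\n".join(f"- {f}" for f in self.recall(query))
        turns = "\n".join(f"{role}: {text}" for role, text in self.short_term)
        return f"Known facts:\n{facts}\n\nRecent turns:\n{turns}"


memory = AgentMemory(short_term_size=2)
memory.remember("The user prefers vegetarian food")
memory.remember("The user lives in Lisbon")
memory.add_turn("user", "Hi")
memory.add_turn("assistant", "Hello!")
memory.add_turn("user", "Suggest a vegetarian dinner")
print(memory.build_context("Suggest a vegetarian dinner"))
```

Only the relevant long-term fact and the two most recent turns reach the prompt, which is the basic idea behind context management: the agent decides what to include rather than sending everything.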

How To Enhance AI Agents Memory With Memary And FalkorDB

This article covered nine memory optimization techniques for AI agents, ranging from simple approaches like sliding windows to advanced methods inspired by operating system memory management. In this article, you will learn how to design, implement, and evaluate memory systems that make agentic AI applications more reliable, personalized, and effective over time. Memory optimization for LLMs reduces peak and steady-state memory by combining quantization (weights and KV cache), efficient attention (paged KV cache), activation (gradient) checkpointing, sharding and offloading, and context compression. Learn the current models for generating, storing, and retrieving these memories to provide better results from AI agents. Explore tools and techniques like semantic caching, vector embeddings, checkpointing, and long-term memory design to optimize speed, accuracy, and cost.
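The sliding-window approach mentioned above can be sketched in a few lines. This is a minimal illustration, assuming the window is counted in messages rather than tokens. The `SlidingWindowMemory` name is hypothetical and not tied to any of the articles or libraries named here.

```python
from collections import deque


class SlidingWindowMemory:
    """Hypothetical sketch: keeps only the most recent `window_size` messages."""

    def __init__(self, window_size: int = 4) -> None:
        # A deque with maxlen discards the oldest item when a new one is appended.
        self.window = deque(maxlen=window_size)

    def add(self, role: str, content: str) -> None:
        self.window.append({"role": role, "content": content})

    def context(self) -> list:
        return list(self.window)


memory = SlidingWindowMemory(window_size=4)
for turn in range(6):
    memory.add("user", f"turn {turn}")

print([m["content"] for m in memory.context()])
```

The context size stays bounded no matter how long the conversation runs, at the cost of forgetting anything older than the window. That trade-off is why the more advanced techniques pair a window with summarization or retrieval.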
