Agentic AI Evaluation Framework

GitHub: Gokulan006, Agentic AI Evaluation Framework

By evaluating tool utilisation, memory management, strategic planning, and component integration, AAEF enables developers, researchers, and stakeholders to identify strengths and areas for improvement in their agentic AI applications.

To address these challenges, we propose a holistic agentic AI evaluation framework, as shown in the following figure. The framework contains two key components: an automated AI agent evaluation workflow and an AI agent evaluation library.

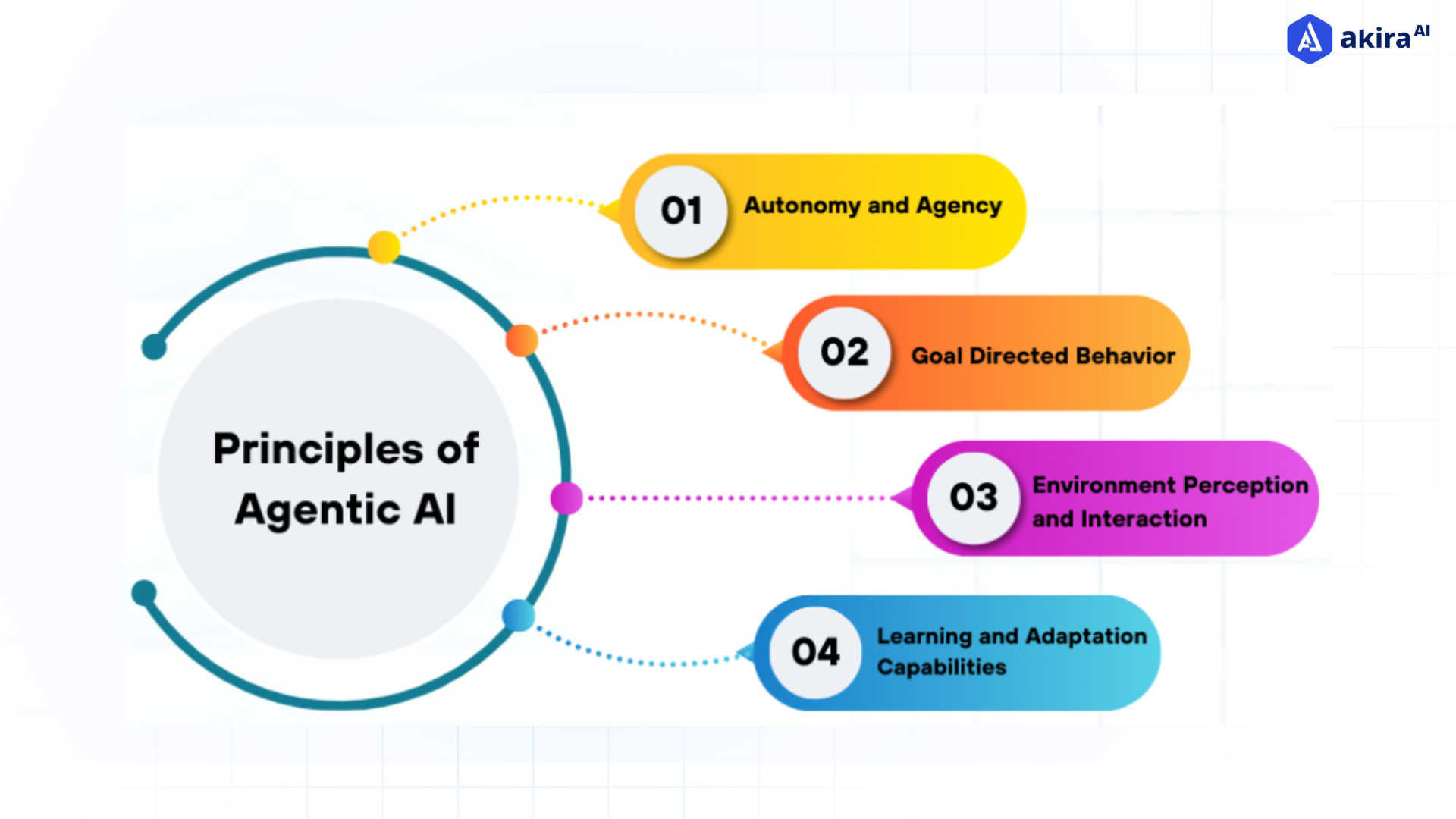
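As a rough illustration of how the four aspects named above (tool utilisation, memory management, strategic planning, component integration) could be turned into a report that surfaces strengths and areas for improvement, here is a minimal Python sketch. It is not AAEF's actual API: the `RunScore` class, the `summarise` function, the 0 to 1 score scale, and the 0.7 threshold are all assumptions made for this example.

```python
from dataclasses import dataclass
from statistics import mean

# Aspect names come from the text above; everything else here is hypothetical.
ASPECTS = (
    "tool_utilisation",
    "memory_management",
    "strategic_planning",
    "component_integration",
)


@dataclass
class RunScore:
    """Scores for one agent run: one value in [0, 1] per aspect (assumed scale)."""
    scores: dict[str, float]


def summarise(runs: list[RunScore], threshold: float = 0.7) -> dict[str, dict]:
    """Average each aspect over all runs and flag aspects below the threshold."""
    report = {}
    for aspect in ASPECTS:
        avg = mean(run.scores[aspect] for run in runs)
        report[aspect] = {"mean": round(avg, 3), "needs_work": avg < threshold}
    return report


# Made-up scores for two runs, purely to show the shape of the output.
runs = [
    RunScore({"tool_utilisation": 0.9, "memory_management": 0.5,
              "strategic_planning": 0.8, "component_integration": 0.7}),
    RunScore({"tool_utilisation": 0.7, "memory_management": 0.6,
              "strategic_planning": 0.9, "component_integration": 0.8}),
]
report = summarise(runs)
for aspect, row in report.items():
    print(aspect, row)
```

Keeping one score per aspect, rather than a single blended number, makes it easier to see which part of the agent is the weak point.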

This guide covers a practical framework for evaluating agent performance across four dimensions that determine production readiness. You'll see what to measure, which evaluation methods fit different use cases, and how to build an evaluation pipeline that catches problems before they reach users.

We will explore multiple frameworks for understanding agents (from classical AI agent types to modern implementation and autonomy levels) and how these dimensions intersect.

The goal of this paper is twofold: (1) to synthesise existing evaluation practices for agentic AI and identify their strengths and limitations, and (2) to propose a balanced evaluation framework that integrates performance, robustness, safety, human factors, and economic sustainability.

Many teams combine multiple tools, roll their own eval framework, or just use simple evaluation scripts as a starting point. We find that while frameworks can be a valuable way to accelerate progress and standardize, they're only as good as the eval tasks you run through them.

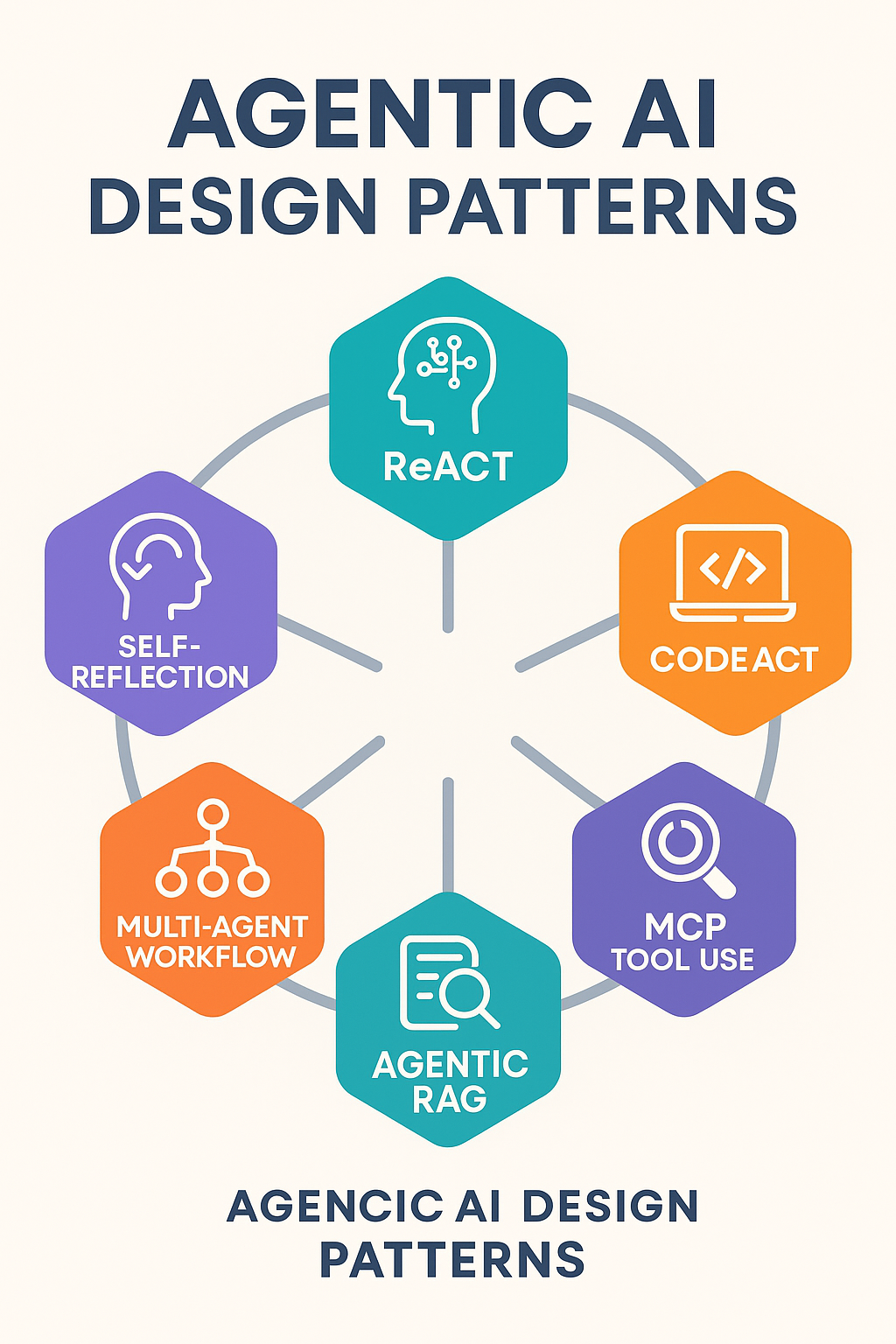
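To make the idea of a "simple evaluation script" concrete, the sketch below shows one possible starting point in Python: a list of eval tasks, each with its own grader, run against an agent to produce a pass rate. None of the names (`EvalTask`, `run_evals`, `toy_agent`) come from the sources quoted here; they are hypothetical, and the toy agent is only a stand-in for a real agent call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class EvalTask:
    """One eval task: a prompt plus a grader that judges the agent's output."""
    name: str
    prompt: str
    grader: Callable[[str], bool]  # returns True when the output passes


def run_evals(agent: Callable[[str], str], tasks: list[EvalTask]) -> dict:
    """Run every task through the agent and collect pass/fail results."""
    results = {}
    for task in tasks:
        try:
            output = agent(task.prompt)
            results[task.name] = task.grader(output)
        except Exception:
            # A crash counts as a failure so that one bad task
            # does not abort the whole run.
            results[task.name] = False
    pass_rate = sum(results.values()) / len(tasks) if tasks else 0.0
    return {"results": results, "pass_rate": pass_rate}


def toy_agent(prompt: str) -> str:
    """Stand-in for a real agent (assumption): answers one question only."""
    if "2 + 2" in prompt:
        return "4"
    return "I don't know"


tasks = [
    EvalTask("arithmetic", "What is 2 + 2?", lambda out: out.strip() == "4"),
    EvalTask("capital", "What is the capital of France?", lambda out: "Paris" in out),
]
report = run_evals(toy_agent, tasks)
print(report)
```

The harness itself is deliberately thin. As the text notes, the value lies in the tasks and graders, so most of the effort belongs in growing and curating the `tasks` list.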

Building Agentic AI Framework Architecture Key Components

The evaluation framework diagram below illustrates the architecture of our approach, highlighting its key components and their interactions. We designed it to address the unique challenges of evaluating LOB agents, ensuring scalability, reproducibility, and actionable insights.

To understand these new capabilities, let's walk through an example of building a high-quality agentic application using Agent Framework and improving its quality using Agent Evaluation.

This section provides comprehensive coverage of evaluation frameworks, benchmarks, and platforms for assessing the performance and capabilities of agentic AI systems. We organized the conceptual foundations, available tools, architectures, and evaluation metrics in this research, which defines a structured foundation for understanding and advancing agentic AI.

AI Agent Evaluation Framework from Apple, by Cobus Greyling (Medium)
