Adam Optimization Algorithm Explained Visually Deep Learning 13

In this video, you'll learn how Adam makes gradient descent faster, smoother, and more reliable by combining the strengths of momentum and RMSProp in a single optimizer. It covers the Adam optimizer from its definition and the underlying math, through an algorithm walkthrough, a visual comparison, and an implementation, to its advantages and disadvantages compared with other optimizers.

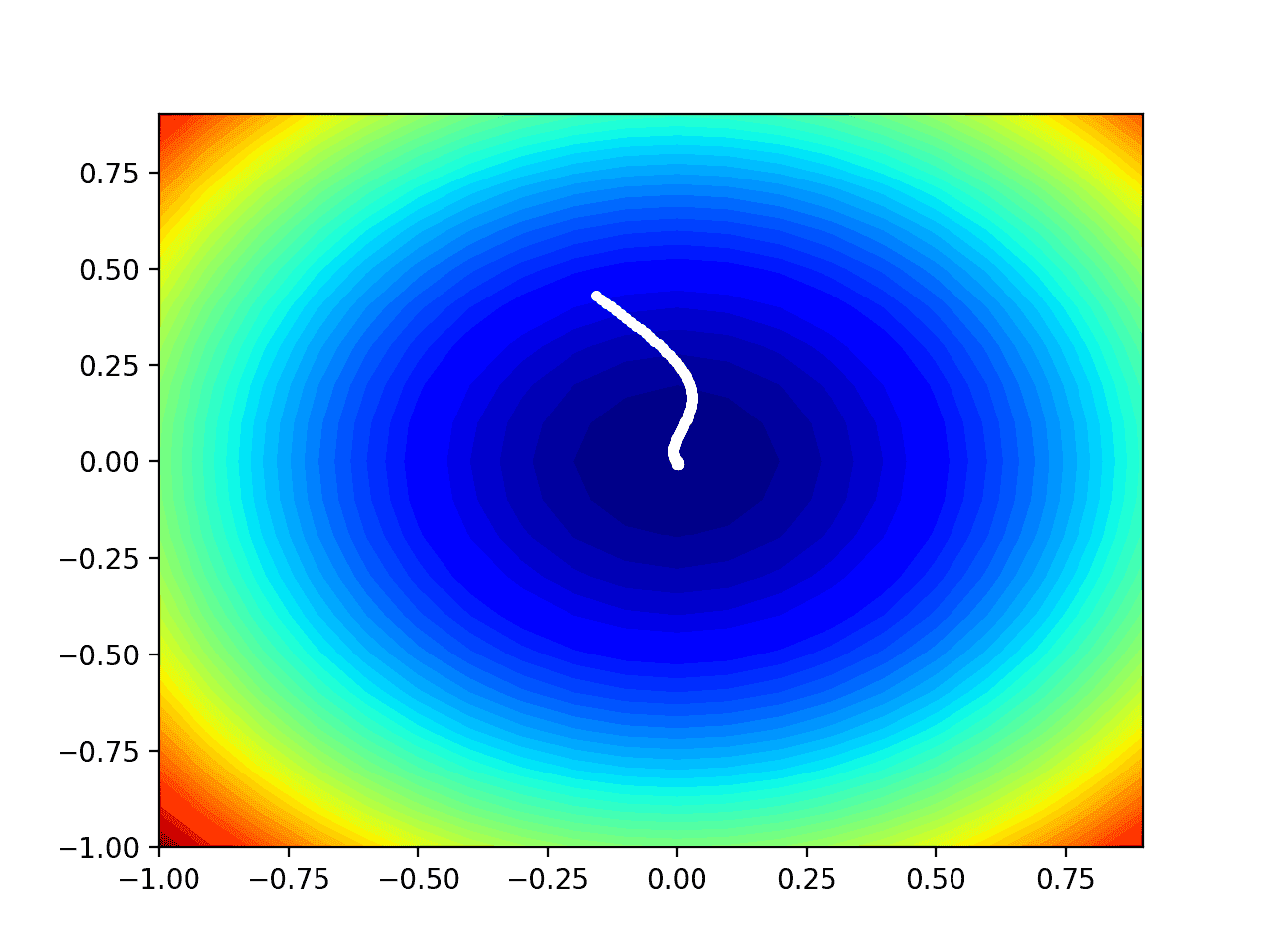

Adam (adaptive moment estimation) is an extension of stochastic gradient descent that is used across deep learning applications such as computer vision and natural language processing. It combines the advantages of the momentum and RMSProp techniques to adjust learning rates during training. It works well with large datasets and complex models because it uses memory efficiently and adapts the learning rate for each parameter automatically. Because of its fast convergence and robustness across problems, Adam is a common default optimizer for deep learning. Our expert explains how it works.

Optimization on non-convex functions in high-dimensional spaces, like those encountered in deep learning, can be hard to visualize. However, we can learn a lot from visualizing optimization paths on simple 2D non-convex functions.
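To make the idea of an optimization path concrete, here is a minimal NumPy sketch that traces Adam on a 2D non-convex surface. The test function `loss`, its gradient `grad`, the helper `adam_path`, the starting point, and the step count are all hypothetical choices made for illustration; none of them come from the article. The hyperparameter defaults (`beta1=0.9`, `beta2=0.999`, `eps=1e-8`) are the commonly used ones, and the learning rate is simply a value that behaves reasonably on this toy surface.

```python
import numpy as np


def loss(p):
    """Hypothetical 2D non-convex test function (chosen for illustration)."""
    x, y = p
    return np.sin(3.0 * x) * np.cos(3.0 * y) + 0.5 * (x**2 + y**2)


def grad(p):
    """Analytic gradient of `loss` with respect to (x, y)."""
    x, y = p
    return np.array([
        3.0 * np.cos(3.0 * x) * np.cos(3.0 * y) + x,
        -3.0 * np.sin(3.0 * x) * np.sin(3.0 * y) + y,
    ])


def adam_path(start, lr=0.05, beta1=0.9, beta2=0.999, eps=1e-8, steps=200):
    """Run Adam from `start` and return every visited point as an array."""
    p = np.array(start, dtype=float)
    m = np.zeros_like(p)  # first moment: running mean of gradients (momentum part)
    v = np.zeros_like(p)  # second moment: running mean of squared gradients (RMSProp part)
    path = [p.copy()]
    for t in range(1, steps + 1):
        g = grad(p)
        m = beta1 * m + (1 - beta1) * g
        v = beta2 * v + (1 - beta2) * g**2
        # Bias correction: m and v start at zero, so early estimates are too small.
        m_hat = m / (1 - beta1**t)
        v_hat = v / (1 - beta2**t)
        # Per-parameter step: each coordinate is scaled by its own gradient history.
        p = p - lr * m_hat / (np.sqrt(v_hat) + eps)
        path.append(p.copy())
    return np.array(path)


path = adam_path(start=(1.5, 1.0))
print("start loss:", loss(path[0]))
print("final loss:", loss(path[-1]))
```

Plotting `path[:, 0]` against `path[:, 1]` over a contour plot of `loss` gives the kind of 2D trajectory picture described above. One detail worth noticing is that, because of bias correction, the very first step has a magnitude close to `lr` in each coordinate, regardless of how large the raw gradient is.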

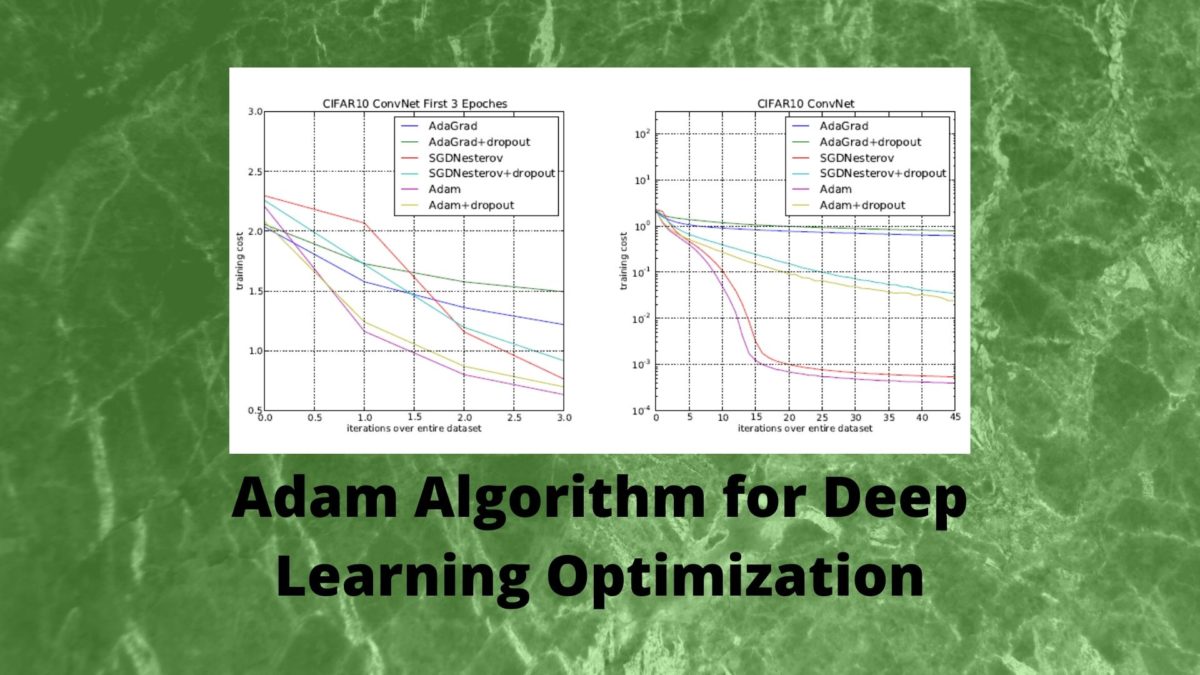

This section takes a detailed look at the adaptive moment estimation (Adam) optimizer, which incorporates first and second moment estimates of the gradient. In machine learning, Adam stands out as a highly efficient optimization algorithm designed to adjust the learning rate of each parameter individually. Finding good parameters for a model is a journey, and this is where optimization algorithms come in handy: Adam is like having a smart flashlight along the way. It is a popular optimization technique, especially in deep learning, and in this article you'll see why this is the case.
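For reference, the first and second moment estimates are usually written as follows. The notation is an assumption here, since the article does not define symbols: $g_t$ is the gradient at step $t$, $\theta$ the parameters, $\alpha$ the learning rate, $\beta_1$ and $\beta_2$ the decay rates, and $\epsilon$ a small constant for numerical stability. The square and the division are applied element-wise, and $m_0 = v_0 = 0$.

```latex
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1 - \beta_1)\, g_t
  && \text{(first moment: running mean of gradients)} \\
v_t &= \beta_2 v_{t-1} + (1 - \beta_2)\, g_t^{2}
  && \text{(second moment: running mean of squared gradients)} \\
\hat{m}_t &= \frac{m_t}{1 - \beta_1^{t}}, \qquad
\hat{v}_t = \frac{v_t}{1 - \beta_2^{t}}
  && \text{(bias correction)} \\
\theta_t &= \theta_{t-1} - \alpha\, \frac{\hat{m}_t}{\sqrt{\hat{v}_t} + \epsilon}
  && \text{(parameter update)}
\end{aligned}
```

The first line is the momentum-style average, the second is the RMSProp-style average, and the final division is what gives each parameter its own effective learning rate. Commonly used defaults are $\beta_1 = 0.9$, $\beta_2 = 0.999$, and $\epsilon = 10^{-8}$.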
