4.2 Multivariable Calculus: Numerical Methods for Machine Learning

A multivariate function takes two or more variables as inputs and produces a single output. In general, a multivariate function maps from $\mathbb{R}^n$ to $\mathbb{R}$: $f(x_1, x_2, \ldots, x_n)$. This article covers the key concepts of multivariable calculus that are pertinent to machine learning, including partial derivatives, gradient vectors, the Hessian matrix, and optimization techniques.
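As a rough illustration of partial derivatives, the gradient vector, and the Hessian matrix, the sketch below estimates all three numerically with central finite differences. The test function `f`, the helper names `gradient` and `hessian`, and the step sizes `h` are assumptions chosen for this example and do not come from the sources quoted here.

```python
from typing import Callable, List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def f(x: Sequence[float]) -> float:
    """Hypothetical test function f(x1, x2) = x1^2 + 3*x1*x2 + 2*x2^2."""
    return x[0] ** 2 + 3.0 * x[0] * x[1] + 2.0 * x[1] ** 2


def gradient(func: Callable[[Sequence[float]], float],
             x: Sequence[float], h: float = 1e-5) -> Vector:
    """Estimate each partial derivative with a central difference."""
    grad = []
    for i in range(len(x)):
        forward, backward = list(x), list(x)
        forward[i] += h   # nudge only coordinate i up
        backward[i] -= h  # and down, holding the others fixed
        grad.append((func(forward) - func(backward)) / (2.0 * h))
    return grad


def hessian(func: Callable[[Sequence[float]], float],
            x: Sequence[float], h: float = 1e-4) -> Matrix:
    """Estimate the matrix of second partial derivatives."""
    n = len(x)
    hess = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            pp, pm, mp, mm = list(x), list(x), list(x), list(x)
            pp[i] += h
            pp[j] += h
            pm[i] += h
            pm[j] -= h
            mp[i] -= h
            mp[j] += h
            mm[i] -= h
            mm[j] -= h
            # Four-point central-difference stencil for d^2 f / (dx_i dx_j).
            hess[i][j] = (func(pp) - func(pm) - func(mp) + func(mm)) / (4.0 * h * h)
    return hess


point = [1.0, 2.0]
print(gradient(f, point))
print(hessian(f, point))
```

For this particular `f`, the analytic gradient is $(2x_1 + 3x_2,\ 3x_1 + 4x_2)$, which is $(8, 11)$ at the point $(1, 2)$, and the analytic Hessian is the constant matrix with rows $(2, 3)$ and $(3, 4)$. The printed estimates should agree with these values up to floating-point round-off.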

This course covers differential, integral, and vector calculus for functions of more than one variable. These mathematical tools and methods are used extensively in the physical sciences, engineering, economics, and computer graphics.

The Mathematics for Machine Learning: Multivariate Calculus repository explores the fundamental concepts of multivariate calculus that power modern machine learning algorithms, particularly neural networks.

This course offers a brief introduction to the multivariate calculus required to build many common machine learning techniques. We start at the very beginning with a refresher on the “rise over run” formulation of a slope, before converting this to the formal definition of the gradient of a function. This brings us to one of the most important mathematical concepts in machine learning: the direction of steepest descent is $-\nabla_w \ell(w)$.
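One common way to turn the steepest-descent direction into an algorithm is plain gradient descent: repeatedly move the weights a small step against the gradient of the loss. The sketch below is a minimal version under stated assumptions. The quadratic `loss`, its hand-derived gradient `loss_grad`, the `learning_rate`, and the step count are all hypothetical choices for illustration, not the algorithm from the quoted source.

```python
from typing import Callable, List, Sequence

Vector = List[float]


def loss(w: Sequence[float]) -> float:
    """Hypothetical loss l(w) = (w1 - 3)^2 + 2*(w2 + 1)^2, minimized at (3, -1)."""
    return (w[0] - 3.0) ** 2 + 2.0 * (w[1] + 1.0) ** 2


def loss_grad(w: Sequence[float]) -> Vector:
    """Analytic gradient of the hypothetical loss above."""
    return [2.0 * (w[0] - 3.0), 4.0 * (w[1] + 1.0)]


def gradient_descent(grad: Callable[[Sequence[float]], Vector],
                     w0: Sequence[float],
                     learning_rate: float = 0.1,
                     steps: int = 200) -> Vector:
    """Repeat the update w <- w - learning_rate * grad(w) a fixed number of times."""
    w = list(w0)
    for _ in range(steps):
        g = grad(w)
        # Step against the gradient, i.e. along the steepest-descent direction.
        w = [wi - learning_rate * gi for wi, gi in zip(w, g)]
    return w


w_final = gradient_descent(loss_grad, [0.0, 0.0])
print(w_final, loss(w_final))
```

With this learning rate, each coordinate's distance to the minimizer shrinks by a constant factor per step, so the iterates approach $(3, -1)$. A learning rate that is too large for the curvature of the loss would make the same loop diverge.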

We have to minimize the linear approximation of the objective within a radius, since a linear function is otherwise unbounded. For $\ell_2$-bounded steps (i.e. $\|x - x_0\|_2 \le \alpha$), this is equivalent to gradient descent. For other norms, this is called steepest descent with respect to the $\ell_p$ norm.

Master essential multivariate calculus concepts for machine learning, from gradients to optimization techniques. This document provides a summary of key concepts in multivariate calculus and optimization that are important for machine learning, including definitions of derivatives, rules for computing derivatives, Taylor series, neural network activation functions, vector calculus concepts like gradients and Hessians, and optimization techniques.

In this module, we will derive the formal expression for the univariate Taylor series and discuss some important consequences of this result relevant to machine learning. Finally, we will discuss the multivariate case and see how the Jacobian and the Hessian come into play.
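The first-order Taylor expansion $f(x_0 + d) \approx f(x_0) + \nabla f(x_0)^\top d$ is the linear function that the radius-constrained minimization above refers to. Minimizing it over $\|d\|_p \le \alpha$ has a closed-form answer for a few common norms, sketched below. The function name `steepest_step` and the string labels `"l2"`, `"linf"`, and `"l1"` are assumptions made for this example.

```python
import math
from typing import List, Sequence


def _sign(value: float) -> float:
    """Return -1.0, 0.0, or 1.0 according to the sign of value."""
    if value == 0.0:
        return 0.0
    return math.copysign(1.0, value)


def steepest_step(grad: Sequence[float], alpha: float, norm: str) -> List[float]:
    """Step d minimizing grad . d subject to ||d|| <= alpha in the given norm."""
    n = len(grad)
    if norm == "l2":
        size = math.sqrt(sum(g * g for g in grad))
        if size == 0.0:
            return [0.0] * n
        # Normalized negative gradient, scaled to the radius alpha.
        return [-alpha * g / size for g in grad]
    if norm == "linf":
        # Every coordinate moves by alpha against the sign of its partial derivative.
        return [-alpha * _sign(g) for g in grad]
    if norm == "l1":
        # The whole budget alpha goes to the coordinate with the largest |partial|.
        k = max(range(n), key=lambda i: abs(grad[i]))
        step = [0.0] * n
        step[k] = -alpha * _sign(grad[k])
        return step
    raise ValueError(f"unsupported norm: {norm}")


g = [3.0, -4.0]
for name in ("l2", "linf", "l1"):
    print(name, steepest_step(g, 0.5, name))
```

The $\ell_2$ case moves along the negative gradient, which is why it coincides with a gradient descent step. The $\ell_\infty$ case gives a sign-based update, and the $\ell_1$ case updates a single coordinate at a time.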

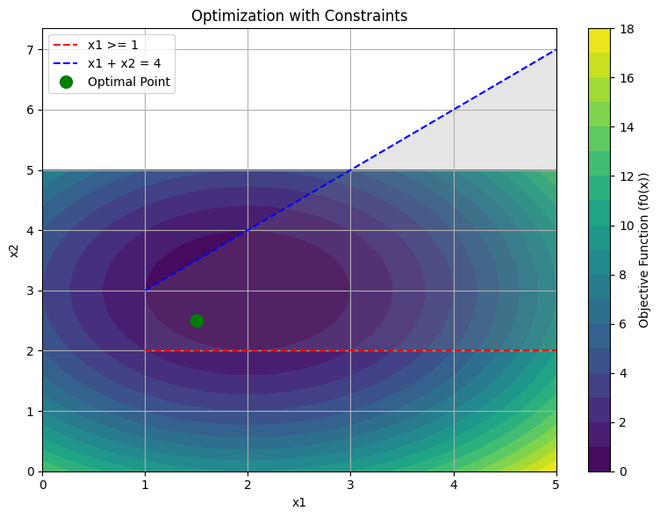
