38 ELU Activation TensorFlow Tutorial

The video discusses activation functions in TensorFlow (sigmoid, tanh, ReLU, leaky ReLU, PReLU and ELU), with a focus on ELU, the exponential linear unit. ELUs can take negative values, which pushes the mean of the activations closer to zero. Mean activations that are closer to zero enable faster learning because they bring the gradient closer to the natural gradient.
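The effect on the mean can be checked numerically. The following is a minimal sketch in plain Python, not TensorFlow code: the helper names `relu` and `elu`, the `alpha=1.0` default, and the evenly spaced input range are assumptions chosen for illustration. It feeds the same zero-centred inputs through both functions and compares the average output.

```python
import math

def relu(x):
    # ReLU: negative inputs are clipped to 0.
    return max(x, 0.0)

def elu(x, alpha=1.0):
    # ELU: identity for positive inputs, alpha * (exp(x) - 1) otherwise.
    return x if x > 0 else alpha * math.expm1(x)

# Evenly spaced, zero-centred inputs on [-3, 3] (illustrative only).
inputs = [i / 10.0 for i in range(-30, 31)]

relu_mean = sum(relu(x) for x in inputs) / len(inputs)
elu_mean = sum(elu(x) for x in inputs) / len(inputs)

print(f"mean ReLU output: {relu_mean:.3f}")
print(f"mean ELU output:  {elu_mean:.3f}")
```

Because ELU keeps a negative contribution from the negative inputs, its average output on this sample sits closer to zero than the ReLU average.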

ELU is an activation function used in neural networks and an extension of the widely used ReLU activation function. Before looking at ELU, it helps to recognize the shortcomings of ReLU and leaky ReLU. ReLU returns 0 for any negative input: with default values, the Keras ReLU activation computes max(x, 0), the element-wise maximum of 0 and the input tensor. ELU, by contrast, returns negative values for negative inputs and saturates to a negative value as the argument gets smaller.
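As a rough sketch of the behaviour described above, ELU is commonly written as f(x) = x for x > 0 and f(x) = alpha * (exp(x) - 1) otherwise. The pure-Python function below is an illustration only, with `alpha=1.0` assumed as the default; in TensorFlow the built-in equivalents are `tf.nn.elu` and `tf.keras.activations.elu`.

```python
import math

def elu(x, alpha=1.0):
    """Exponential linear unit for a single float (illustrative helper)."""
    if x > 0:
        return x
    # expm1(x) computes exp(x) - 1 accurately for small |x|.
    return alpha * math.expm1(x)

# Positive inputs pass through; negative inputs approach -alpha.
for x in (-10.0, -5.0, -1.0, 0.0, 2.0):
    print(f"elu({x:6.1f}) = {elu(x):.5f}")
```

For very negative inputs the output levels off near `-alpha`, which is the saturation mentioned above.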

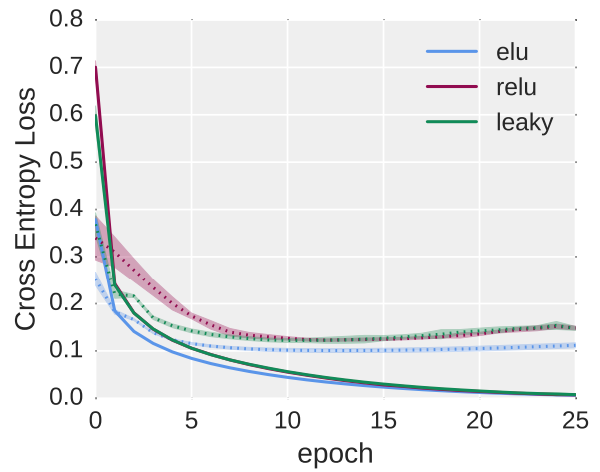

ELU is best understood by its position relative to other popular activation functions, and it is available in both PyTorch and TensorFlow, including as a Keras activation. Comparing ReLU and ELU comes down to their differences and advantages: ReLU outputs zero for every negative input, while ELU produces negative values that saturate as the argument gets smaller. Those differences are what to weigh when choosing the right one for your neural network.
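One concrete difference between ReLU and ELU is the gradient on negative inputs. The sketch below uses hypothetical helper names (`relu_grad`, `elu_grad`) and assumes `alpha=1.0`; it evaluates the analytic derivative of each function at a few points rather than calling any framework.

```python
import math

def relu_grad(x):
    # Derivative of max(x, 0): 1 for positive inputs, 0 otherwise.
    return 1.0 if x > 0 else 0.0

def elu_grad(x, alpha=1.0):
    # Derivative of ELU: 1 for positive inputs, alpha * exp(x) otherwise.
    return 1.0 if x > 0 else alpha * math.exp(x)

for x in (-3.0, -1.0, -0.1, 0.5, 2.0):
    print(f"x={x:5.1f}  relu'={relu_grad(x):.3f}  elu'={elu_grad(x):.3f}")
```

For negative inputs the ReLU gradient is exactly zero, while the ELU gradient stays positive and shrinks toward zero as the input becomes more negative.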

