3 3 Bias Variability Errors

Bias and variance are two fundamental concepts that help explain a model’s prediction errors in machine learning. Bias refers to the error caused by oversimplifying a model, while variance refers to the error caused by making the model too sensitive to its training data. In this article, you’ll understand exactly what bias and variance mean, how to spot them in your models, and, more importantly, how to fix them.

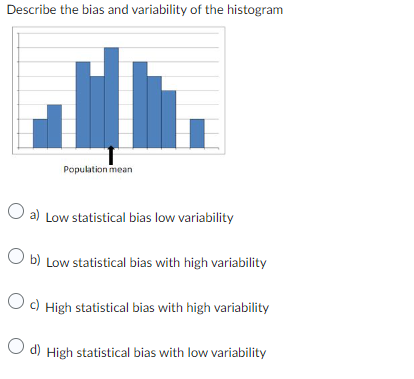

The bias–variance decomposition is a way of analyzing a learning algorithm's expected generalization error with respect to a particular problem as a sum of three terms: the bias, the variance, and a quantity called the irreducible error, which results from noise in the problem itself. In this explainer, we dive into the technical details of the bias–variance tradeoff and prediction error, painting a picture of how to build the right model for a dataset. Bias and variance are the reducible errors of a machine learning model.
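The three-term sum described above can be written out for squared-error loss. This is a sketch under assumptions not stated in the text: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$, $\hat{f}$ is the model fitted on a random training set, and the expectation is taken over training sets and noise. The symbols $f$, $\hat{f}$, $\varepsilon$, and $\sigma^2$ are introduced here for illustration.

```latex
\mathbb{E}\!\left[\big(y - \hat{f}(x)\big)^2\right]
  = \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
  + \underbrace{\mathbb{E}\!\left[\big(\hat{f}(x) - \mathbb{E}[\hat{f}(x)]\big)^2\right]}_{\text{variance}}
  + \underbrace{\sigma^2}_{\text{irreducible error}}
```

Note that the bias enters squared. The first two terms depend on the choice of model, while $\sigma^2$ does not, which is why only bias and variance count as reducible.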

In this guide, we’ll break down bias, variance, and irreducible error in simple terms, show how they add up mathematically, and clarify when one of these errors can be zero. The bias–variance tradeoff describes the balance between a model being too simple and too complex. A simple model may miss important patterns (high bias), while a very complex model may learn noise from the training data (high variance). This chapter will begin to dig into some theoretical details of estimating regression functions, in particular how the bias–variance tradeoff helps explain the relationship between model flexibility and the errors a model makes. The bias–variance decomposition measures how sensitive prediction error is to changes in the training data (in this case, y). If there are systematic errors in prediction made regardless of the training data, then there is high bias.
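The link between model flexibility and the two error sources can be checked with a small simulation. The sketch below is illustrative rather than a reference implementation. It assumes a hypothetical true function `true_fn` (a sine curve), Gaussian noise, and polynomial regression via NumPy, none of which come from the text above. It refits a polynomial on many resampled training sets and estimates squared bias and variance on a fixed test grid.

```python
import numpy as np


def true_fn(x):
    """Hypothetical ground-truth regression function (an assumption)."""
    return np.sin(2 * np.pi * x)


def bias_variance(degree, n_train=30, n_trials=200, noise_sd=0.3, seed=0):
    """Estimate squared bias and variance of a polynomial fit by simulation.

    Returns (bias_squared, variance, irreducible_error), each averaged
    over a fixed grid of test points.
    """
    rng = np.random.default_rng(seed)
    x_test = np.linspace(0.05, 0.95, 50)
    preds = np.empty((n_trials, x_test.size))

    for t in range(n_trials):
        # Draw a fresh noisy training set from the same process.
        x = rng.uniform(0.0, 1.0, n_train)
        y = true_fn(x) + rng.normal(0.0, noise_sd, n_train)
        # Fit a polynomial of the requested degree and predict on the grid.
        coefs = np.polyfit(x, y, degree)
        preds[t] = np.polyval(coefs, x_test)

    mean_pred = preds.mean(axis=0)
    # Systematic error of the average prediction: squared bias.
    bias_sq = np.mean((mean_pred - true_fn(x_test)) ** 2)
    # Spread of predictions across training sets: variance.
    variance = np.mean(preds.var(axis=0))
    return bias_sq, variance, noise_sd ** 2


if __name__ == "__main__":
    for degree in (1, 3, 7):
        b, v, n = bias_variance(degree)
        print(f"degree={degree}: bias^2={b:.4f} variance={v:.4f} noise={n:.4f}")
```

Under these assumptions, the straight-line fit (degree 1) should show the largest squared bias and the smallest variance, and raising the degree should shift error from bias toward variance. The noise term stays fixed no matter which model is chosen.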

