23 DataFrame transform() Function in PySpark | PySpark Tutorial

`pyspark.sql.DataFrame.transform(func, *args, **kwargs)` returns a new DataFrame and provides a concise syntax for chaining custom transformations. It is new in version 3.0.0 and, since version 3.4.0, also supports Spark Connect. PySpark provides two `transform()` functions: `pyspark.sql.DataFrame.transform()`, which applies a function to a whole DataFrame, and `pyspark.sql.functions.transform()`, which applies a function to each element of an array column.

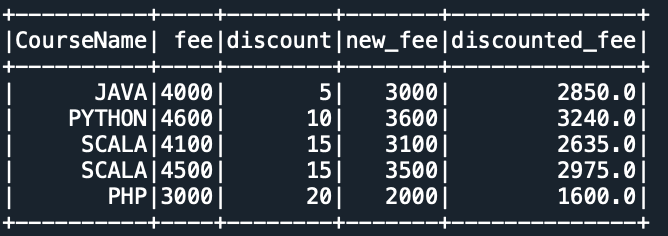

The parameters of `DataFrame.transform()` are `func`, a function that takes a DataFrame and returns a DataFrame; `*args`, positional arguments to pass to `func`; and `**kwargs`, keyword arguments to pass to `func`. In this tutorial, you will learn how to use `transform()` to apply custom, reusable transformations to DataFrames, which is a good way to simplify complex logic and keep your code clean. You will also learn how to apply a transformation to multiple columns of a DataFrame. PySpark is the Python API for Apache Spark; its DataFrame and machine-learning APIs will feel familiar to users of pandas and scikit-learn.

In this guide, we'll explore what DataFrame transformations are, how they work step by step, the main transformation types, their practical applications, and optimization strategies, with code examples and answers to common questions. Note that the `transform()` method of the PySpark DataFrame API does not apply a user-defined function (UDF) to each row: it takes a function that receives the entire DataFrame and returns a new DataFrame. Spark DataFrames are immutable, meaning they cannot be changed in place; instead, you create a new DataFrame from an existing one by applying a set of transformations.
