22 Optimize Joins in Spark: Understand Bucketing for Faster Joins, Sort Merge Join, Broadcast Join

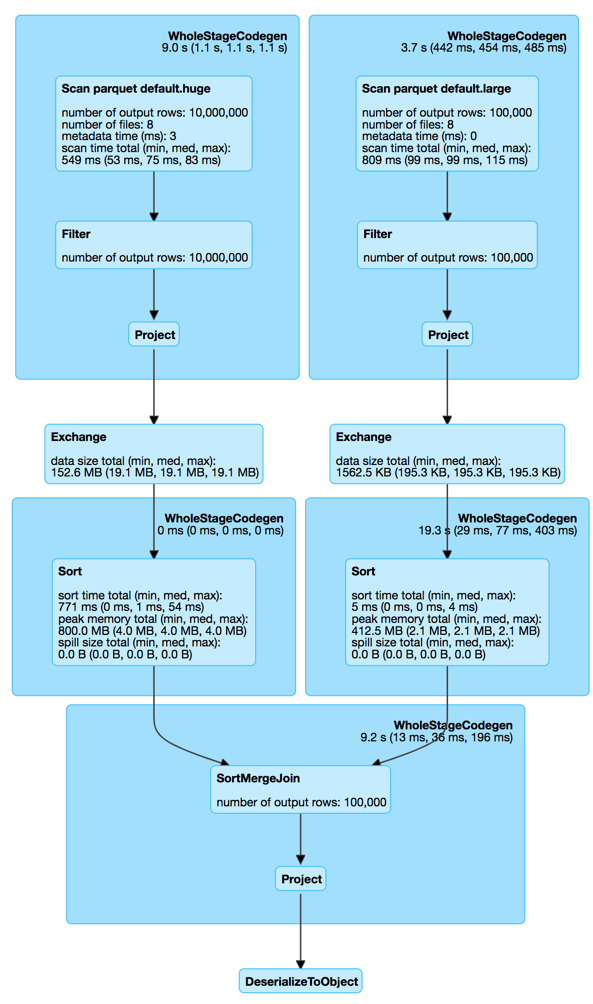

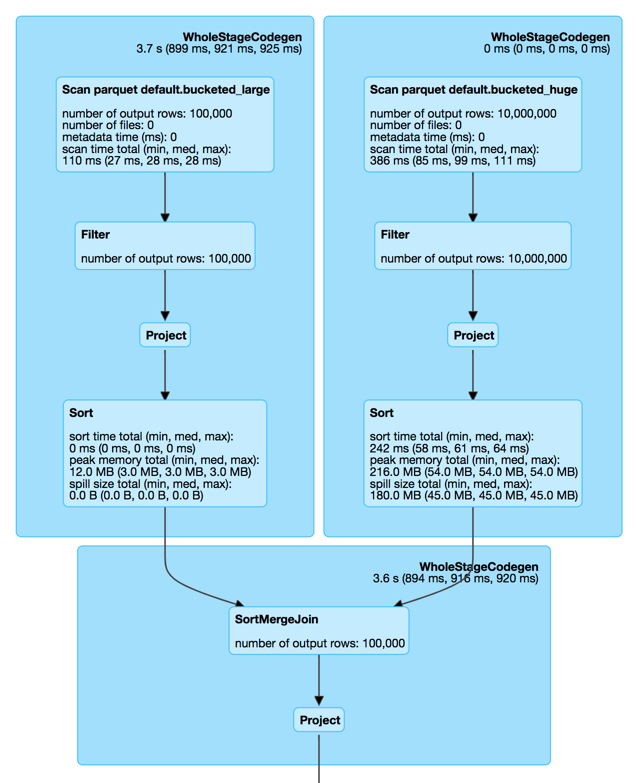

Join Optimization With Bucketing Spark SQL

Optimizing joins in PySpark to avoid data shuffling is a critical skill for efficient data processing. Broadcast joins, partitioning, bucketing, nested data, SQL expressions, and null handling together give you a robust toolkit for boosting performance.

When working with large-scale data in Spark, joins are often the biggest performance bottleneck. Choosing the right join strategy can drastically reduce execution time and cost. Let's break down the most important join strategies in PySpark.

Why join strategy matters in distributed systems like Spark:

* Data is spread across nodes.
* Joins may trigger shuffles (expensive!).
* Poor strategy → …
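To make the link between bucketing and shuffle avoidance concrete, here is a minimal pure-Python sketch, not Spark code. It assumes both inputs are lists of `(key, value)` tuples bucketed on the same key with the same bucket count. The names `bucket_rows` and `bucketed_join` are hypothetical, and Python's built-in `hash` stands in for Spark's own hash function. In Spark itself, bucketed tables are written with `DataFrameWriter.bucketBy`.

```python
from collections import defaultdict


def bucket_rows(rows, num_buckets):
    """Assign each (key, value) row to a bucket by hashing its join key."""
    buckets = defaultdict(list)
    for key, value in rows:
        buckets[hash(key) % num_buckets].append((key, value))
    return buckets


def bucketed_join(left, right, num_buckets=4):
    """Inner-join two lists of (key, value) tuples bucket by bucket."""
    left_buckets = bucket_rows(left, num_buckets)
    right_buckets = bucket_rows(right, num_buckets)
    joined = []
    for bucket_id in range(num_buckets):
        # Equal keys hash to the same bucket id on both sides, so each
        # pair of buckets can be joined without looking at any other bucket.
        for l_key, l_val in left_buckets.get(bucket_id, []):
            for r_key, r_val in right_buckets.get(bucket_id, []):
                if l_key == r_key:
                    joined.append((l_key, l_val, r_val))
    return joined


# Illustrative data only.
orders = [(1, "book"), (2, "pen"), (1, "lamp"), (3, "desk")]
customers = [(1, "Ana"), (2, "Ben"), (4, "Cy")]
result = sorted(bucketed_join(orders, customers))
print(result)
```

Because matching keys always share a bucket, the join result does not depend on the bucket count. That is the property that lets a join over two identically bucketed tables skip the shuffle step.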

Learn how to optimize PySpark joins, reduce shuffles, handle skew, and improve performance across big data pipelines and machine learning workflows.

The video explains how to optimize joins in Spark: what a sort merge join is, what a shuffle hash join is, what broadcast joins are, and what bucketing is and how to use it for better join performance.

Sort merge join is one of the core join strategies in Apache Spark, used especially when datasets are too large to fit in memory, so that a broadcast hash join is not feasible.

The discussion covers shuffle hash join, sort merge join, broadcast joins, and bucketing for better join performance.
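The merge phase of a sort merge join can be sketched in plain Python. This is a simplified single-partition illustration under stated assumptions, not Spark's implementation: inputs are lists of `(key, value)` tuples, the join is an inner equi-join, and the function name `sort_merge_join` is hypothetical. In Spark, both sides are first shuffled by key so that matching keys land in the same partition. The sort and merge then run per partition.

```python
def sort_merge_join(left, right):
    """Inner-join two lists of (key, value) tuples by sorting, then merging."""
    left = sorted(left, key=lambda row: row[0])
    right = sorted(right, key=lambda row: row[0])
    joined = []
    i = j = 0
    while i < len(left) and j < len(right):
        l_key, r_key = left[i][0], right[j][0]
        if l_key < r_key:
            i += 1  # left key has no match yet, advance left
        elif l_key > r_key:
            j += 1  # right key has no match yet, advance right
        else:
            # Find the run of rows on the right that share this key.
            j_end = j
            while j_end < len(right) and right[j_end][0] == l_key:
                j_end += 1
            # Pair every left row with this key against the whole right run.
            while i < len(left) and left[i][0] == l_key:
                for k in range(j, j_end):
                    joined.append((l_key, left[i][1], right[k][1]))
                i += 1
            j = j_end
    return joined


# Illustrative data only.
left_rows = [(3, "c"), (1, "a"), (2, "b"), (2, "bb")]
right_rows = [(2, "x"), (4, "z"), (2, "y"), (1, "w")]
merged = sort_merge_join(left_rows, right_rows)
print(merged)
```

Each side is scanned once after sorting and no hash table over a whole side is needed. That is why this strategy remains usable when neither side fits in memory.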

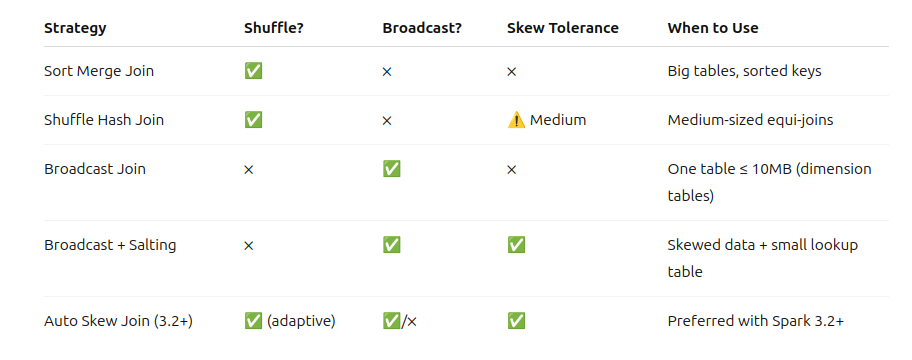

1 4 Demystifying Spark Join Strategies Shuffle Sort Merge Shuffle

Spark offers five distinct join strategies, each with different performance characteristics, memory requirements, and failure modes. The optimizer picks one based on statistics, hints, configuration, and join type, and it often picks wrong when it lacks information.

We will explore three well-known join strategies that Spark offers: shuffle hash join, sort merge join, and broadcast joins. Before we experiment with these strategies, let's set up some groundwork.

In some cases, specifying the join strategy explicitly, for example by using the broadcast() function or setting up bucketing, can further optimize performance, especially when Spark's automatic decision doesn't align perfectly with your workflow.

Spark optimizes join strategies based on data size, partitioning, and join conditions. We'll explore the four key join strategies in Spark: broadcast hash join, shuffle hash …
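As a rough illustration of what a broadcast hash join does, the following pure-Python sketch builds a lookup table from the small side once and then streams each partition of the large side past it. It is a conceptual model under stated assumptions, not Spark's implementation: partitions are modelled as plain lists, rows as `(key, value)` tuples, and the name `broadcast_hash_join` is hypothetical. In PySpark, the corresponding hint is given by wrapping the small DataFrame in `broadcast()` from `pyspark.sql.functions` before joining.

```python
from collections import defaultdict


def broadcast_hash_join(large_partitions, small_rows):
    """Inner-join partitioned (key, value) rows against a small table."""
    # Build the hash table once; this plays the role of the copy that
    # would be shipped to every executor.
    lookup = defaultdict(list)
    for key, value in small_rows:
        lookup[key].append(value)

    joined = []
    for partition in large_partitions:
        # Each partition is processed where it already lives; the large
        # side is never repartitioned.
        for key, value in partition:
            for small_value in lookup.get(key, []):
                joined.append((key, value, small_value))
    return joined


# Illustrative data only.
large = [[(1, "a"), (2, "b")], [(3, "c"), (1, "d")]]
small = [(1, "x"), (3, "y")]
broadcast_result = broadcast_hash_join(large, small)
print(broadcast_result)
```

The trade-off is memory: the whole small side must fit in the lookup table. That is why this strategy is reserved for cases where one input is small.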
