2015 DL Summer School: Tutorial on Neural Network Optimization Problems

This video is an archive of the "Tutorial on Neural Network Optimization Problems" lecture given by Ian Goodfellow at the 2015 Deep Learning Summer School. As the lecture puts it: "Do not blame optimization troubles on one specific boogeyman simply because it is the one that frightens you. Consider all possible obstacles, and seek evidence for which ones are there."

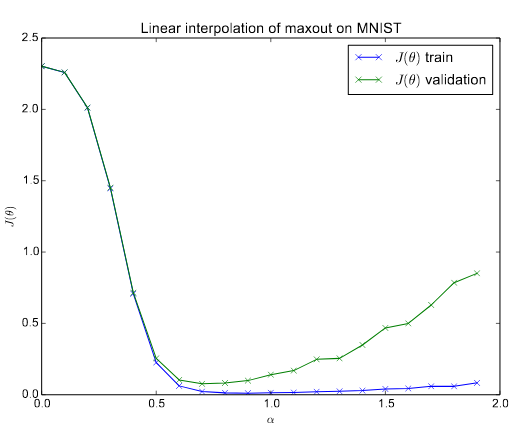

Figure 1: Experiments with maxout on MNIST. Top row: the state-of-the-art model, with adversarial training. Bottom row: the previous best maxout network, without adversarial training. [...] column: the linear interpolation experiment.

Having performed experiments to understand the behavior of neural network optimization on supervised feedforward networks, the authors then verify that the same behavior occurs for more advanced networks: on a straight path from initialization to solution, these networks also never encounter any significant obstacles.

The presentation covers several papers on identifying and characterizing issues such as saddle points, and it examines optimization methods including gradient descent, Newton's method, and SGD. While SGD often gets stuck, this is typically at a saddle point rather than at a local minimum.
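The saddle-point discussion can be made concrete with a toy sketch. It assumes the hypothetical function f(x, y) = x² − y², which has a saddle at the origin. This example is our own choice and does not come from the tutorial. Newton's method solves for a point where the gradient vanishes, so it lands exactly on the saddle in one step. Gradient descent started just off the saddle's attracting direction spends dozens of iterations near it with a very small gradient, which is what "stuck at a saddle" looks like in practice. It then escapes along the negative-curvature direction y. The helper names `grad`, `newton_step`, and `gradient_descent` are illustrative only.

```python
import math
from typing import Tuple

# Hypothetical toy saddle: f(x, y) = x**2 - y**2, with a saddle point at (0, 0).
def grad(x: float, y: float) -> Tuple[float, float]:
    """Gradient of f(x, y) = x**2 - y**2."""
    return 2.0 * x, -2.0 * y

def newton_step(x: float, y: float) -> Tuple[float, float]:
    """One Newton step. The Hessian is diag(2, -2), so H^-1 * grad divides
    each gradient component by its curvature (2 for x, -2 for y)."""
    gx, gy = grad(x, y)
    return x - gx / 2.0, y - gy / (-2.0)

def gradient_descent(x: float, y: float, lr: float = 0.1,
                     max_steps: int = 1000) -> Tuple[int, float]:
    """Run plain gradient descent until |y| > 1, i.e. until it has clearly left
    the saddle. Returns the step count and the smallest gradient norm seen."""
    min_norm = math.inf
    for step in range(1, max_steps + 1):
        gx, gy = grad(x, y)
        min_norm = min(min_norm, math.hypot(gx, gy))
        x, y = x - lr * gx, y - lr * gy
        if abs(y) > 1.0:
            return step, min_norm
    return max_steps, min_norm

if __name__ == "__main__":
    # Newton's method jumps straight onto the saddle point from (1, 1e-6).
    print("Newton step:", newton_step(1.0, 1e-6))
    # Gradient descent lingers near the saddle (tiny gradient) before escaping along y.
    steps, min_norm = gradient_descent(1.0, 1e-6)
    print(f"GD left the saddle after {steps} steps; smallest gradient norm {min_norm:.2e}")
```

The number of steps gradient descent spends near the saddle grows as the starting offset in y shrinks. With an offset of exactly zero, it converges to the saddle just as Newton's method does.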

Presentation slides and a video link for the 2015 Deep Learning Summer School are collected in dschappler's "montreal summer school 15" repository on GitHub (for example, "03 deeplearning2015 bottou multilayer neural networks 1.pdf" on the master branch).

Figure 2 (from the Net2Net paper): the Net2WiderNet transformation. In this example, the teacher network has an input layer with two inputs x[1] and x[2], a hidden layer with two rectified linear hidden units h[1] and h[2], and an output y.

The paper "Qualitatively Characterizing Neural Network Optimization Problems" explores the loss landscape of neural networks and compares the properties of modern successful networks to architectures that were studied in the past. The authors introduce a simple analysis technique to look for evidence that modern neural networks are overcoming local optima. They find that, in fact, on a straight path from initialization to solution, a variety of state-of-the-art neural networks never encounter any significant obstacles.
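The straight-path technique can be sketched in a few lines. The loss J is evaluated at θ(α) = (1 − α)θ_i + αθ_f for α from 0 to 1, where θ_i is the initialization and θ_f is the trained solution. A bump along the resulting curve would indicate an obstacle. This minimal version assumes parameters are stored as a flat list of floats. It uses a hypothetical two-parameter least-squares problem in place of a real network. For a convex toy like this, the curve cannot contain obstacles, so the example only demonstrates the mechanics.

```python
from typing import Callable, List, Sequence, Tuple

def interpolate_losses(
    loss_fn: Callable[[List[float]], float],
    theta_init: Sequence[float],
    theta_final: Sequence[float],
    num_points: int = 11,
) -> List[Tuple[float, float]]:
    """Return (alpha, loss) pairs along theta(alpha) = (1 - alpha) * theta_init + alpha * theta_final."""
    if len(theta_init) != len(theta_final):
        raise ValueError("theta_init and theta_final must have the same length")
    if num_points < 2:
        raise ValueError("num_points must be at least 2")
    curve = []
    for i in range(num_points):
        alpha = i / (num_points - 1)  # evenly spaced alphas from 0.0 to 1.0
        theta = [(1.0 - alpha) * a + alpha * b for a, b in zip(theta_init, theta_final)]
        curve.append((alpha, loss_fn(theta)))
    return curve

# Hypothetical toy problem: fit y = w0 + w1 * x to points on the line y = 1 + 2x.
XS = [0.0, 1.0, 2.0, 3.0]
YS = [1.0, 3.0, 5.0, 7.0]

def mse(theta: List[float]) -> float:
    """Mean squared error of the linear model with parameters theta = [w0, w1]."""
    w0, w1 = theta
    return sum((w0 + w1 * x - y) ** 2 for x, y in zip(XS, YS)) / len(XS)

if __name__ == "__main__":
    # [0, 0] stands in for the initialization and [1, 2] for the trained solution.
    for alpha, loss in interpolate_losses(mse, [0.0, 0.0], [1.0, 2.0]):
        print(f"alpha={alpha:.1f}  loss={loss:.4f}")
```

For a real network, `loss_fn` would evaluate the training objective with the flattened weights. Extending the α range slightly beyond [0, 1] is a common variation that shows the landscape on either side of the two endpoints.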


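The Net2WiderNet transformation in Figure 2 widens a network without changing the function it computes. A hidden unit is replicated, and its outgoing weight is shared among the copies, so the student reproduces the teacher's outputs exactly. The sketch below assumes a bias-free version of the two-input, two-ReLU-unit, one-output teacher from the caption, with made-up weights. It covers only the case of adding a single replica, and the names `forward` and `widen_by_replication` are hypothetical.

```python
from typing import List, Tuple

Matrix = List[List[float]]

def relu(z: float) -> float:
    return max(0.0, z)

def forward(w_in: Matrix, w_out: List[float], x: List[float]) -> float:
    """Bias-free net: h_j = relu(sum_i w_in[j][i] * x[i]), y = sum_j w_out[j] * h_j."""
    hidden = [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in]
    return sum(v * h for v, h in zip(w_out, hidden))

def widen_by_replication(w_in: Matrix, w_out: List[float],
                         unit: int) -> Tuple[Matrix, List[float]]:
    """Add one copy of hidden unit `unit`.

    The copy receives the same incoming weights, and the original outgoing weight
    is split evenly between the two copies, so the output is unchanged.
    The input lists are not modified.
    """
    new_w_in = [list(row) for row in w_in] + [list(w_in[unit])]
    new_w_out = list(w_out) + [w_out[unit] / 2.0]
    new_w_out[unit] = w_out[unit] / 2.0
    return new_w_in, new_w_out

if __name__ == "__main__":
    # Hypothetical teacher weights: inputs x[1], x[2] -> ReLU units h[1], h[2] -> output y.
    teacher_in = [[0.5, -1.0], [2.0, 1.0]]
    teacher_out = [1.5, -3.0]
    # Replicate h[2] (index 1); the choice of unit here is for illustration.
    student_in, student_out = widen_by_replication(teacher_in, teacher_out, unit=1)
    x = [1.0, 2.0]
    # Both calls print -12.0: the wider student computes the same function.
    print(forward(teacher_in, teacher_out, x), forward(student_in, student_out, x))
```

Because the student starts out computing exactly the teacher's function, it can continue training from the teacher's solution rather than from scratch.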