15 LLM Coding Benchmarks

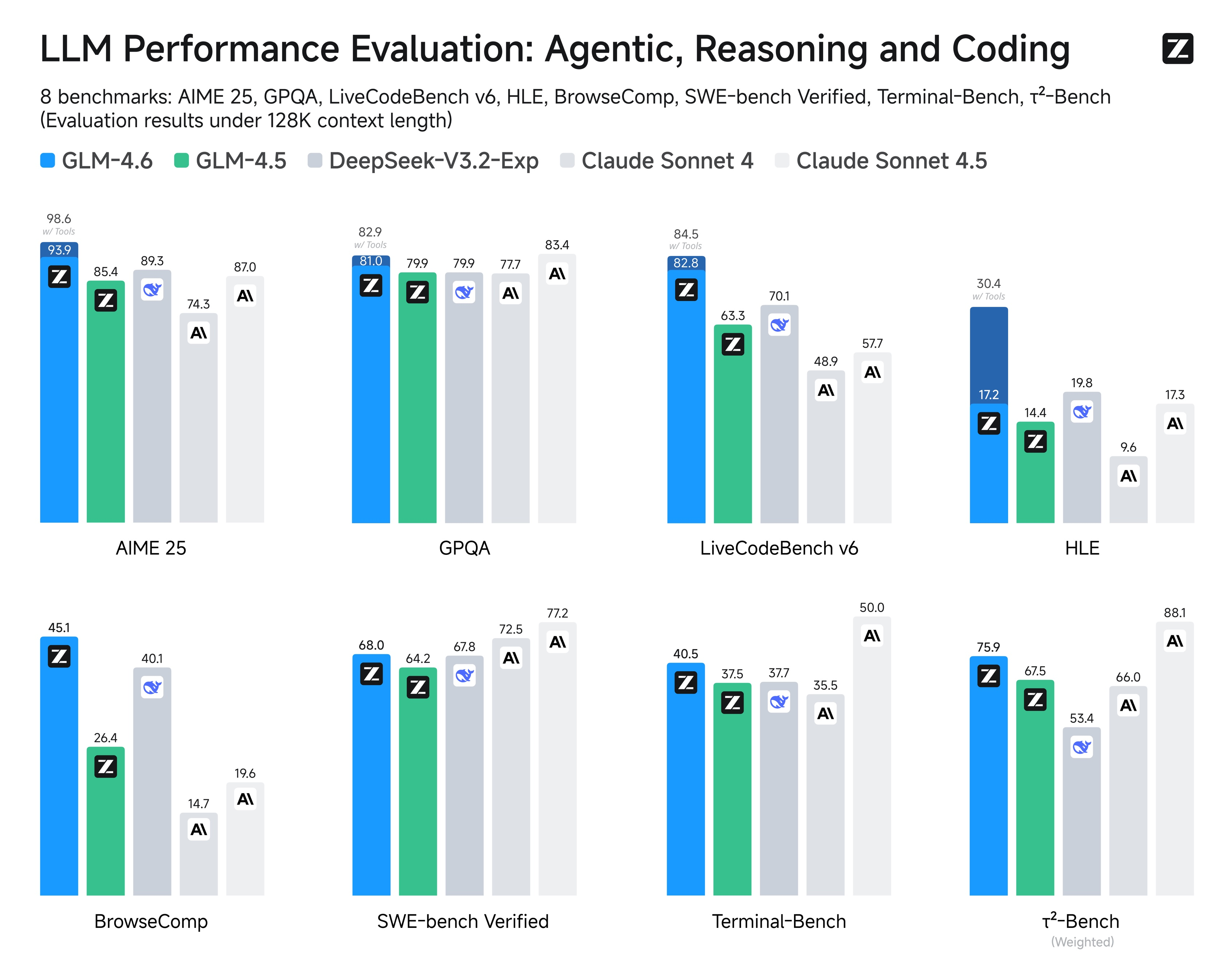

This post highlights 15 LLM coding benchmarks designed to evaluate and compare how different models perform on coding tasks, including code completion, snippet generation, and debugging. It compares every major LLM on the benchmarks that matter most for coding: SWE-bench (real GitHub issue resolution) and Aider's polyglot benchmark (multi-language coding tasks). Pricing is included for every model so you can calculate your own cost-performance tradeoff.
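One way to work out the cost-performance tradeoff mentioned above is to divide the expected cost of one attempt by the pass rate, which gives an approximate cost per solved task. The sketch below is illustrative only: the class name, field names, and token counts are assumptions, and the prices and pass rates you plug in should come from the provider's price list and the benchmark you trust.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    """Hypothetical record for one model; all fields are supplied by you."""
    name: str
    pass_rate: float      # fraction of benchmark tasks solved, between 0 and 1
    input_price: float    # USD per million input tokens
    output_price: float   # USD per million output tokens


def cost_per_solved_task(model: ModelResult,
                         input_tokens: int,
                         output_tokens: int) -> float:
    """Approximate USD spent per successfully solved task.

    Assumes every attempt uses roughly the same number of tokens and that
    failed attempts are paid for but produce nothing usable.
    """
    cost_per_attempt = (
        input_tokens * model.input_price + output_tokens * model.output_price
    ) / 1_000_000
    if model.pass_rate <= 0:
        return float("inf")  # a model that never passes has unbounded cost
    return cost_per_attempt / model.pass_rate


def rank_by_value(models: list[ModelResult],
                  input_tokens: int,
                  output_tokens: int) -> list[ModelResult]:
    """Sort models from cheapest to most expensive per solved task."""
    return sorted(
        models,
        key=lambda m: cost_per_solved_task(m, input_tokens, output_tokens),
    )
```

A cheaper model with a lower pass rate can still come out ahead on this measure, which is why pass rate and price are best read together rather than separately.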

We rank every major LLM, open and closed source, across SWE-bench, HumanEval, LiveCodeBench, and Terminal-Bench coding benchmarks. Compare the best LLMs for coding, software engineering, and programming. A live leaderboard ranks 189 AI models on SWE-bench Pro, SWE-rebench, LiveCodeBench, HumanEval, SWE-bench Verified, flteval, and React Native Evals. See which LLM writes the best code (updated March 2026).

I benchmarked Claude, GPT, Gemini, DeepSeek, and 11 others on my actual daily coding tasks. See pass rates, latency, costs, and exactly when to use cheap vs. expensive models. This article compares the top AI coding models, the best LLM for software engineering, the best AI model for code generation, and the best budget or open-weight options for real development workflows.

This coding LLM leaderboard compares the latest models on engineering-specific benchmarks including SWE-bench, LiveCodeBench, Aider Polyglot, BFCL tool use, and more. The data comes from model providers as well as independently run evaluations by Vellum or the open source community. Explore the latest rankings of coding-focused large language models (LLMs) based on their performance in coding tasks and benchmarks. Find the best LLM for coding in 2026: we compared 50 models on agentic coding, scaffold-controlled benchmarks, real practitioner use, and cost.

When you test an LLM for coding tasks, benchmarks provide repeatable, objective measurements. They replace subjective code review with automated testing, letting you compare models based on how often they generate working code.
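To make "how often they generate working code" concrete, here is a minimal sketch of automated scoring: each generated solution is run against a set of assertions, and the results are summarized with the pass@k estimator commonly reported for HumanEval-style benchmarks. Whether any particular leaderboard scores models this way is an assumption, and the function names here are hypothetical.

```python
from math import comb


def passes_tests(candidate_source: str, test_source: str) -> bool:
    """Return True if the candidate code runs and every assertion holds.

    WARNING: exec() on model output is unsafe outside a sandbox. Real
    harnesses isolate each run (containers, timeouts, resource limits).
    """
    namespace: dict = {}
    try:
        exec(candidate_source, namespace)  # define the candidate's functions
        exec(test_source, namespace)       # run assertions against them
    except Exception:
        return False
    return True


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate: 1 - C(n - c, k) / C(n, k).

    n: samples generated for a task, c: samples that passed, k: budget.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    return 1.0 - comb(n - c, k) / comb(n, k)


def score_task(candidates: list[str], test_source: str, k: int) -> float:
    """Run all candidates for one task and return its pass@k estimate."""
    passed = sum(passes_tests(src, test_source) for src in candidates)
    return pass_at_k(len(candidates), passed, k)
```

With k = 1 this reduces to the plain pass rate (passing samples divided by total samples), which is the figure most leaderboards quote.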

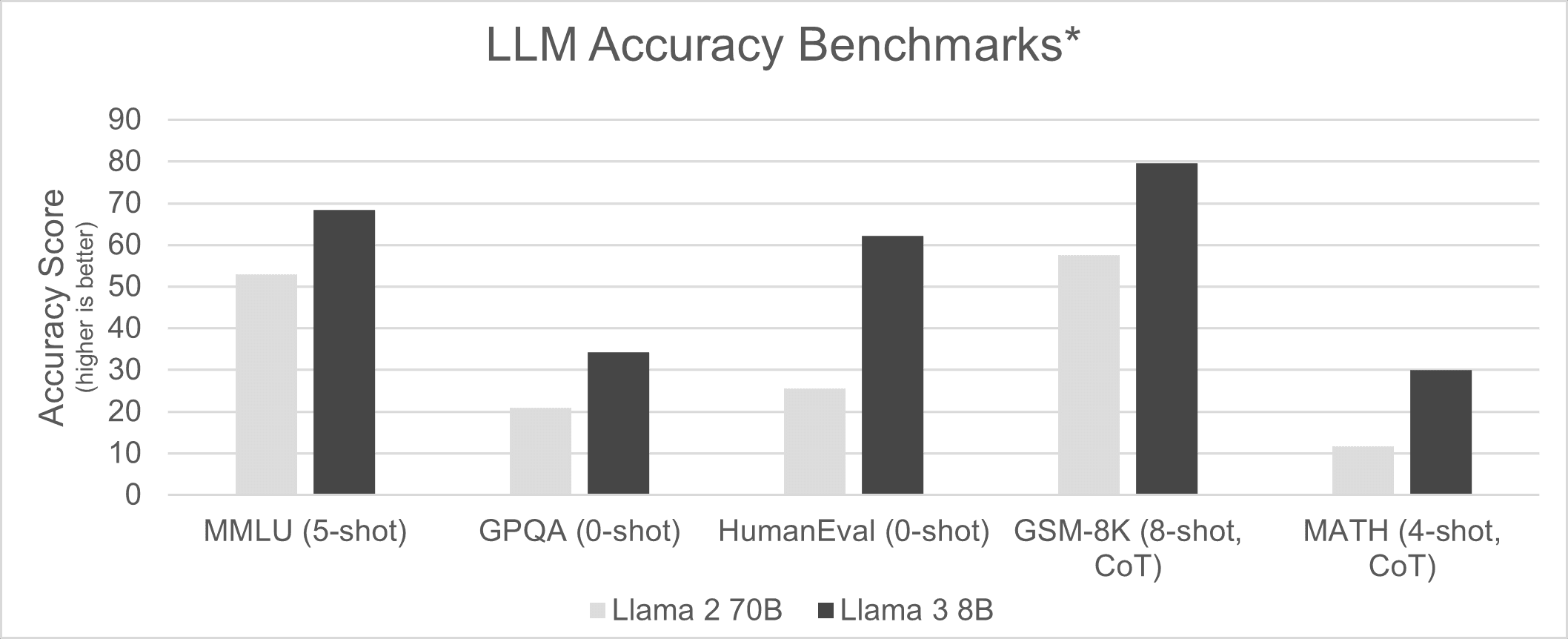


