10-Min Walkthrough of Langfuse: Open-Source LLM Observability, Evaluation, and Prompt Management

Langfuse is an open-source LLM engineering platform for observability. This 10-minute walkthrough covers its open-source LLM observability, evaluation, and prompt management features. End-to-end examples and resources help you get started with Langfuse for LLM tracing, monitoring, prompt management, and more.

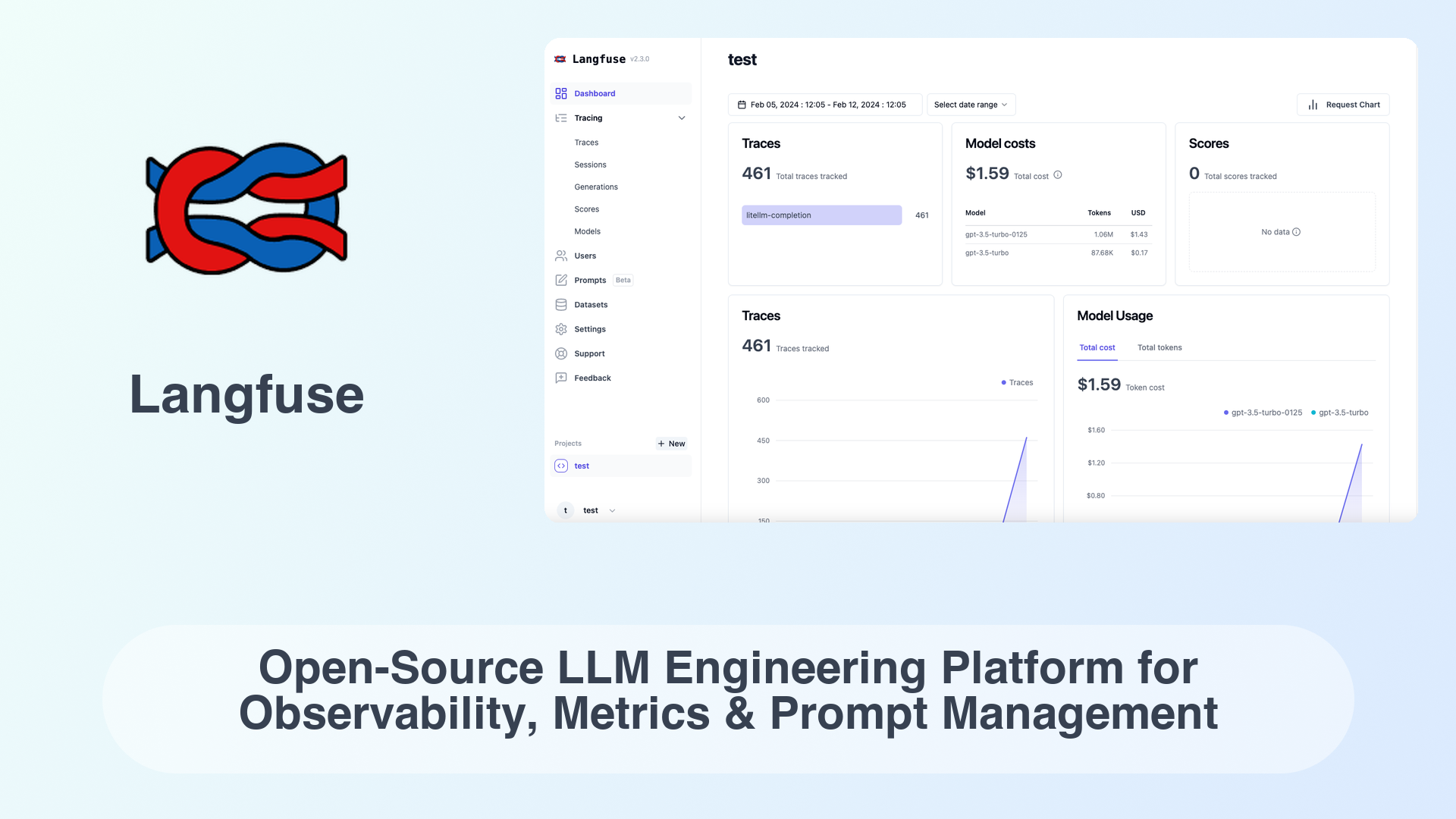

Learn the fundamentals of LLM monitoring and observability, from tracing to evaluation, and how to set up a dashboard using Langfuse. Let's start with an example: you have built a complex LLM application that answers user queries about a specific domain.

In this video, Langfuse co-founder and CEO Marc gives a high-level introduction to the different Langfuse products: observability, prompt management, and evaluations. Meet Langfuse, the open-source observability tool for LLM applications, and discover how it brings visibility, organization, and quality control to LLM apps. Follow this tutorial to build a complete document Q&A project.
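In a domain Q&A application like the one described above, a single user query typically fans out into several steps (for example, retrieving documents and then calling a model), and tracing means recording the input, output, and timing of each step under one trace. The sketch below is a minimal, library-free illustration of that idea, assuming a two-step retrieve-then-generate pipeline. The `Trace` and `Span` classes, the `answer` function, and the stubbed outputs are all hypothetical; this is not the Langfuse SDK, which provides its own instrumentation.

```python
import time
import uuid
from dataclasses import dataclass, field
from typing import Any, List, Optional


@dataclass
class Span:
    """One step inside a trace (hypothetical), e.g. retrieval or an LLM call."""
    name: str
    input_data: Any = None
    output_data: Any = None
    start: float = field(default_factory=time.perf_counter)
    end: Optional[float] = None

    @property
    def latency_s(self) -> float:
        # Latency is only meaningful once the span has been closed.
        if self.end is None:
            return 0.0
        return self.end - self.start


@dataclass
class Trace:
    """All spans recorded while answering one user query (hypothetical)."""
    user_query: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    spans: List[Span] = field(default_factory=list)

    def span(self, name: str, input_data: Any) -> Span:
        s = Span(name=name, input_data=input_data)
        self.spans.append(s)
        return s


def answer(query: str) -> Trace:
    trace = Trace(user_query=query)

    # Step 1: retrieval, stubbed with a fixed document for illustration.
    retrieval = trace.span("retrieve-docs", input_data=query)
    retrieval.output_data = ["Langfuse is an open-source LLM engineering platform."]
    retrieval.end = time.perf_counter()

    # Step 2: the LLM call, stubbed; a real app would call a model API here.
    generation = trace.span(
        "llm-generation",
        input_data={"query": query, "context": retrieval.output_data},
    )
    generation.output_data = "Yes, Langfuse is open source."
    generation.end = time.perf_counter()
    return trace


trace = answer("Is Langfuse open source?")
for s in trace.spans:
    print(f"{s.name}: {s.latency_s * 1000:.3f} ms -> {s.output_data!r}")
```

Because every span keeps its own input and output, you can later see exactly which step produced a bad answer, which is what makes traces useful as raw material for evaluation and dashboards.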

Get an overview of the complete Langfuse platform and learn how it helps teams build better LLM applications through observability, prompt management, and evaluation.

This guide shows you how to deploy Langfuse on Hugging Face Spaces and start instrumenting your LLM application for observability. This integration helps you experiment with LLM APIs on the Hugging Face Hub, manage your prompts in one place, and evaluate model outputs.

In this blog post, I will demonstrate how to build an LLM-powered solution and walk through using Langfuse for LLM observability and evaluation. For more information on …

In this tutorial, we'll build and deploy a chat backend with full observability. You'll learn how to instrument LLM calls, track costs across different models, collect user feedback, and use the resulting data to make informed optimization decisions.
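Tracking costs across models and collecting user feedback both come down to bookkeeping over recorded LLM calls: multiply token counts by per-token prices, then group by model alongside feedback scores. The sketch below shows that arithmetic under stated assumptions. The model names `model-a` and `model-b`, their prices, the `Generation` record, and the thumbs-up/down encoding are all hypothetical, and this is not how Langfuse computes costs internally.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical USD prices per 1,000 tokens; real prices vary by provider and change over time.
PRICES_PER_1K = {
    "model-a": {"input": 0.0005, "output": 0.0015},
    "model-b": {"input": 0.0100, "output": 0.0300},
}


@dataclass
class Generation:
    """One recorded LLM call (hypothetical record shape)."""
    model: str
    input_tokens: int
    output_tokens: int
    feedback: Optional[int] = None  # 1 = thumbs up, 0 = thumbs down, None = no feedback


def cost_usd(g: Generation) -> float:
    # Cost = tokens x price per token, priced separately for input and output.
    p = PRICES_PER_1K[g.model]
    return (g.input_tokens * p["input"] + g.output_tokens * p["output"]) / 1000


def summarize(gens: List[Generation]) -> Dict[str, dict]:
    """Group calls by model and report call count, cost, and feedback rate."""
    totals = defaultdict(lambda: {"calls": 0, "cost": 0.0, "scores": []})
    for g in gens:
        t = totals[g.model]
        t["calls"] += 1
        t["cost"] += cost_usd(g)
        if g.feedback is not None:
            t["scores"].append(g.feedback)

    report = {}
    for model, t in totals.items():
        scores = t["scores"]
        report[model] = {
            "calls": t["calls"],
            "total_cost_usd": round(t["cost"], 6),
            "avg_cost_usd": round(t["cost"] / t["calls"], 6),
            # Share of rated calls that received a thumbs up; None if nobody rated.
            "positive_feedback_rate": sum(scores) / len(scores) if scores else None,
        }
    return report


# Usage with made-up example calls:
logs = [
    Generation("model-a", 1200, 300, feedback=1),
    Generation("model-a", 800, 200, feedback=0),
    Generation("model-b", 1000, 250, feedback=1),
]
for model, stats in summarize(logs).items():
    print(model, stats)
```

Putting cost and feedback side by side per model is the kind of view that supports an optimization decision, such as whether a cheaper model's answers are rated well enough to replace a more expensive one.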
