1.5 Byte Pair Encoding

Byte pair encoding (BPE) was initially developed as an algorithm to compress texts. In that original form, it replaces the most frequent pair of adjacent bytes with a new byte that does not occur in the initial dataset, and a lookup table of the replacements is required to rebuild the initial dataset. OpenAI later used BPE for tokenization when pretraining the GPT model, and it is now used by many Transformer models, including GPT, GPT-2, RoBERTa, BART, and DeBERTa.
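The compression form of the algorithm can be sketched in a few lines of Python. This is a minimal illustration, not the original published implementation: it assumes replacement codes are drawn from byte values absent from the input, stops when no pair occurs more than once, and uses hypothetical function names `bpe_compress` and `bpe_decompress`.

```python
from collections import Counter


def bpe_compress(data: bytes) -> tuple[bytes, dict[int, tuple[int, int]]]:
    """Repeatedly replace the most frequent adjacent byte pair with an unused byte."""
    seq = list(data)
    present = set(seq)
    unused = [b for b in range(256) if b not in present]  # candidate replacement codes
    table: dict[int, tuple[int, int]] = {}  # replacement byte -> pair it stands for
    while unused:
        pairs = Counter(zip(seq, seq[1:]))
        if not pairs:
            break
        pair, count = pairs.most_common(1)[0]
        if count < 2:  # replacing a pair that occurs once saves nothing
            break
        new = unused.pop(0)
        table[new] = pair
        out, i = [], 0
        while i < len(seq):  # left-to-right, non-overlapping replacement
            if i + 1 < len(seq) and (seq[i], seq[i + 1]) == pair:
                out.append(new)
                i += 2
            else:
                out.append(seq[i])
                i += 1
        seq = out
    return bytes(seq), table


def bpe_decompress(packed: bytes, table: dict[int, tuple[int, int]]) -> bytes:
    """Rebuild the original data using the lookup table of replacements."""
    seq = list(packed)
    # Expand the newest codes first: a later code may contain earlier ones.
    for new in reversed(list(table)):
        a, b = table[new]
        out = []
        for s in seq:
            out.extend((a, b) if s == new else (s,))
        seq = out
    return bytes(seq)
```

On the input `b"aaabdaaabac"`, this reduces 11 bytes to 5 with three entries in the lookup table, and `bpe_decompress` restores the original exactly.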

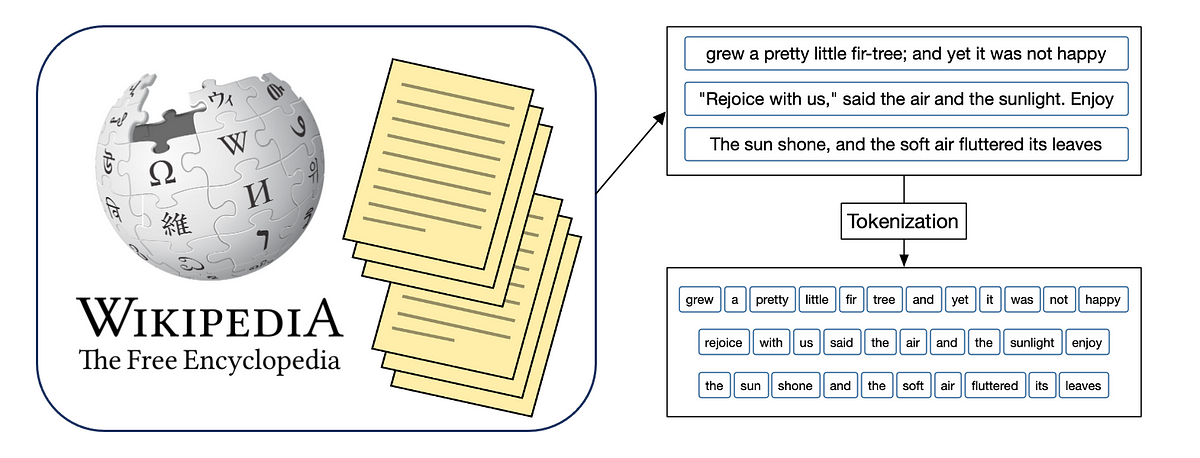

For tokenization, BPE works by repeatedly finding the most common pair of adjacent symbols (characters or bytes) in the text and merging them into a new subword until the vocabulary reaches a desired size. This post explores the process of byte pair encoding, from handling raw training text and pre-tokenization to constructing vocabularies and tokenizing new text. Byte pair encoding is one of the most popular subword tokenization techniques in natural language processing (NLP), and it plays a crucial role in the efficiency of large language models (LLMs) such as GPT; BERT uses WordPiece, a closely related subword method. In this guide, we'll demystify byte pair encoding, explore its origins, applications, and impact on modern AI, and show you how to apply BPE in your own data science projects.
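The training loop described above can be sketched as follows. This is a minimal character-level sketch under stated assumptions: pre-tokenization is plain whitespace splitting, the end-of-word marker some implementations add is omitted, ties are broken by first occurrence, and `train_bpe` is a hypothetical name rather than any library's API.

```python
from collections import Counter


def train_bpe(corpus: str, num_merges: int) -> list[tuple[str, str]]:
    """Learn up to `num_merges` merge rules from a whitespace-split corpus."""
    # Pre-tokenization (assumption): split on whitespace and count each word.
    word_freqs = Counter(corpus.split())
    # Every word starts as a tuple of single characters.
    splits = {word: tuple(word) for word in word_freqs}
    merges: list[tuple[str, str]] = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by word frequency.
        pair_freqs: Counter = Counter()
        for word, freq in word_freqs.items():
            symbols = splits[word]
            for pair in zip(symbols, symbols[1:]):
                pair_freqs[pair] += freq
        if not pair_freqs:
            break  # every word is already a single symbol
        best = max(pair_freqs, key=pair_freqs.get)  # first maximum wins ties
        merges.append(best)
        # Apply the new merge rule to every word's current split.
        for word in list(splits):
            symbols, merged, i = splits[word], [], 0
            while i < len(symbols):
                if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == best:
                    merged.append(symbols[i] + symbols[i + 1])
                    i += 2
                else:
                    merged.append(symbols[i])
                    i += 1
            splits[word] = tuple(merged)
    return merges


corpus = "low " * 5 + "lower " * 2 + "newest " * 6 + "widest " * 3
print(train_bpe(corpus, 5))
```

On this toy corpus the first merge is `("e", "s")` (tied with `("s", "t")` and broken by first occurrence), followed by `("es", "t")`, since both pairs occur in "newest" and "widest". The learned list of merges, together with the base characters, is the vocabulary.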

A Hugging Face video walks through byte pair encoding, explaining the subword tokenization algorithm, how to train it, and how text is tokenized with it. Byte-level BPE first needs the text as bytes. As the Python Unicode HOWTO puts it, "The rules for translating a Unicode string into a sequence of bytes are called a character encoding, or just an encoding." In Python, `str.encode()` uses UTF-8 by default, so this conversion is handled natively. By learning to merge frequent character or byte pairs from a large corpus, BPE builds a vocabulary that handles large amounts of text efficiently, avoids out-of-vocabulary (OOV) issues (completely so in the byte-level variant, since every string is a sequence of the 256 possible byte values), and allows control over the balance between vocabulary size and sequence length. Below is a comprehensive article that is both easy to understand and covers advanced details of byte pair encoding (BPE). It uses real-life analogies, step-by-step explanations, and a…
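Because the base vocabulary of byte-level BPE is the 256 values produced by UTF-8 encoding, any input can be tokenized without an unknown token. The sketch below illustrates this under assumptions: it skips pre-tokenization, numbers new tokens from 256 upward, tokenizes new text by applying merges in the order they were learned, and uses hypothetical function names (`merge`, `train`, `encode`, `decode`) that are not the API of any particular library.

```python
from collections import Counter


def merge(ids: list[int], pair: tuple[int, int], new_id: int) -> list[int]:
    """Replace every non-overlapping occurrence of `pair` with `new_id`."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train(text: str, vocab_size: int) -> dict[tuple[int, int], int]:
    """Learn merges until the vocabulary (256 bytes + merges) reaches vocab_size."""
    ids = list(text.encode("utf-8"))  # base symbols: byte values 0..255
    merges: dict[tuple[int, int], int] = {}
    for new_id in range(256, vocab_size):
        counts = Counter(zip(ids, ids[1:]))
        if not counts:
            break  # the whole text is already a single token
        pair = max(counts, key=counts.get)
        merges[pair] = new_id
        ids = merge(ids, pair, new_id)
    return merges


def encode(text: str, merges: dict[tuple[int, int], int]) -> list[int]:
    """Tokenize new text by applying learned merges, earliest-learned first."""
    ids = list(text.encode("utf-8"))
    while len(ids) >= 2:
        candidates = [p for p in zip(ids, ids[1:]) if p in merges]
        if not candidates:
            break
        pair = min(candidates, key=merges.get)  # lowest id = learned earliest
        ids = merge(ids, pair, merges[pair])
    return ids


def decode(ids: list[int], merges: dict[tuple[int, int], int]) -> str:
    """Map token ids back to bytes, then decode the bytes as UTF-8."""
    vocab = {i: bytes([i]) for i in range(256)}
    for (a, b), new_id in merges.items():  # dicts preserve merge order
        vocab[new_id] = vocab[a] + vocab[b]
    return b"".join(vocab[i] for i in ids).decode("utf-8", errors="replace")


corpus = "the cat sat on the mat and the cat ate the rat " * 3
merges = train(corpus, 300)
ids = encode("unseen: naïve café 🙂", merges)
print(ids, decode(ids, merges))
```

Characters never seen in training (such as the accented letters and the emoji) simply fall back to their UTF-8 bytes. Raising `vocab_size` adds merges, which can only shorten the encoded sequences at the cost of a larger vocabulary, which is the trade-off between vocabulary size and sequence length.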


