🚀 Inference Processing: The Runway of LLM Apps

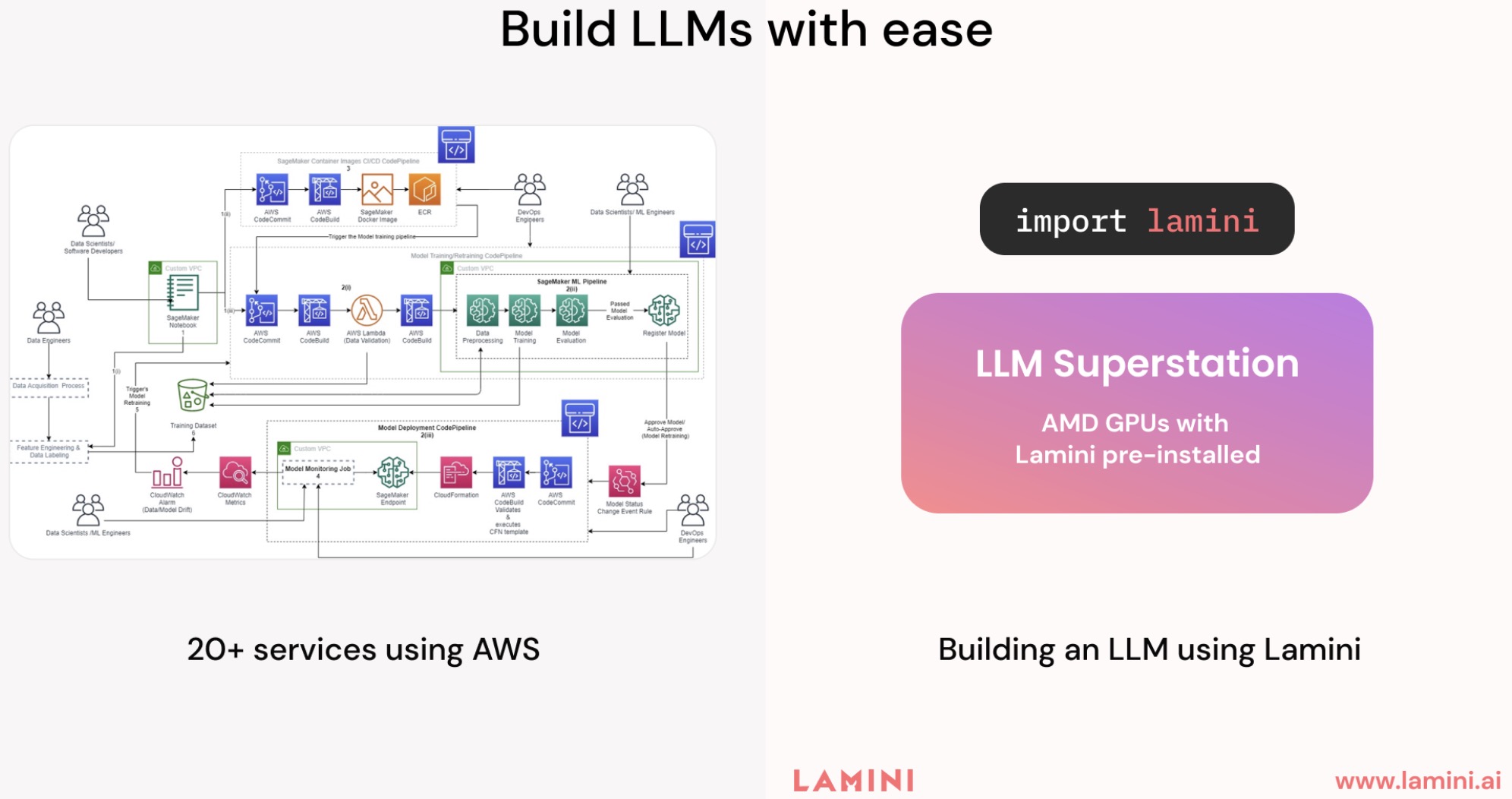

How Does LLM Inference Work? | LLM Inference Handbook

While dense transformer architectures dominated early LLM development, two emerging paradigms are fundamentally reshaping the computational characteristics of LLM inference.

This repository breaks down the LLM pipeline step by step, helping you understand how AI models process, generate, and optimize text responses. Instead of treating LLMs as a black box, this framework reverse engineers each component, giving both high-level intuition and technical deep dives.

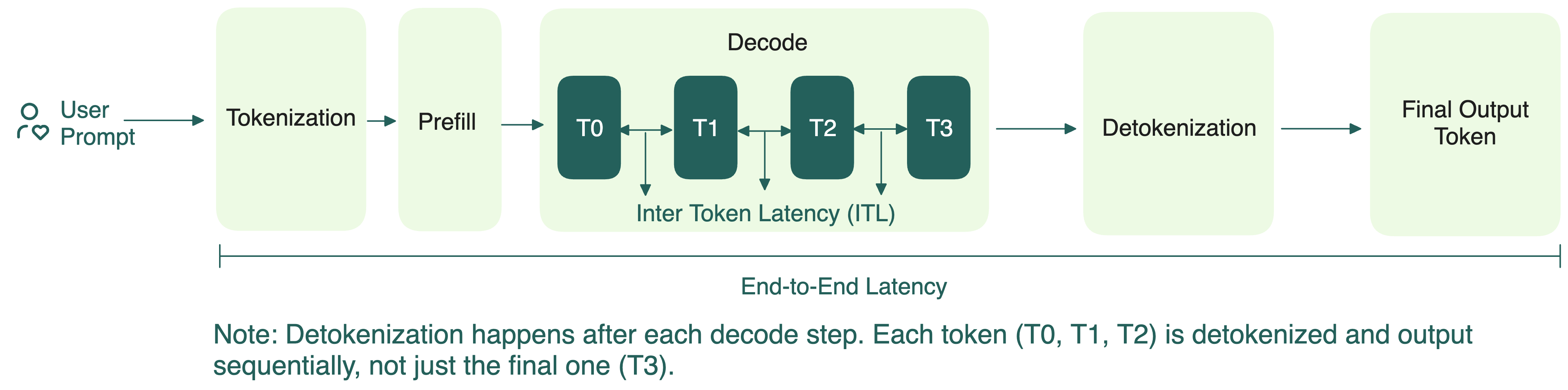

Decoding LLM Inference: A Deep Dive Into Workloads, Optimization, and …

Text Generation Inference (TGI) is a toolkit for deploying and serving large language models (LLMs). TGI enables high-performance text generation for the most popular open-source LLMs, including Llama, Falcon, StarCoder, BLOOM, GPT-NeoX, and T5. It implements many optimizations and features.

What is llmfit? It automatically detects your CPU, RAM, and GPU, compares them against a curated LLM database, and recommends models that fit ([docs.rs] [1]). Think of it as "PCPartPicker, but for local LLMs." Local AI adoption fails mostly because of hardware mismatch.

Overview of popular LLM inference performance metrics. Time to first token (TTFT) shows how long a user needs to wait before seeing the model's output: it is the time from submitting the query to receiving the first token (if the response is not empty).

Inference in AI refers to the process of drawing logical conclusions, predictions, or decisions based on available information, often using predefined rules, statistical models, or machine learning algorithms.
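The metric described above, the time from submitting a query to receiving the first token (commonly abbreviated TTFT), can be measured around any streaming client. The following is a minimal sketch, not a definitive benchmark harness: `measure_ttft` and `fake_stream` are hypothetical names introduced here, and `fake_stream` is a stand-in that simulates a model with `time.sleep` rather than calling a real serving API.

```python
import time
from typing import Callable, Iterable, Iterator, Optional, Tuple


def measure_ttft(
    start_stream: Callable[[], Iterable[str]],
) -> Tuple[Optional[float], float, int]:
    """Time one streamed request.

    Returns (ttft_seconds, total_seconds, token_count). ttft_seconds is None
    when the response is empty, since there is no first token to wait for.
    """
    start = time.perf_counter()  # the moment the query is submitted
    ttft: Optional[float] = None
    count = 0
    for _token in start_stream():
        if ttft is None:
            # The first token has arrived: record the wait.
            ttft = time.perf_counter() - start
        count += 1
    total = time.perf_counter() - start
    return ttft, total, count


def fake_stream(
    prefill_delay: float = 0.05,
    per_token_delay: float = 0.01,
    n_tokens: int = 5,
) -> Iterator[str]:
    """Hypothetical stand-in for a streaming LLM client (assumption)."""
    time.sleep(prefill_delay)  # simulated prompt processing
    for i in range(n_tokens):
        if i > 0:
            time.sleep(per_token_delay)  # simulated per-token decode time
        yield f"tok{i}"


if __name__ == "__main__":
    ttft, total, count = measure_ttft(fake_stream)
    print(f"TTFT: {ttft:.3f}s, total: {total:.3f}s, tokens: {count}")
```

To time a real deployment, replace `fake_stream` with a function that sends the request and yields tokens as they arrive. The timer starts before the request is issued so that network and queueing delay are included in the measurement.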

LLM Inference Acceleration: Continuous Batching, Sarathi, Efficient LLM …

In this blog post series, I will walk you through the different aspects and challenges of LLM inference. Measuring the inference performance of large language models (LLMs) is crucial to understanding how effectively they respond to input prompts and produce outputs in real-world applications.

Inference covers the most widely used AI and machine learning (ML) workloads and use cases. On consumer devices, common AI workloads like object detection and facial recognition, as well as text generation and summarization, are all inference.

Learn whether LLM inference is compute bound or memory bound to fully utilize GPU power, and get insights on better GPU resource utilization.
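One common way to reason about the compute-bound versus memory-bound question is a roofline-style estimate: compare the arithmetic intensity of a decode step (FLOPs per byte moved) with the hardware's ratio of peak FLOP/s to memory bandwidth. The sketch below is a rough back-of-the-envelope model under stated assumptions, not a profiler: it assumes a dense model where each generated token costs about 2 FLOPs per parameter, counts only weight reads, and ignores KV cache and activation traffic. The function names and the hardware figures are hypothetical and illustrative only.

```python
def decode_arithmetic_intensity(batch_size: int, bytes_per_param: float) -> float:
    """FLOPs per byte for one decode step of a dense model (simplified).

    Assumption: about 2 FLOPs per parameter per token, and the weights are
    read from memory once per step regardless of batch size, so the parameter
    count cancels out of the ratio.
    """
    return 2.0 * batch_size / bytes_per_param


def ridge_point(peak_flops: float, mem_bandwidth: float) -> float:
    """Intensity (FLOPs per byte) at which compute and memory limits meet."""
    return peak_flops / mem_bandwidth


def classify(
    batch_size: int,
    bytes_per_param: float,
    peak_flops: float,
    mem_bandwidth: float,
) -> str:
    """Label a decode step as memory-bound or compute-bound under this model."""
    intensity = decode_arithmetic_intensity(batch_size, bytes_per_param)
    if intensity < ridge_point(peak_flops, mem_bandwidth):
        return "memory-bound"
    return "compute-bound"


if __name__ == "__main__":
    # Illustrative accelerator, not a real product: 300 TFLOP/s, 2 TB/s.
    peak, bandwidth = 300e12, 2e12
    for batch in (1, 16, 512):
        # 2 bytes per parameter corresponds to 16-bit weights.
        print(batch, classify(batch, 2.0, peak, bandwidth))
```

Under these assumptions, small batches sit far below the ridge point, which is why batching more requests per step is a common route to better GPU utilization.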

LLM Inference Benchmarking: How Much Does Your LLM Inference Cost?

Mastering LLM Techniques: Inference Optimization | NVIDIA Technical …

LLM Inference Hardware: Emerging From NVIDIA's Shadow
