🐍 Testing a Machine Learning Model with K-Fold Cross-Validation

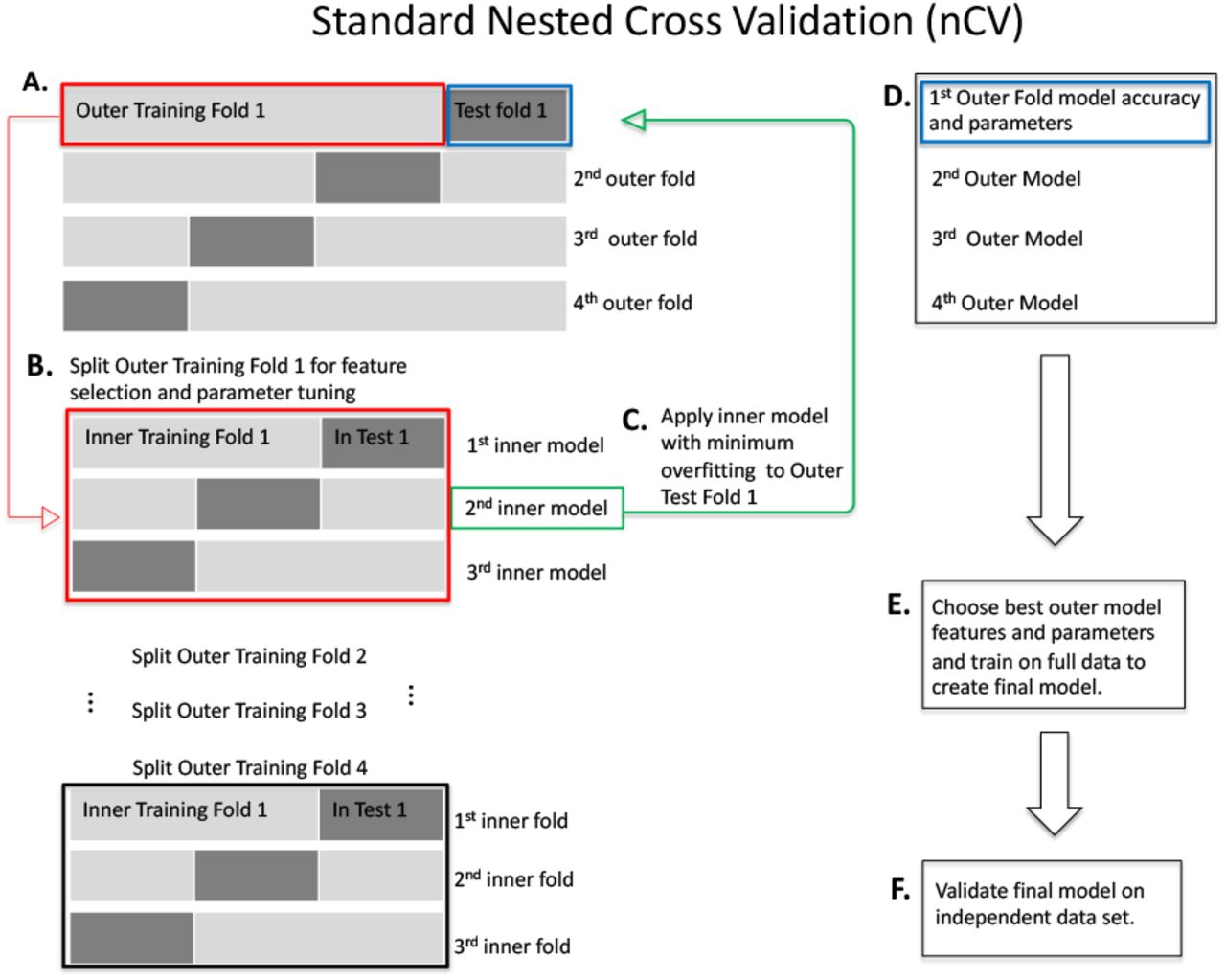

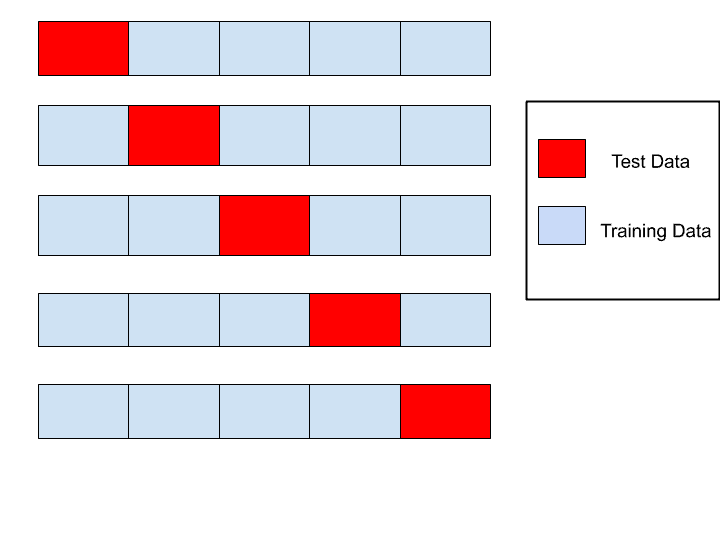

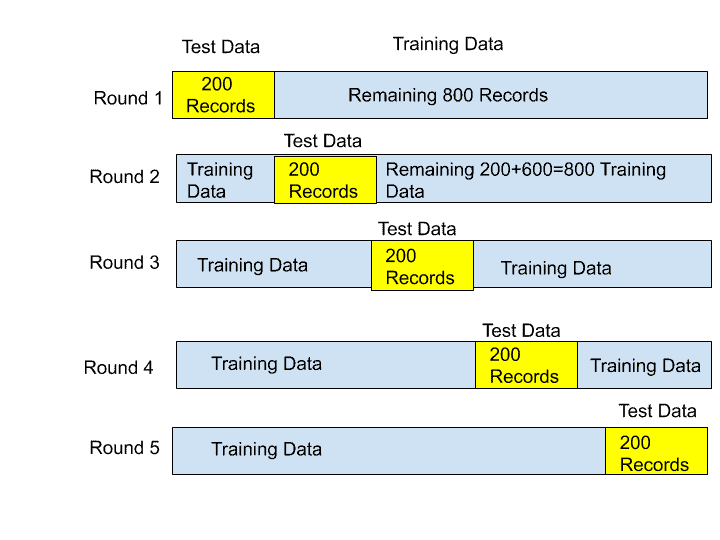

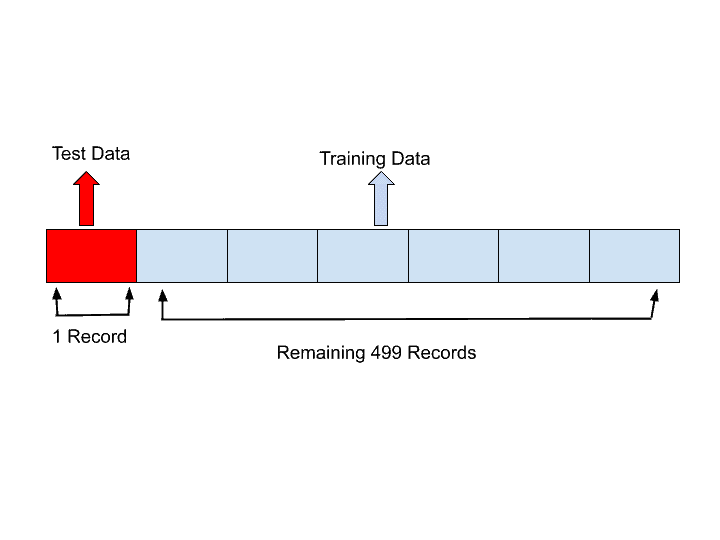

K-Fold Cross-Validation Technique in Machine Learning

Explanation: this code runs k-fold cross-validation with a RandomForestClassifier on the Iris dataset. It divides the data into 5 folds; in each round it trains the model on four of the folds and checks its accuracy on the remaining one. K-fold cross-validation is a resampling technique used to evaluate machine learning models by splitting the dataset into k folds of (approximately) equal size. The model is trained on k - 1 folds and validated on the remaining fold, and the process is repeated k times so that every fold serves as the validation set exactly once.
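The procedure described above can be sketched roughly as follows. This is a minimal illustration, assuming scikit-learn is installed; the shuffling, the `random_state` values, and `n_estimators=100` are assumptions made here for reproducibility and are not taken from the original code.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import KFold, cross_val_score

# Load the Iris features (X) and class labels (y).
X, y = load_iris(return_X_y=True)

# Iris is stored sorted by class, so shuffle before cutting it into 5 folds
# (assumption: the original code may or may not have shuffled).
cv = KFold(n_splits=5, shuffle=True, random_state=42)
model = RandomForestClassifier(n_estimators=100, random_state=42)

# For each fold: fit on the other 4 folds, then score accuracy on the held-out fold.
scores = cross_val_score(model, X, y, cv=cv, scoring="accuracy")

print("Per-fold accuracy:", scores)
print("Mean accuracy:", scores.mean())
```

The mean of the five scores is the usual single-number summary, and the spread between folds gives a rough sense of how stable the estimate is.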

K-Fold Cross-Validation in Machine Learning (GeeksforGeeks)

What is k-fold cross-validation? K-fold cross-validation is a popular technique for evaluating the performance of machine learning models. It is especially useful when you have limited data and want to make the most of it while estimating how well your model will generalize to new, unseen data.

In scikit-learn, the KFold class provides train/test indices to split data into train and test sets. It splits the dataset into k consecutive folds (without shuffling by default). Each fold is then used once as the validation set while the k - 1 remaining folds form the training set.

Let us use k-fold cross-validation to improve on the simpler holdout validation performed when we built a single boosted tree to predict the output variable in an artificially generated data set.

This guide has shown you how k-fold cross-validation is a powerful tool for evaluating machine learning models. It is more reliable than a single train/test split because it tests the model on every part of your data, giving you more confidence that it will work well on unseen data too.
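To make the splitting rule concrete, here is a small pure-Python sketch of consecutive, unshuffled folds. The helper `k_fold_indices` is a hypothetical name introduced for illustration; it mimics the behaviour described above (the first `n_samples % k` folds get one extra sample) and is not scikit-learn's own implementation.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs for k consecutive, unshuffled folds."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must be between 2 and n_samples")
    # When n_samples is not divisible by k, the first `extra` folds get one more sample.
    base, extra = divmod(n_samples, k)
    start = 0
    for fold in range(k):
        size = base + (1 if fold < extra else 0)
        stop = start + size
        val_idx = list(range(start, stop))
        # Everything outside the validation window is training data.
        train_idx = list(range(0, start)) + list(range(stop, n_samples))
        yield train_idx, val_idx
        start = stop


folds = list(k_fold_indices(n_samples=10, k=3))
for i, (train_idx, val_idx) in enumerate(folds):
    print(f"fold {i}: validate on {val_idx}, train on {train_idx}")
```

With 10 samples and 3 folds, the validation sets have sizes 4, 3 and 3, and every sample is validated on exactly once.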

K-Fold Cross-Validation in Machine Learning: How Does K-Fold Work?

K-fold cross-validation is a key method in machine learning for evaluating model performance accurately and checking how stable that performance is. It tests how well a model performs by splitting your dataset into k equal parts. As an extension of the simple holdout approach, it provides a more robust evaluation: it addresses the limitations of a single train/test split by systematically partitioning the dataset into k subsets, or folds, so the estimate does not hinge on one arbitrary split.

Cross-validation is also where the hyperparameters of commonly used machine learning models are typically optimized: each candidate setting is scored across the folds, and the setting with the best average score is kept.
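As one possible illustration of optimizing hyperparameters during cross-validation, the sketch below uses scikit-learn's `GridSearchCV` with a random forest on Iris. The model, the parameter grid, and the choice of stratified folds are assumptions picked for brevity, not settings from the source.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import GridSearchCV, StratifiedKFold

X, y = load_iris(return_X_y=True)

# Hypothetical grid: 2 x 2 = 4 candidate settings.
param_grid = {"n_estimators": [50, 100], "max_depth": [2, None]}

# Stratified folds keep the class proportions similar in every fold.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)

search = GridSearchCV(
    RandomForestClassifier(random_state=0),
    param_grid,
    cv=cv,
    scoring="accuracy",
)
# Each candidate is trained and scored on all 5 folds; the best mean score wins.
search.fit(X, y)

print("Best parameters:", search.best_params_)
print("Best mean CV accuracy:", search.best_score_)
```

Because the same folds are used to pick the winner, `best_score_` tends to be slightly optimistic; a separate held-out test set or nested cross-validation gives a cleaner final estimate.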

